We recently saw a financial services firm deploy an AI agent to automate preliminary loan assessments. According to the client, the agent worked well for six weeks. Then a model update subtly shifted how the agent weighed certain income categories. Nobody noticed, because there was no behavioral monitoring, only input/output logging that looked normal on the surface. Three months later, an internal audit discovered that the agent had been systematically underweighting freelance income in a way that created disparate impact across applicant demographics. The firm was left with a compliance exposure that a policy document sitting in SharePoint did absolutely nothing to prevent.

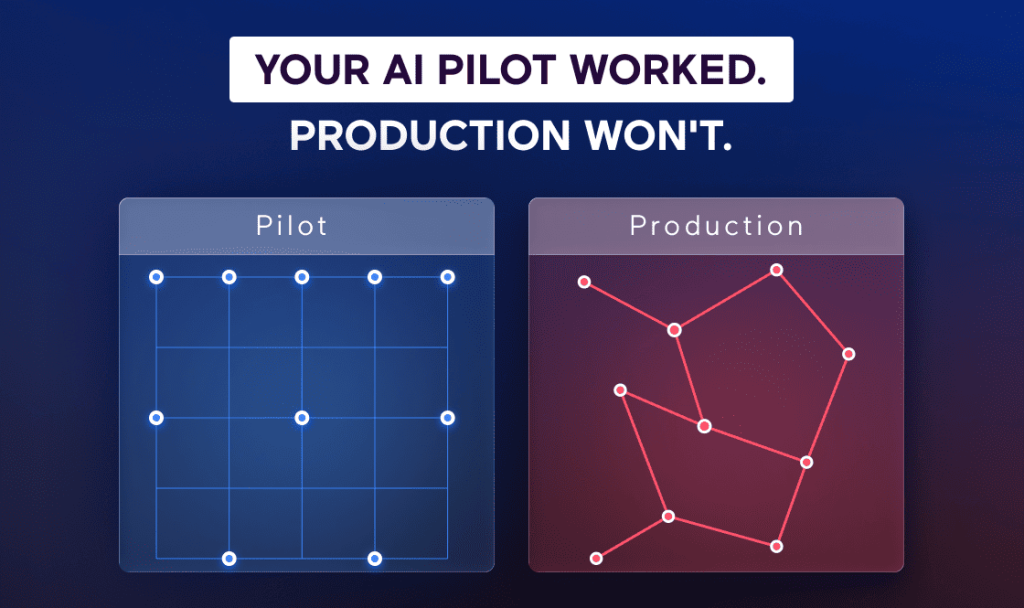

This isn’t a hypothetical edge case. Digging deeper, we learned that eighty percent of organizations deploying AI agents report unexpected or risky behaviors in production. The EU AI Act introduces fines of up to €35 million or 7% of worldwide annual turnover, whichever is higher. Colorado’s AI Act takes effect in 2026. Board-level questions about AI governance are no longer theoretical; they’re blocking deployment decisions right now.

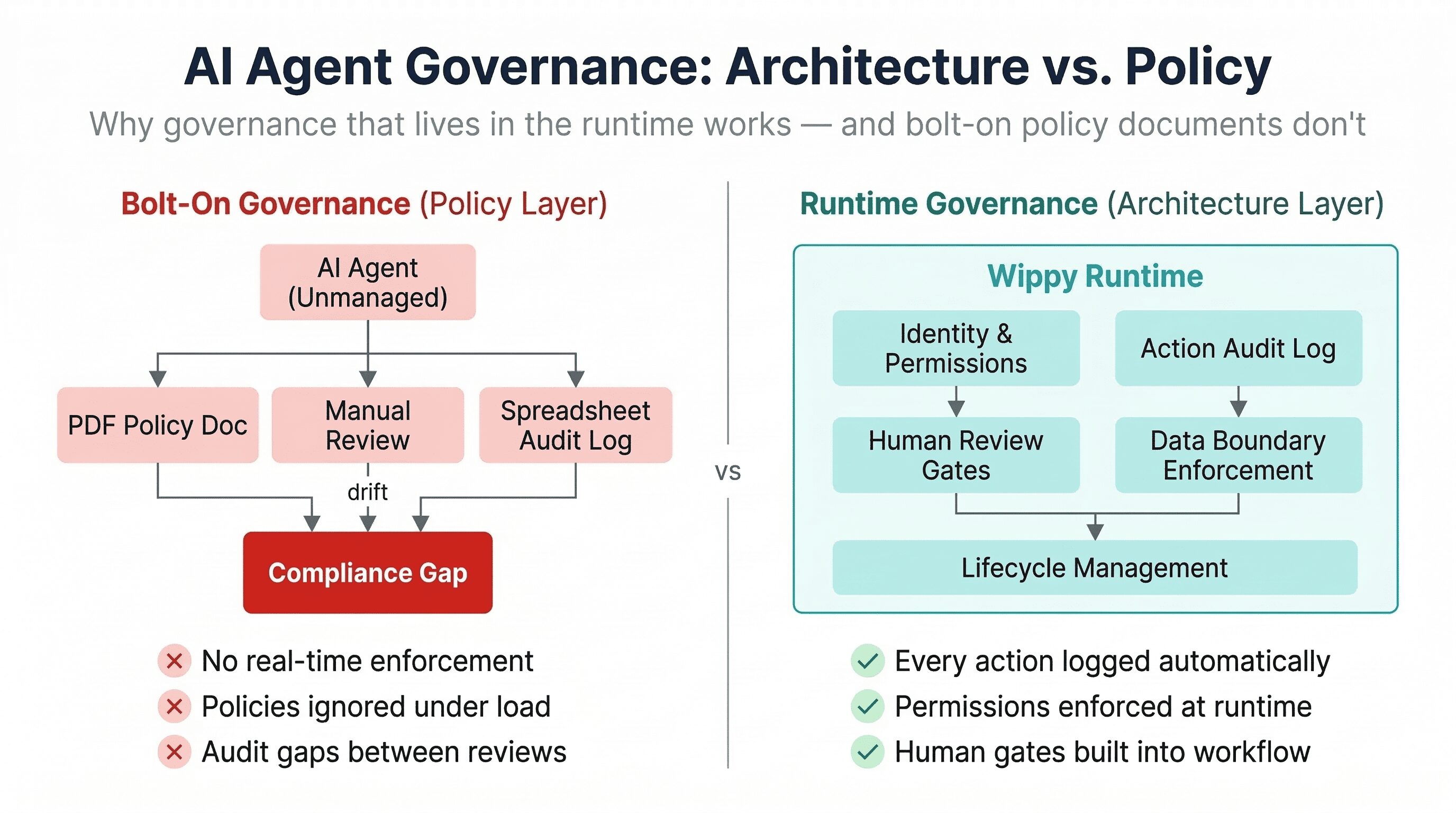

Governance for AI agents can’t be solved by writing policies. It’s an architecture problem. Unless governance constraints such as identity, permissions, audit trails, human review, and data boundaries are enforced at the runtime layer, they will be violated under load, ignored during rapid iteration, and impossible to audit after the fact.

Key Takeaways:

- Policy-layer governance fails for AI agents because agents operate at speeds and volumes that outpace human review cycles.

- Effective agent governance requires five architectural capabilities enforced at runtime: identity management, action-level audit logging, human review gates, data boundary enforcement, and lifecycle controls.

- Bolt-on governance gets more expensive as agent count grows; runtime-native governance scales with the platform.

- The governance question is now a gating factor on enterprise AI purchases.

Why policy documents don’t govern anything

Most organizations approach AI agent governance the same way they’ve handled IT governance for decades: write a policy, form a review committee, schedule periodic audits, hope compliance holds between checkpoints. This works for systems that change slowly and operate within predictable boundaries.

AI agents break every assumption this model depends on. An agent might execute thousands of actions per day, each following a different reasoning path. A model update can change behavior without any code change. An agent processing customer data in one workflow might be reused in a different workflow with different compliance requirements, and the policy document has no way of knowing this happened.

The fundamental issue is enforcement timing. Policy-layer governance is retrospective. It discovers violations after they occur. Runtime-layer governance is preventive: it blocks unauthorized actions before they execute. For a system that takes consequential actions autonomously, the difference between “we’ll catch it in the quarterly review” and “the system won’t allow it” is the difference between a compliance finding and a compliance architecture.

We’ve watched this play out across multiple AI agent automation engagements at Spiral Scout. Teams that treat governance as a documentation exercise consistently hit the same wall. The policy says one thing, the system does another, and the gap only surfaces when something consequential breaks.

Five capabilities that actually constitute governance

Governance for AI agents isn’t a checkbox. It’s a set of architectural capabilities that operate at the runtime layer – enforced by the system itself, not by humans remembering to follow a process.

Identity and permissions means every agent operates under a defined identity with explicit scopes. Agent A can read customer records but cannot write to billing. These boundaries are enforced at invocation time. When an agent attempts an out-of-scope action, the runtime blocks it and logs the attempt. In practice, most agent systems still run with a single service account that has broad permissions – the “root access” antipattern carried over from early prototypes.
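The invocation-time check described above can be sketched in a few lines of Python. This is a minimal, hypothetical illustration: `ToolRuntime`, `AgentIdentity`, `ScopeViolation`, and scope strings such as `customers:read` are names invented for this example, not any particular product’s API. The property it shows is that the check runs inside the runtime, before the tool executes, so the agent has no way to skip it.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


class ScopeViolation(Exception):
    """Raised when an agent invokes a tool outside its granted scopes."""


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    scopes: frozenset[str]  # hypothetical scope strings, e.g. {"customers:read"}


@dataclass
class ToolRuntime:
    # Hypothetical registry: tool name -> (required scope, implementation)
    tools: dict[str, tuple[str, Callable[..., Any]]]
    blocked_attempts: list[tuple[str, str, str]] = field(default_factory=list)

    def invoke(self, agent: AgentIdentity, tool: str, **kwargs: Any) -> Any:
        required_scope, impl = self.tools[tool]
        if required_scope not in agent.scopes:
            # Blocked before the tool runs; the attempt is recorded for review.
            self.blocked_attempts.append((agent.agent_id, tool, required_scope))
            raise ScopeViolation(f"{agent.agent_id} lacks {required_scope!r} for {tool}")
        return impl(**kwargs)


runtime = ToolRuntime(tools={
    "read_customer": ("customers:read", lambda customer_id: {"id": customer_id}),
    "write_billing": ("billing:write", lambda customer_id, amount: "charged"),
})
agent_a = AgentIdentity("agent-a", frozenset({"customers:read"}))

record = runtime.invoke(agent_a, "read_customer", customer_id="c-42")
try:
    runtime.invoke(agent_a, "write_billing", customer_id="c-42", amount=100)
except ScopeViolation as err:
    print("blocked:", err)
```

A production system would back these scopes with a real identity provider and write blocked attempts to durable storage; the in-memory list here only marks where that record is made.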

Action-level audit logging means every decision the agent makes is recorded: what data it accessed, what tools it invoked, what reasoning path it followed. Not just the final result – the intermediate steps. The difference between “the agent approved the loan” and “the agent accessed income data, called risk scoring with parameters X, received score Y, compared against threshold Z, and approved.” The second version is what a compliance auditor actually needs.
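As a sketch of what recording the intermediate steps can look like, the Python below wraps each tool in a recorder so that every call, its inputs, and its output (or error) land in an ordered trail, followed by an explicit decision record. `AuditTrail`, `traced`, and the toy `risk_score` formula are hypothetical illustrations; capturing the model’s own reasoning text would depend on the agent framework in use.

```python
import functools
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Any, Callable


@dataclass
class AuditEvent:
    run_id: str
    step: int
    kind: str  # "tool_call", "tool_error", or "decision"
    name: str
    inputs: dict[str, Any]
    output: Any
    timestamp: float


@dataclass
class AuditTrail:
    run_id: str
    events: list[AuditEvent] = field(default_factory=list)

    def record(self, kind: str, name: str, inputs: dict[str, Any], output: Any) -> None:
        self.events.append(
            AuditEvent(self.run_id, len(self.events), kind, name, inputs, output, time.time())
        )

    def traced(self, fn: Callable[..., Any]) -> Callable[..., Any]:
        """Wrap a tool so every call is recorded with its inputs and its result or error."""
        @functools.wraps(fn)
        def wrapper(**kwargs: Any) -> Any:
            try:
                result = fn(**kwargs)
            except Exception as exc:
                self.record("tool_error", fn.__name__, kwargs, repr(exc))
                raise
            self.record("tool_call", fn.__name__, kwargs, result)
            return result
        return wrapper

    def to_jsonl(self) -> str:
        # One JSON object per line: an append-friendly format for log storage.
        return "\n".join(json.dumps(asdict(e)) for e in self.events)


trail = AuditTrail(run_id="loan-123")


@trail.traced
def fetch_income(applicant_id: str) -> dict[str, int]:
    return {"salary": 52000, "freelance": 18000}


@trail.traced
def risk_score(salary: int, freelance: int) -> float:
    return round((salary + freelance) / 100000, 2)  # toy formula for illustration


income = fetch_income(applicant_id="a-7")
score = risk_score(salary=income["salary"], freelance=income["freelance"])
threshold = 0.6
decision = "approved" if score >= threshold else "referred"
trail.record("decision", "approve_loan", {"score": score, "threshold": threshold}, decision)
print(trail.to_jsonl())
```

The wrapper accepts keyword arguments only, so every recorded input carries a name an auditor can read.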

Human review gates mean the orchestration layer can pause execution at defined points and route decisions to a human reviewer with full context. This requires durable execution: a simple notification won’t survive a server restart or a reviewer who takes four hours to respond. The agent’s workflow halts durably, preserves its state, and resumes only after the human decision is captured.
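A minimal sketch of a durable review gate, assuming a SQLite file as the state store: the paused workflow’s state is written to disk, so it survives a process restart, and `resume` returns nothing until a reviewer’s decision has been recorded. `ReviewGate` and its table layout are hypothetical; a dedicated durable-execution engine would also handle retries, timeouts, and replay, which this sketch omits.

```python
import json
import os
import sqlite3
import tempfile
from typing import Optional


class ReviewGate:
    """Persists paused workflow state so a pending review survives restarts."""

    def __init__(self, db_path: str) -> None:
        self.conn = sqlite3.connect(db_path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS pending_reviews ("
            " workflow_id TEXT PRIMARY KEY, state TEXT NOT NULL, decision TEXT)"
        )
        self.conn.commit()

    def pause(self, workflow_id: str, state: dict) -> None:
        self.conn.execute(
            "INSERT INTO pending_reviews (workflow_id, state) VALUES (?, ?)",
            (workflow_id, json.dumps(state)),
        )
        self.conn.commit()

    def submit_decision(self, workflow_id: str, decision: str) -> None:
        self.conn.execute(
            "UPDATE pending_reviews SET decision = ? WHERE workflow_id = ?",
            (decision, workflow_id),
        )
        self.conn.commit()

    def resume(self, workflow_id: str) -> Optional[tuple[dict, str]]:
        row = self.conn.execute(
            "SELECT state, decision FROM pending_reviews WHERE workflow_id = ?",
            (workflow_id,),
        ).fetchone()
        if row is None:
            raise KeyError(workflow_id)
        state, decision = row
        if decision is None:
            return None  # no human decision yet: the workflow stays halted
        return json.loads(state), decision


path = os.path.join(tempfile.mkdtemp(), "reviews.db")
gate = ReviewGate(path)
gate.pause("loan-123", {"step": "final_approval", "score": 0.7})
gate.conn.close()  # simulate a server restart

gate = ReviewGate(path)
waiting = gate.resume("loan-123")
gate.submit_decision("loan-123", "approved")
state, decision = gate.resume("loan-123")
```

Because the state lives in the database rather than in process memory, the second `ReviewGate` picks up exactly where the first one stopped.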

Data boundary enforcement means the runtime controls what data each agent can access at the tenant, user, and document level. In multi-tenant environments, this prevents Agent A, running on behalf of Customer X, from accessing Customer Y’s data, and the restriction is enforced at the infrastructure layer before any data reaches the agent’s context window.
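The ordering matters more than the mechanism. In this hypothetical Python sketch, `TenantScopedStore` applies tenant and user filters before any relevance matching, and only the surviving documents are assembled into the agent’s context. The class and field names are illustrative assumptions; at the infrastructure layer the same filter would typically live in the data store itself (for example, database row-level security) rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    tenant_id: str
    allowed_users: frozenset[str]
    text: str


@dataclass(frozen=True)
class RequestContext:
    """Who the agent is acting for; set by the runtime, not chosen by the agent."""
    tenant_id: str
    user_id: str


class TenantScopedStore:
    def __init__(self, documents: list[Document]) -> None:
        self._documents = list(documents)

    def search(self, ctx: RequestContext, query: str) -> list[Document]:
        # Tenant and user filters run before relevance matching,
        # so out-of-scope documents are never even candidates.
        visible = [
            d for d in self._documents
            if d.tenant_id == ctx.tenant_id and ctx.user_id in d.allowed_users
        ]
        return [d for d in visible if query.lower() in d.text.lower()]


def build_agent_context(store: TenantScopedStore, ctx: RequestContext, query: str) -> str:
    """Only documents that passed the boundary check reach the prompt."""
    return "\n".join(d.text for d in store.search(ctx, query))


store = TenantScopedStore([
    Document("d1", "customer-x", frozenset({"alice"}), "Customer X invoice: 1,200 EUR"),
    Document("d2", "customer-y", frozenset({"bob"}), "Customer Y invoice: 9,800 EUR"),
    Document("d3", "customer-x", frozenset({"carol"}), "Customer X invoice draft (restricted)"),
])
context = build_agent_context(store, RequestContext("customer-x", "alice"), "invoice")
```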

Lifecycle controls mean agents can be versioned, paused, rolled back, and retired as managed entities. When a compliance issue requires pulling an agent offline immediately, there’s a mechanism to do it without taking down the platform. Agents aren’t fire-and-forget scripts. They’re managed services with explicit states.
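Treating agents as managed entities can be modeled as a small state machine plus a version history. The registry below is a hypothetical sketch (`AgentRegistry`, the three states, and the version labels are assumptions for illustration). It shows pausing one agent without touching the rest of the platform, rolling back to a previous version while keeping the history, and making retirement irreversible.

```python
from enum import Enum


class AgentState(Enum):
    ACTIVE = "active"
    PAUSED = "paused"
    RETIRED = "retired"


# Allowed lifecycle transitions; RETIRED is terminal.
TRANSITIONS = {
    AgentState.ACTIVE: {AgentState.PAUSED, AgentState.RETIRED},
    AgentState.PAUSED: {AgentState.ACTIVE, AgentState.RETIRED},
    AgentState.RETIRED: set(),
}


class AgentRegistry:
    def __init__(self) -> None:
        self._versions: dict[str, list[str]] = {}
        self._current: dict[str, int] = {}
        self._state: dict[str, AgentState] = {}

    def deploy(self, name: str, version: str) -> None:
        if self._state.get(name) is AgentState.RETIRED:
            raise ValueError(f"{name} is retired")
        self._versions.setdefault(name, []).append(version)
        self._current[name] = len(self._versions[name]) - 1
        self._state.setdefault(name, AgentState.ACTIVE)

    def transition(self, name: str, new_state: AgentState) -> None:
        current = self._state[name]
        if new_state not in TRANSITIONS[current]:
            raise ValueError(f"cannot move {name} from {current.value} to {new_state.value}")
        self._state[name] = new_state

    def rollback(self, name: str) -> str:
        """Point at the previous version; history is kept, not deleted."""
        if self._current[name] == 0:
            raise ValueError(f"{name} has no earlier version")
        self._current[name] -= 1
        return self.current_version(name)

    def current_version(self, name: str) -> str:
        return self._versions[name][self._current[name]]

    def history(self, name: str) -> list[str]:
        return list(self._versions[name])

    def can_serve(self, name: str) -> bool:
        return self._state.get(name) is AgentState.ACTIVE


registry = AgentRegistry()
registry.deploy("loan-assessor", "v1")
registry.deploy("loan-assessor", "v2")                   # e.g. after a model update
registry.transition("loan-assessor", AgentState.PAUSED)  # pulled offline; other agents keep running
restored = registry.rollback("loan-assessor")
registry.transition("loan-assessor", AgentState.ACTIVE)
```

In the loan example from the introduction, a rollback like this could restore the pre-update behavior once the problem was found; noticing the problem in the first place still depends on action-level audit logging.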

Three ways governance breaks in real deployments

The teams that struggle aren’t ignoring governance. They’re implementing it in ways that can’t hold up under production conditions.

The first is the “audit gap cascade.” A team implements logging at the API gateway. They capture every request and response to the agent. Looks comprehensive on paper. In practice, it misses everything between the request and the response: internal tool calls, data retrieval steps, branching logic. When an auditor asks “why did the agent make this decision?”, the logs show what it decided, not how. Retrofitting decision-level instrumentation into a running agent pipeline typically costs more than building it right initially.

The second is “permissions erosion.” The team sets up proper scoping at launch. Three months later, a developer needs Agent A to also write to the CRM for a new feature. The governance process is a policy checklist, not a runtime constraint, so rather than going through it, the developer grants write access directly. Over six months, these incremental scope expansions produce an agent with far broader access than anyone intended. This is the privilege creep problem from traditional IAM, only faster, because agent capabilities change with every prompt and tool update.
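One way to turn the approved scope list into a runtime constraint is to compare live grants against a reviewed manifest and fail when they diverge. This is a minimal sketch under stated assumptions: the manifest format, `scope_drift`, and `enforce_manifest` are hypothetical, and we assume the manifest lives in version control so that changing it requires review.

```python
def scope_drift(approved: dict[str, set[str]], granted: dict[str, set[str]]) -> dict[str, set[str]]:
    """Per agent, return scopes granted in production but missing from the approved manifest."""
    drift: dict[str, set[str]] = {}
    for agent_id, scopes in granted.items():
        # Agents absent from the manifest have no approved scopes at all.
        unapproved = scopes - approved.get(agent_id, set())
        if unapproved:
            drift[agent_id] = unapproved
    return drift


def enforce_manifest(approved: dict[str, set[str]], granted: dict[str, set[str]]) -> None:
    """Fail loudly (e.g. in CI or at deploy time) instead of letting drift accumulate."""
    drift = scope_drift(approved, granted)
    if drift:
        details = ", ".join(f"{a}: {sorted(s)}" for a, s in sorted(drift.items()))
        raise PermissionError(f"unapproved scopes: {details}")


approved_manifest = {"agent-a": {"customers:read"}}
live_grants = {"agent-a": {"customers:read", "crm:write"}}
drift = scope_drift(approved_manifest, live_grants)
```

Run at deploy time, a check like this forces a grant such as the CRM write access above through review instead of letting it accumulate silently.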

The third is what Anton Titov, Spiral Scout’s CTO, describes as “governance theater”:

Runtime governance vs. bolt-on governance

The classification that matters isn’t “governed vs. ungoverned.” It’s where the governance logic lives.

| Governance Capability | Bolt-On (Policy Layer) | Runtime-Native (Architecture Layer) |
|---|---|---|
| Permissions | Manually configured; verified in periodic audits | Enforced at invocation; unauthorized actions blocked automatically |
| Audit trail | API-level input/output logs; gaps between checkpoints | Action-level recording of every decision, tool call, and data access |
| Human review | Notification outside workflow; no state preservation | Durable workflow pause; reviewer acts within execution context |
| Data boundaries | App-level filtering; depends on correct implementation | Infrastructure-level tenant isolation; enforced before data reaches agent |
| Lifecycle management | Manual tracking; rollback requires redeployment | Agent registry with version history, one-click rollback, controlled retirement |

Bolt-on governance costs more as agent count grows, because each new agent requires manual configuration and monitoring. Runtime-native governance scales with the platform: a newly added agent automatically inherits the governance constraints of the runtime.

This is the design philosophy behind Wippy, where governance capabilities are built into the runtime rather than layered on after deployment. A team shipping a new agent inherits production-grade governance by default. Critically, the system is designed so that clients own the IP and the infrastructure: governance is embedded in an architecture they control, not something they rent.
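To make the inheritance idea concrete without implying anything about Wippy’s actual API, here is a generic, hypothetical Python sketch: governance hooks are registered once on the runtime, and every agent registered afterward is invoked through them. `GovernedRuntime` and both hooks are invented for illustration.

```python
from typing import Any, Callable

# A hook sees every call before it runs; raising an exception blocks the call.
Hook = Callable[[str, str, dict[str, Any]], None]


class GovernedRuntime:
    def __init__(self, hooks: list[Hook]) -> None:
        self.hooks = hooks

    def register(self, agent_id: str, tools: dict[str, Callable[..., Any]]) -> Callable[..., Any]:
        """Return an invoker for this agent that always runs the runtime's hooks first."""
        def invoke(tool: str, **kwargs: Any) -> Any:
            for hook in self.hooks:
                hook(agent_id, tool, kwargs)
            return tools[tool](**kwargs)
        return invoke


audit_log: list[tuple[str, str]] = []


def audit_hook(agent_id: str, tool: str, args: dict[str, Any]) -> None:
    # Runs first, so blocked attempts are logged too.
    audit_log.append((agent_id, tool))


def deny_destructive(agent_id: str, tool: str, args: dict[str, Any]) -> None:
    if tool.startswith("delete_"):
        raise PermissionError(f"{agent_id}: {tool} requires human review")


runtime = GovernedRuntime(hooks=[audit_hook, deny_destructive])
support = runtime.register("support-bot", {
    "lookup_order": lambda order_id: {"order": order_id},
    "delete_account": lambda user_id: None,
})
billing = runtime.register("billing-bot", {"refund": lambda order_id, amount: amount})

support("lookup_order", order_id="o-1")
billing("refund", order_id="o-1", amount=25)
```

Adding a third agent requires no governance code of its own, which is the scaling property the comparison table describes.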

Regulation is accelerating faster than most teams realize

The EU AI Act is the most discussed regulatory pressure, but it’s far from the only one. Colorado’s AI Act targets “high-risk AI systems” making consequential consumer decisions. The SEC has signaled increased scrutiny of AI-assisted financial decisions. Healthcare organizations deploying AI agents for triage or prior authorization face HIPAA implications that existing compliance frameworks weren’t built for, because those frameworks assume human decision-makers.

The practical implication: the governance architecture you ship today needs to support audit requirements that don’t fully exist yet. Teams that can produce action-level audit trails for every agent decision from day one will be structurally better positioned than teams that reconstruct evidence after a regulatory inquiry. Building governance into the runtime early isn’t just cheaper. It’s the only approach that produces the historical record tomorrow’s regulations will require.

The governance you keep is the governance you build

The organizations that navigate the next wave of AI regulation successfully won’t have the thickest policy binders. They’ll be the ones whose agent systems produce clean, complete, action-level records of every decision automatically, without gaps. Governance at the architecture layer isn’t more expensive. It’s cheaper, because it scales with the system instead of requiring linear human effort for every new agent, every new workflow, and every new compliance requirement. The question isn’t whether to invest in governance. It’s whether to invest once in the runtime, or repeatedly in manual processes that were never designed for systems that think and act on their own.

Concerned that your AI agents can’t pass a compliance audit?

The gap between “we have a governance policy” and “our system enforces governance at runtime” is where compliance exposure lives. Spiral Scout’s AI Readiness Audit evaluates your agent infrastructure against the five runtime governance capabilities and maps what needs to change before your next deployment or your next audit, whichever comes first.