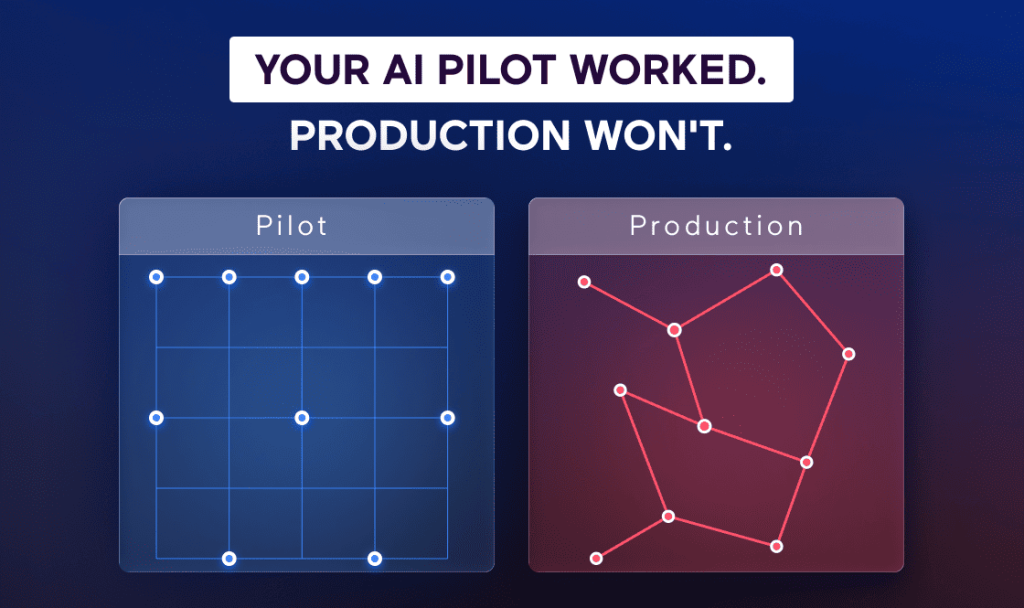

Every engineering team building AI agents eventually hits the same wall. The prototype works. The chain runs. The agent calls a tool, gets a response, and produces something useful. Then someone asks: “Can this run in production?” And the answer, if you’re honest, is usually “not yet.”

I’ve been shipping software for 16 years at Spiral Scout and building production agent systems for the last two on Wippy, the runtime my team created to solve this problem. We’re not anti-LangChain. We use it. But after deploying systems that manage legal contracts, generate industrial equipment quotes, and process thousands of concurrent workflows with hard deadlines, I can tell you that the framework you use to build an agent and the infrastructure you need to run it are two different concerns. Conflating them is where most projects go sideways.

This is not a features comparison. It’s a perspective from the production side of the fence – what changes when your agent needs to work every day, not just on demo day.

The Problem LangChain Solves Well

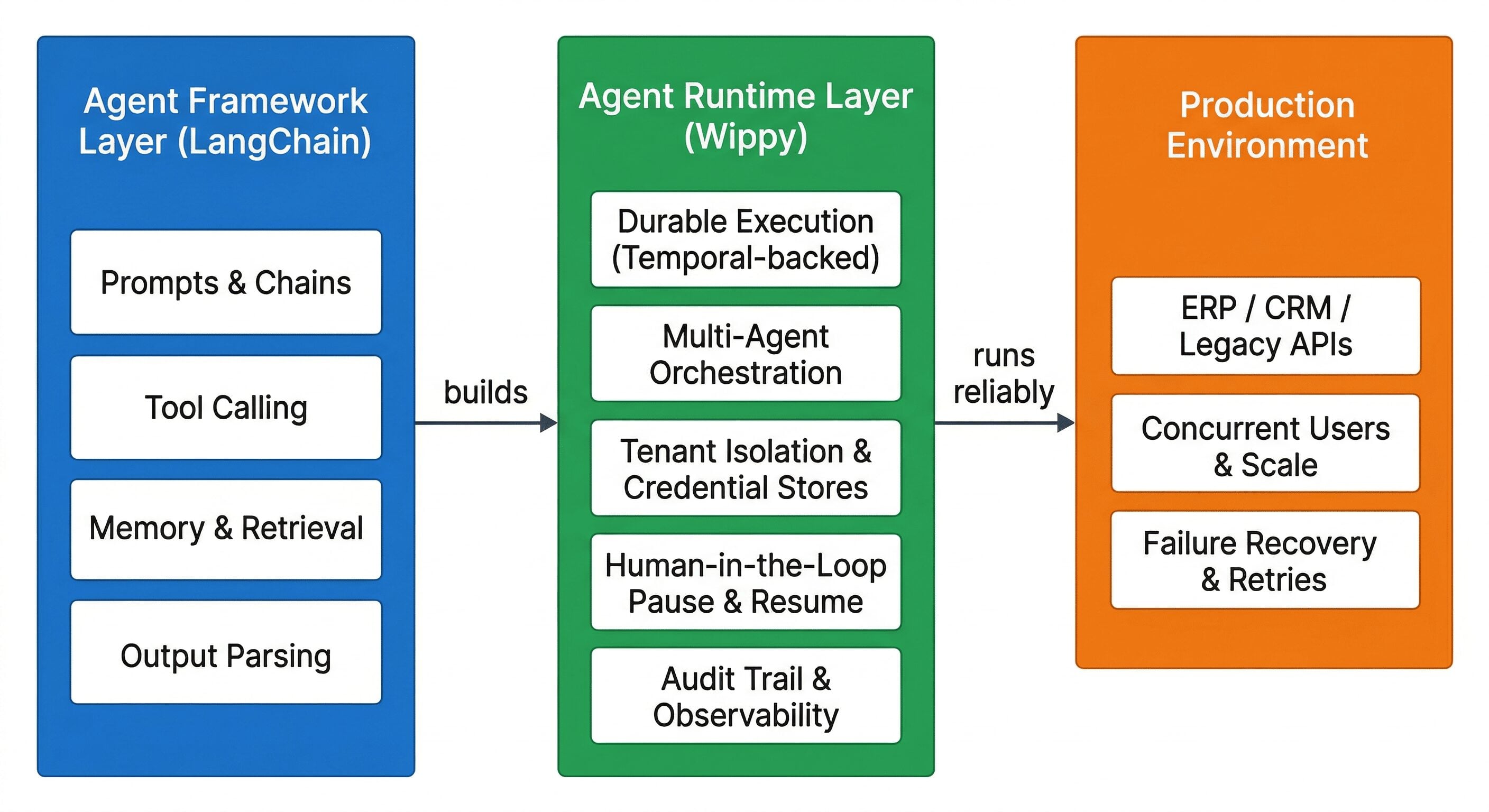

LangChain is a solid construction toolkit. It gives you clean abstractions for prompts, chains, memory, tool calling, and retrieval. If you need to wire up an agent that queries a vector store, calls an API, and returns a structured response, LangChain gets you there fast. LangGraph extends this by letting you define agent graphs with branching logic and state transitions. For prototyping and single-shot AI agent automation tasks, these tools are excellent and we have zero complaints about them in that context.

Where things get complicated is the transition from “this agent works on my machine” to “this agent runs our business process.” That transition is not about swapping out a library. It’s about confronting infrastructure problems that frameworks were not designed to solve. We wrote about this pattern extensively in our breakdown of modern AI agent architectures – the orchestration layer is where production success or failure gets decided.

What Production Actually Demands

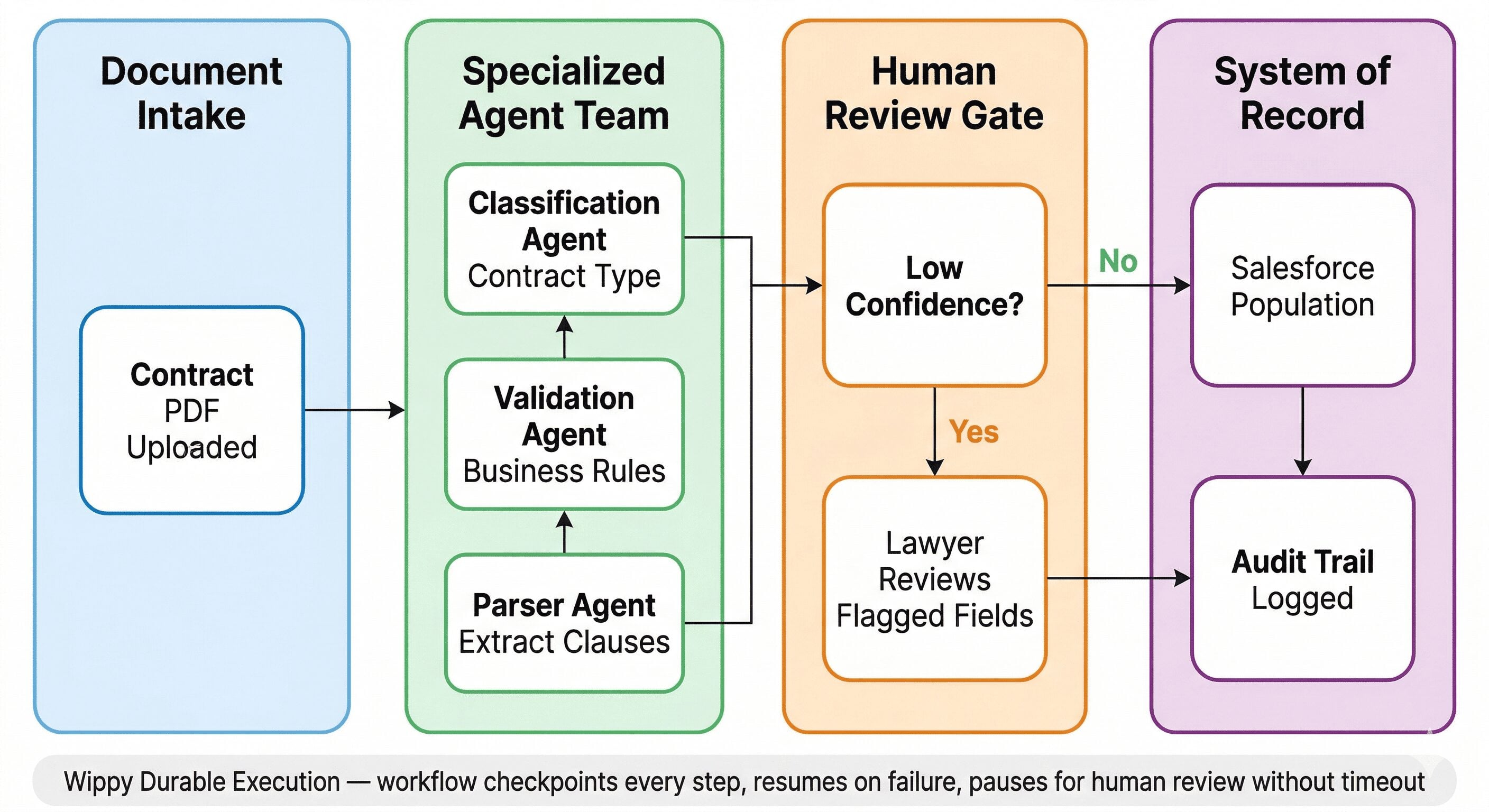

When we built Project Fortress, a legal deal management system with over 100 specialized agents extracting contract data and populating Salesforce, we learned quickly that the hard part is not getting an LLM to parse a clause. The hard part is what happens when the Salesforce API rate-limits you on document 437 out of 1,000. Or when a 120-page contract takes four hours to process and your server recycles halfway through. Or when a lawyer needs to review a flagged extraction before the workflow continues, and that review might not happen until Tuesday.

None of those are LLM problems. They’re infrastructure problems. And they’re the ones that determine whether your system ships or stays on a staging server.

Here is what we needed that no agent framework gave us out of the box.

Durable execution. Real workflows fail mid-flight. APIs time out, services go down, rate limits kick in. You need the system to checkpoint every step and resume from the last successful one rather than restart from zero. We built Wippy on top of Temporal’s orchestration patterns for this reason. When Fortress crashes on document 437, it picks up at 437 when it comes back, with full state intact. This is not retry logic bolted onto an agent; it’s baked into the runtime architecture. Our team authored the official Temporal PHP SDK, so we understand these patterns at a deep level.
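To make the checkpoint-and-resume idea concrete, here is a minimal sketch in plain Python. It is not Wippy’s or Temporal’s API; `process_batch`, `RateLimited`, the JSON checkpoint file, and the `demo` harness are all hypothetical names chosen for illustration, and a real runtime persists far more than a single index.

```python
import json
import os
import tempfile


class RateLimited(Exception):
    """Hypothetical error standing in for a downstream API refusing a call."""


def process_batch(documents, handler, checkpoint_path):
    """Process documents in order, persisting progress after every success.

    Returns the number of documents handled during this invocation.
    """
    # Resume from the saved position if an earlier run left a checkpoint.
    start = 0
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            start = json.load(f)["next_index"]

    for i in range(start, len(documents)):
        handler(documents[i])  # may raise; the checkpoint is then left untouched
        # Write to a temp file, then rename, so a crash mid-write
        # cannot leave a half-written checkpoint behind.
        tmp = checkpoint_path + ".tmp"
        with open(tmp, "w") as f:
            json.dump({"next_index": i + 1}, f)
        os.replace(tmp, checkpoint_path)
    return len(documents) - start


def demo():
    """Simulate a failure on document 437 of 1,000, then a restart."""
    docs = [f"doc-{n}" for n in range(1, 1001)]
    processed = []
    state = {"fail_once": True}

    def handler(doc):
        if doc == "doc-437" and state["fail_once"]:
            state["fail_once"] = False
            raise RateLimited(doc)
        processed.append(doc)

    path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")
    try:
        process_batch(docs, handler, path)
    except RateLimited:
        pass  # simulated crash: the process would exit here
    resumed = process_batch(docs, handler, path)  # the "restart"
    return processed, resumed
```

In this sketch the second call starts at document 437 and never repeats the first 436. Note the limitation: a crash after the handler succeeds but before the checkpoint is written would replay that one document, so handlers should be idempotent.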

Multi-agent coordination that persists. Most agent frameworks handle multi-agent setups as synchronous function calls within a single execution context. That works if everything finishes in seconds. But when Agent A extracts contract data, Agent B validates it against business rules, and Agent C waits for a human to approve before writing to Salesforce, and that approval might come three days later, you need a different execution model entirely. Wippy treats each agent as an isolated actor with its own lifecycle, coordinated through a persistent workflow that can pause for days without losing state or consuming compute resources. We explored this coordination model in depth in our piece on multi-agent frameworks for intelligent automation.
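One way to picture a workflow that pauses for days without holding a process open is a persisted state machine. The sketch below is an assumption-laden illustration, not how Wippy implements it: `WorkflowState`, `advance`, the step names, and the dict used as a stand-in for a database are all hypothetical, and the three agents are reduced to placeholder lines.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class WorkflowState:
    workflow_id: str
    # extract -> validate -> await_approval -> write -> done (or rejected)
    step: str = "extract"
    data: dict = field(default_factory=dict)


def save(store, state):
    """Persist state as JSON; `store` is a dict standing in for a database."""
    store[state.workflow_id] = json.dumps(asdict(state))


def load(store, workflow_id):
    return WorkflowState(**json.loads(store[workflow_id]))


def advance(store, workflow_id, approval=None):
    """Run the workflow as far as it can go, then return its status.

    While waiting for approval nothing stays running: the state sits in
    the store until someone calls advance() again with a decision.
    """
    state = load(store, workflow_id)
    while True:
        if state.step == "extract":
            state.data["parties"] = ["Acme", "Globex"]  # placeholder for Agent A
            state.step = "validate"
        elif state.step == "validate":
            # Placeholder for Agent B's business-rule check.
            state.data["valid"] = len(state.data["parties"]) == 2
            state.step = "await_approval"
        elif state.step == "await_approval":
            if approval is None:
                save(store, state)
                return "waiting"
            state.data["approved"] = approval
            state.step = "write" if approval else "rejected"
        elif state.step == "write":
            state.data["written"] = True  # placeholder for Agent C
            state.step = "done"
        else:  # terminal: "done" or "rejected"
            save(store, state)
            return state.step
        save(store, state)  # persist after every completed step
```

A caller would create the workflow with `save(store, WorkflowState("wf-1"))`, call `advance(store, "wf-1")` to run up to the approval gate, and call `advance(store, "wf-1", approval=True)` whenever the reviewer responds, whether that is three minutes or three days later.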

Tenant isolation. If you’re building agentic systems for multiple clients (or operating as a SaaS), you cannot have Client A’s data anywhere near Client B’s execution context. This is not just an access control question. It’s an architectural one. Wippy was designed multi-tenant from the start, with isolated execution contexts, separate credential stores, and audit trails that let you prove to a compliance team exactly what data an agent touched and why. The client owns their data, the IP, the agents, and the outputs. The model is disposable fuel.
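As a rough illustration of what isolated contexts, separate credential stores, and per-tenant audit trails can look like at the smallest possible scale, consider the sketch below. Every name in it (`TenantContext`, `TenantRegistry`, `run_agent`, `TenantAccessError`) is hypothetical, and in-process objects are only a teaching device: real isolation also needs process, network, and storage boundaries.

```python
from dataclasses import dataclass, field


class TenantAccessError(Exception):
    """Raised when an agent is pointed at another tenant's resource."""


@dataclass
class TenantContext:
    tenant_id: str
    credentials: dict  # this tenant's secrets only
    audit_log: list = field(default_factory=list)

    def record(self, agent, action, resource):
        """Append an audit entry describing what an agent touched."""
        self.audit_log.append(
            {"tenant": self.tenant_id, "agent": agent,
             "action": action, "resource": resource}
        )


class TenantRegistry:
    """Hands out exactly one context per tenant; nothing is shared."""

    def __init__(self):
        self._contexts = {}

    def register(self, tenant_id, credentials):
        # Copy the credentials so callers cannot mutate them afterwards.
        self._contexts[tenant_id] = TenantContext(tenant_id, dict(credentials))

    def context_for(self, tenant_id):
        return self._contexts[tenant_id]


def run_agent(ctx, agent_name, resource_tenant_id, resource):
    """Run an agent inside one tenant's context, auditing every access."""
    if resource_tenant_id != ctx.tenant_id:
        ctx.record(agent_name, "denied", resource)
        raise TenantAccessError(f"{agent_name} may not read {resource}")
    ctx.record(agent_name, "read", resource)
    return f"{agent_name} processed {resource}"
```

The design point is that the agent never receives a global credential store or a shared log. It receives one tenant’s context, and both successful and denied accesses end up in that tenant’s audit trail.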

Where This Gets Concrete

Three systems we’ve deployed on Wippy, and can talk about publicly, make the tradeoffs between framework and runtime tangible.

Project Fortress involved 100+ specialized agents handling contract data extraction for a legal SaaS product. Each agent was trained on a specific extraction type (parties, dates, terms, clauses), and they coordinated through a supervisor workflow that managed sequencing, validation, and Salesforce population. The workflow for a single complex contract could span hours. Human-in-the-loop review was required for low-confidence extractions, meaning the system had to pause mid-workflow and wait for a lawyer’s input without timing out or losing context. The result was 80% automation of what had been entirely manual data entry, with a complete audit trail for every decision the system made. In a pure LangChain implementation, you would need to build the fault-tolerance layer, the pause-and-resume logic, the state persistence, and the multi-agent coordination infrastructure from scratch. That is not a weekend vibe coding project. That is months of platform engineering.

Our CPQ work for industrial distribution was a completely different challenge. We built a quoting agent for an industrial hose and fittings distributor through our work with Intelli.Build, using Wippy to orchestrate the configuration logic. The domain involved hundreds of SKUs, complex compatibility rules between components, regional pricing variations, and pressure ratings that change based on assembly configuration. The agent needed to pull from ERP data, apply engineering constraints, check inventory, and produce a compliant quote. The workflow involved multiple specialized agents: one for product lookup, one for compatibility validation, one for pricing, and one for document generation, each operating against different backend systems with different response times and failure modes. Temporal-backed orchestration meant that if the ERP timed out during a pricing call, the system retried that specific step without re-running the entire configuration. Quote turnaround time dropped by 90%.
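Retrying only the failed step, rather than the whole configuration, comes down to remembering which steps already succeeded. The sketch below shows that idea in plain Python under stated assumptions: `run_step`, `build_quote`, `FlakyErp`, the SKU, and the price are all invented for illustration, and a durable runtime would keep `results` in persistent storage, not in memory.

```python
import time


def run_step(results, name, fn, retries=3, base_delay=0.0,
             retry_on=(TimeoutError,)):
    """Run one named step, reusing its stored result if it already succeeded."""
    if name in results:
        return results[name]
    for attempt in range(1, retries + 1):
        try:
            results[name] = fn()
            return results[name]
        except retry_on:
            if attempt == retries:
                raise  # give up; earlier steps stay recorded in `results`
            # Exponential backoff: base_delay, then 2x, 4x, ...
            time.sleep(base_delay * 2 ** (attempt - 1))


class FlakyErp:
    """Hypothetical ERP client that times out a fixed number of times."""

    def __init__(self, failures):
        self.failures = failures
        self.calls = 0

    def price(self, sku):
        self.calls += 1
        if self.calls <= self.failures:
            raise TimeoutError("ERP timed out")
        return 12.5  # made-up unit price


def build_quote(results, erp, counters):
    """Four-step pipeline: lookup, compatibility, pricing, document."""
    def lookup():
        counters["lookup"] = counters.get("lookup", 0) + 1
        return {"sku": "HOSE-100", "qty": 4}  # made-up product

    product = run_step(results, "lookup", lookup)
    run_step(results, "compatibility", lambda: True)
    unit_price = run_step(results, "pricing", lambda: erp.price(product["sku"]))
    return run_step(
        results, "document",
        lambda: {"sku": product["sku"], "total": unit_price * product["qty"]},
    )
```

With an ERP stub that times out twice, the pricing step runs three times while the lookup step runs once. Calling `build_quote` again with the same `results` dict repeats nothing at all.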

The Santa Letters program was a time-bounded challenge: process 10,000+ handwritten letters from children, generate personalized AI responses, and coordinate physical printing and mailing, all within a three-week holiday window. Six agents in a pipeline handled intake, OCR of handwritten text, content moderation (with human review for flagged items), response generation in handwriting, print coordination, and status tracking. The system ran thousands of concurrent workflows with a hard deadline. A framework-level solution would have struggled with the concurrency, the multi-day processing pipeline, and the human-in-the-loop moderation step. Wippy’s fault-tolerant execution guaranteed that no letter got lost in the pipeline, even when the OCR vendor’s API had intermittent failures. You can see the full pattern across all three deployments in our multi-agent system case study.

The Runtime Is the Invisible Layer That Governs System Stability

Anton Titov, our CTO and the author of the official Temporal PHP SDK, puts it bluntly:

That framing captures the core architectural distinction. LangChain operates at the agent construction layer: how you define prompts, call tools, and manage memory. Wippy operates at the execution infrastructure layer: how agents run reliably across hours, days, or weeks in environments where things constantly break. They are complementary when used together, and many of our teams do exactly that, with LangChain for the logic and Wippy for the plumbing. Anton wrote about this approach to self-modifying AI agents on our blog, where the runtime enables the system to study and improve its own behavior over time.

Framework vs. Runtime: What Each Layer Handles

| Capability | LangChain (Framework) | Wippy (Runtime) |
| --- | --- | --- |
| Core job | Build agent logic, prompts, chains | Run agents reliably at scale |
| Failure handling | Application-level try/catch | Automatic checkpointing and resume from last step |
| Multi-agent | Synchronous within one process | Isolated actors with persistent coordination |
| Long-running workflows | Stateless by default | Durable across hours, days, weeks |
| Human-in-the-loop | Must build custom pause logic | Native pause and resume without timeout |
| Tenant isolation | Not addressed | Multi-tenant from architecture layer |
| Audit trail | Logging as add-on | Built-in decision-level observability |

When LangChain Is the Right Call (And When It Is Not)

To be clear, if you are building a single-shot agent, a RAG pipeline, or a prototype to validate whether an AI approach works for your use case, LangChain is a perfectly good choice. If your agent runs, returns a result, and you are done, there is no durability concern. If you are not coordinating multiple agents across long-running processes, you do not need an orchestration runtime.

The decision point is straightforward. If your agents need to run for hours or days, if they coordinate across multiple systems with different failure modes, if humans need to intervene mid-workflow, if you need tenant isolation and audit trails, or if you are running hundreds of concurrent agent workflows, you have outgrown what a framework alone can give you. You need a runtime underneath it. An AI Readiness Audit can help you figure out which side of that line you’re on.

The Boring Infrastructure Is Where Production Lives

The AI agent ecosystem has an obsession with the model layer and the framework layer. Which LLM is fastest. Which framework has the most integrations. Those matter, but in production, the thing that determines success or failure is the boring infrastructure underneath: does the system recover from failures? Can you audit what happened? Does it scale without manual intervention? Can you prove to a client’s legal team that their data is isolated?

Nobody is writing blog posts about retry logic and tenant isolation. But those are the questions your stakeholders will ask before signing off on putting an AI system into a production workflow. We’ve shipped enough of these – across legal, industrial distribution, pre-sales automation, and high-volume processing – to know that the runtime layer is where the real separation happens between projects that ship and projects that stay in staging.

Not Sure Whether Your Agent System Is Production-Ready?

Spiral Scout’s AI Readiness Audit evaluates your current agent architecture against production failure modes – durability gaps, orchestration constraints, security boundaries, and scaling bottlenecks – and maps a concrete path from where you are to a system that ships. Whether you’re building with LangChain, a custom stack, or exploring Wippy, we’ll tell you what’s ready and what’s not.