Most teams building AI agents start with the same general idea: “automate everything we can, freeze or reduce headcount, and transform the business.” Then they build a chatbot that answers FAQs and call it a win. The gap between that demo and something a real operations team would trust with actual revenue is where most projects die. They die not from a lack of ambition, but from a lack of specificity about what agents are actually good at today, and about whether the team has done the data preparation needed to make agents work at scale.

After building agent systems across manufacturing, automotive, legal, finance, and industrial distribution, a clear pattern has emerged. The companies getting real value from AI agents are not chasing general intelligence. They are solving six specific, ugly, high-friction problems that have resisted traditional software for years. These patterns repeat across industries, and they share a common trait: the work is too messy, too contextual, or too dependent on human judgment for conventional automation, but too repetitive and expensive to keep doing by hand.

What you will find in this article:

- Why data normalization, not analysis, is the highest-ROI agent pattern in production today

- How “boring” integration work became the fastest path to agent adoption

- The architectural pattern (supervisor/worker decomposition) that makes multi-step agent workflows reliable

- Why the “walled garden” constraint in legal and compliance is actually a product advantage

- Where tribal knowledge capture fits, and why it changes the economics of professional services

The Real Bottleneck Is Normalization, Not Intelligence

Every company that showed up to a recent engagement with an “AI strategy” had the same underlying problem: their data was a disaster. Not a little messy, but structurally broken. Suppliers sending Excel files with different column headers. PDFs with inconsistent formatting. Legacy databases where the same part number appears three different ways depending on who entered it and when.

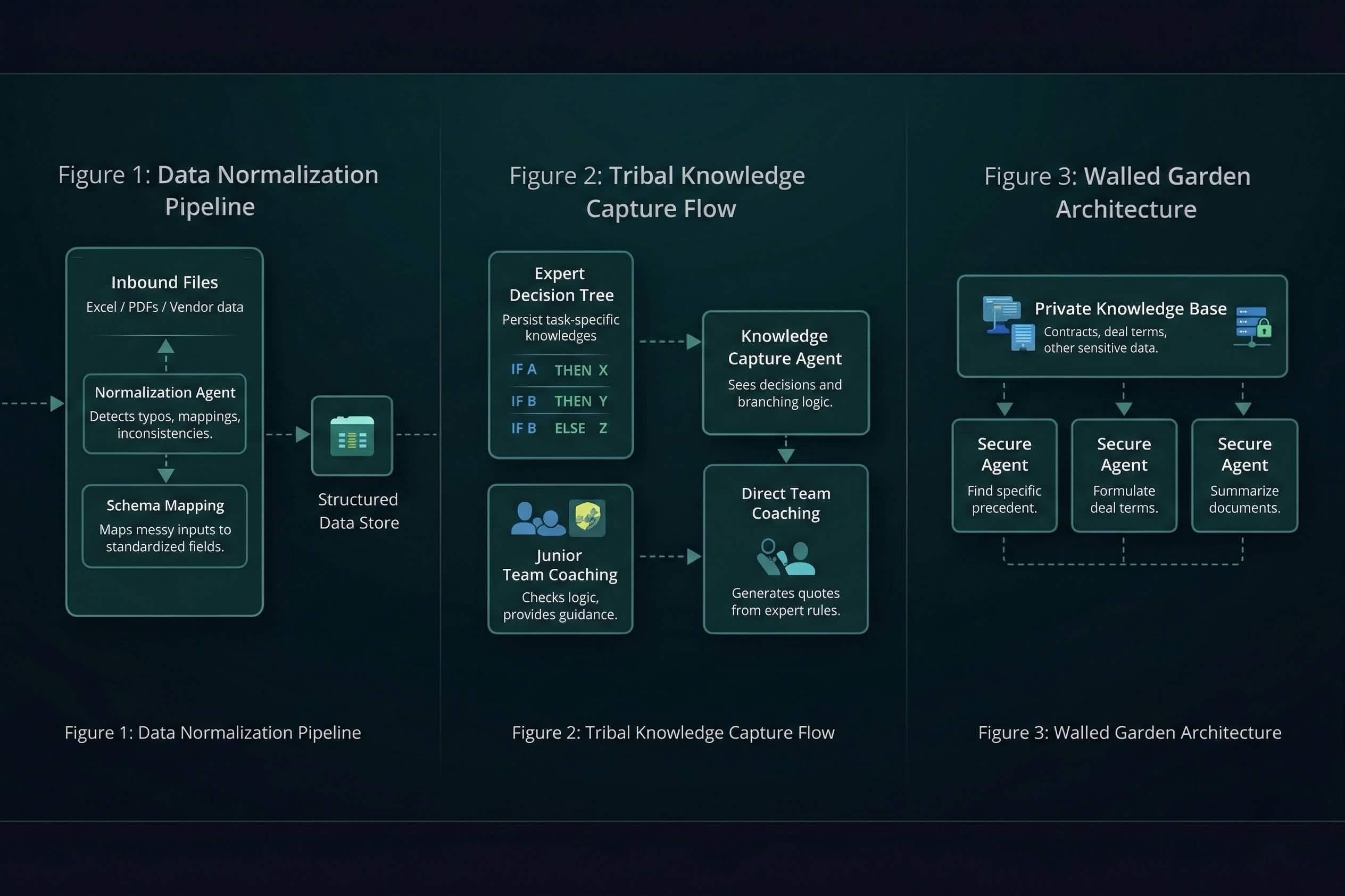

The conventional instinct is to clean the data first, then apply AI. In practice, that sequencing is backwards. The cleaning is the agent’s job. Across manufacturing and automotive engagements, the pattern that delivers the fastest ROI is an agent trained to read messy inbound files, identify anomalies like typos or duplicate SKUs, and map them to a standardized schema. A process that used to consume six weeks of manual labor now runs in minutes.

The important framing here is that the agent is not doing analysis. It is doing normalization. It turns unstructured garbage into a structured source of truth that every downstream system can actually consume. This is the “last mile” of data ingestion, and it is the place where most data pipeline projects stall because the variation in real-world inputs is too high for rigid ETL rules but too low-value for a senior engineer to sit and babysit.

For teams evaluating where to deploy their first AI agent, this is the pattern with the shortest time to measurable value. You do not need a sophisticated reasoning model. You need a specialized agent with a well-defined schema target and a tolerance for messy inputs.
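To make the "well-defined schema target" concrete, here is a minimal Python sketch of the deterministic core of such a normalizer. The schema, the header aliases, and the sample column names are all hypothetical, and a production agent would likely put a model in front of this to propose mappings for headers the fuzzy matcher cannot resolve.

```python
import re
from difflib import get_close_matches
from typing import Optional

# Hypothetical target schema: canonical field -> header variants seen so far.
TARGET_SCHEMA = {
    "sku": ["sku", "part number", "part no", "item id"],
    "description": ["description", "desc", "item name"],
    "unit_price": ["unit price", "price", "cost each"],
}
_ALIASES = {alias: field for field, names in TARGET_SCHEMA.items() for alias in names}


def _clean(text: str) -> str:
    """Lowercase and collapse punctuation/whitespace so variants compare equal."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def map_header(raw: str, cutoff: float = 0.8) -> Optional[str]:
    """Return the canonical field for a raw header, or None if nothing is close."""
    match = get_close_matches(_clean(raw), list(_ALIASES), n=1, cutoff=cutoff)
    return _ALIASES[match[0]] if match else None


def normalize_sku(raw: str) -> str:
    """Canonicalize a part number: uppercase and strip separators."""
    return re.sub(r"[^A-Z0-9]", "", raw.upper())


def normalize_rows(rows: list[dict[str, str]]) -> tuple[list[dict[str, str]], list[str]]:
    """Map each row onto the schema; report unmapped columns and duplicate SKUs."""
    seen: set[str] = set()
    clean_rows: list[dict[str, str]] = []
    anomalies: list[str] = []
    for i, row in enumerate(rows):
        mapped: dict[str, str] = {}
        for header, value in row.items():
            field = map_header(header)
            if field is None:
                anomalies.append(f"row {i}: unmapped column {header!r}")
                continue
            mapped[field] = value.strip()
        sku = normalize_sku(mapped.get("sku", ""))
        if not sku:
            anomalies.append(f"row {i}: missing SKU")
            continue
        if sku in seen:
            anomalies.append(f"row {i}: duplicate SKU {sku}")
            continue
        seen.add(sku)
        mapped["sku"] = sku
        clean_rows.append(mapped)
    return clean_rows, anomalies
```

The anomaly list matters as much as the clean rows: it is what a human reviews, and it is the evidence that the agent flagged a problem instead of silently guessing.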

Integration Work Nobody Wants to Do

There is a category of engineering work that is essential, constant, and, to quote one of our engineering leads, “mind-bogglingly boring.” API integrations. Connecting a CRM to an ERP. Wiring up JIRA to a deployment pipeline. Reading vendor documentation, writing boilerplate connector code, and then maintaining it when the vendor ships a breaking change.

This work burns out mid-level engineers, and it is the kind of task where an agent provides disproportionate leverage. That is not because the agent writes better code (it doesn’t always), but because the agent does not lose focus after the third hour of reading API documentation. The pattern we ship repeatedly is an agent that ingests API docs, generates connector code against a standardized interface, and produces integration scaffolding that a human engineer reviews and deploys.
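One way to picture the "standardized interface" is as a small contract that every generated connector has to satisfy, so that human review can focus on the vendor-specific parts. The sketch below is an assumption about what such a contract could look like, not a description of any particular product; `Connector`, `InMemoryConnector`, and `sync` are hypothetical names.

```python
from typing import Iterable, Optional, Protocol

Record = dict[str, str]


class Connector(Protocol):
    """Hypothetical contract that every generated connector must implement."""

    def fetch(self) -> Iterable[Record]: ...

    def push(self, records: Iterable[Record]) -> int: ...


class InMemoryConnector:
    """Stand-in for a generated CRM or ERP connector; keeps records in a list."""

    def __init__(self, records: Optional[list[Record]] = None) -> None:
        self.records: list[Record] = list(records or [])

    def fetch(self) -> Iterable[Record]:
        return list(self.records)

    def push(self, records: Iterable[Record]) -> int:
        batch = list(records)
        self.records.extend(batch)
        return len(batch)


def sync(source: Connector, target: Connector, field_map: dict[str, str]) -> int:
    """Copy records from source to target, renaming fields per field_map."""
    renamed = [
        {field_map.get(key, key): value for key, value in record.items()}
        for record in source.fetch()
    ]
    return target.push(renamed)
```

Because `sync` only depends on the contract, a reviewer can test a newly generated connector against an in-memory stand-in before it ever touches a live system.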

The key insight is positioning. The agent is not replacing core engineering logic. It is handling the glue code that connects systems, which frees human engineers to work on product architecture and business logic, i.e., the work that actually differentiates the product. This distinction matters operationally, and it matters politically inside engineering teams. When you frame agents as handling the drudge work, adoption resistance drops significantly.

Tribal Knowledge Is the Asset Nobody Accounts For

In industrial sales, a senior rep with 20 years of experience knows which fitting goes with which hose assembly, which customer gets which pricing tier, and which configurations will fail under specific operating conditions. That knowledge exists nowhere except in their head. When they retire, or get poached, the company loses an asset it never put on the balance sheet.

This is the pattern that changes the economics of professional services and distribution businesses. We call it tribal knowledge capture, though the more accurate description is building a persistent digital system that encodes expert decision-making into repeatable, auditable logic.

The implementation follows a consistent arc. Record the expert’s workflow and decision tree. Identify the branching logic, i.e., the “if this, then that” chains that they run intuitively but have never documented. Train a specialized agent on that logic. Deploy the agent as a coaching layer for junior staff or as a direct quoting engine for customers.
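The "repeatable, auditable logic" part of that arc can be sketched as named rules that record which of them fired. Everything specific here is invented for illustration: the pressure threshold, the fitting names, and the discount are placeholders, not real product guidance.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Quote:
    items: list[str] = field(default_factory=list)
    discount_pct: float = 0.0
    audit: list[str] = field(default_factory=list)  # names of rules that fired


@dataclass
class Rule:
    name: str                          # plain-language statement of the expert's rule
    applies: Callable[[dict], bool]    # the "if this" part, evaluated on the request
    action: Callable[[Quote], None]    # the "then that" part, applied to the quote


def _set_discount(pct: float) -> Callable[[Quote], None]:
    def apply(quote: Quote) -> None:
        quote.discount_pct = pct
    return apply


# Hypothetical rules captured from an expert interview.
RULES = [
    Rule("high-pressure line needs a steel fitting",
         lambda req: req["pressure_psi"] > 3000,
         lambda quote: quote.items.append("steel crimp fitting")),
    Rule("standard-pressure line uses a brass fitting",
         lambda req: req["pressure_psi"] <= 3000,
         lambda quote: quote.items.append("brass fitting")),
    Rule("gold-tier customers get 10% off",
         lambda req: req.get("customer_tier") == "gold",
         _set_discount(10.0)),
]


def build_quote(request: dict, rules: list[Rule]) -> Quote:
    """Apply every matching rule in order and record its name in the audit trail."""
    quote = Quote()
    for rule in rules:
        if rule.applies(request):
            rule.action(quote)
            quote.audit.append(rule.name)
    return quote
```

The audit list is what lets a junior rep, or the expert who supplied the rules, see why a quote came out the way it did.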

In one engagement with an industrial distributor, the result was a quoting agent that lets a first-year sales rep produce quotes with the same accuracy and product knowledge as a 20-year veteran. The business impact is not subtle. It is the difference between a two-day quote turnaround and a 90-second one. The VP of Sales can calculate the revenue impact on a napkin.

However, the deeper shift is structural. The business model moves from renting human hours to owning a persistent digital asset. The agent does not quit, does not need onboarding, and does not walk out the door with institutional knowledge. For firms where expertise concentration is both the competitive advantage and the biggest operational risk, this pattern is existential.

Why Decomposition Beats “Universal Agents”

The architectural mistake teams make most often is building a single agent with too many responsibilities. Give one model access to 15 tools, a broad instruction set, and a complex multi-step workflow, and you get hallucinations, dropped steps, and outputs that look plausible but fail on inspection. Universal agents are the demo that never ships.

The pattern that actually holds up in production is decomposition: a supervisor agent that breaks a request into discrete tasks, and specialized worker agents that each handle one narrow responsibility. One agent scrapes the web. Another formats the data. A third updates the CRM. A fourth generates the summary. The supervisor validates each output before passing it to the next stage.

This works because reliability comes from specialization. It is significantly easier to validate whether an agent correctly found a PDF than whether it correctly synthesized a complex judgment call across multiple data sources. Each worker agent has a constrained scope, a defined input/output contract, and a failure mode you can test independently.
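A minimal sketch of that input/output contract, assuming each worker is just a function paired with a validator. The three workers below are trivial stand-ins for model-backed agents, and the names `Worker`, `StageFailed`, and `supervise` are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Worker:
    name: str
    run: Callable[[Any], Any]        # the single narrow task
    validate: Callable[[Any], bool]  # the contract the output must meet


class StageFailed(Exception):
    """Raised by the supervisor when a worker's output fails validation."""


def supervise(workers: list[Worker], payload: Any) -> Any:
    """Run workers in order, validating each output before handing it on."""
    for worker in workers:
        output = worker.run(payload)
        if not worker.validate(output):
            raise StageFailed(f"{worker.name} produced invalid output: {output!r}")
        payload = output
    return payload


# Stand-in workers; in a real system each would wrap a model or tool call.
def parse(text: str) -> dict:
    parts = [part.strip() for part in text.split(",")]
    return dict(zip(("customer", "units"), parts))


def convert(record: dict) -> dict:
    return {**record, "units": int(record["units"])}


def summarize(record: dict) -> str:
    return f"{record['customer']} ordered {record['units']} units"


PIPELINE = [
    Worker("parse", parse, lambda out: set(out) == {"customer", "units"}),
    Worker("convert", convert, lambda out: out["units"] > 0),
    Worker("summarize", summarize, lambda out: bool(out)),
]
```

When validation fails, the exception names the stage, so the error is caught between steps and attributed to one worker instead of surfacing as a plausible but wrong final answer.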

The orchestration layer, the part that manages state, handles retries, routes between workers, and maintains the workflow lifecycle, is where most teams underinvest. They build clever agents and then wire them together with brittle scripts. When a step fails at 2 AM, nobody knows where the workflow broke or how to recover it. This is the infrastructure problem that Wippy was built to solve: durable execution, state management, and lifecycle visibility for multi-agent workflows that need to run unsupervised.
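To illustrate what the orchestration layer is responsible for, here is a deliberately small sketch of retries plus checkpointed state. It is not Wippy's API or a description of how Wippy works internally; `run_workflow` and the shape of the `state` dictionary are assumptions made for this example, and a real system would persist that state to durable storage instead of keeping it in memory.

```python
import time
from typing import Any, Callable

Step = tuple[str, Callable[[Any], Any]]


def run_workflow(steps: list[Step], payload: Any, state: dict,
                 max_attempts: int = 3, backoff_s: float = 0.0) -> Any:
    """Run steps in order, checkpointing each result so a rerun can resume.

    `state` needs the keys "done" (step name -> output), "log" (list of
    failure messages), and "failed_at" (name of the failing step, or None).
    """
    for name, fn in steps:
        if name in state["done"]:
            # Already completed in an earlier run: reuse the checkpoint.
            payload = state["done"][name]
            continue
        for attempt in range(1, max_attempts + 1):
            try:
                payload = fn(payload)
                break
            except Exception as exc:
                state["log"].append(f"{name} attempt {attempt} failed: {exc}")
                if attempt == max_attempts:
                    state["failed_at"] = name  # record where the workflow broke
                    raise
                time.sleep(backoff_s * attempt)  # simple linear backoff
        state["done"][name] = payload
    state["failed_at"] = None
    return payload
```

With even this much in place, the 2 AM question has an answer: `state["failed_at"]` says where the workflow stopped, `state["log"]` says why, and rerunning with the same state skips the steps that already succeeded.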

| Pattern | Scope | Reliability Profile | Human Review Point |
| --- | --- | --- | --- |
| Single monolithic agent | Broad: multiple tools, open-ended reasoning | Low: hallucination risk scales with tool count | End of workflow (often too late) |
| Supervisor/worker decomposition | Narrow per worker: one task, one validation | High: each step independently testable | Between stages (catch errors early) |
| Human-in-the-loop hybrid | Mixed: the agent does volume, the human does judgment | Highest, but slower throughput | At decision points defined by business rules |

The “Walled Garden” Is a Product Feature, Not a Constraint

In private equity, law, and compliance, the data sensitivity constraint that most teams treat as a blocker is actually what makes the agent pattern defensible. Firms processing terabytes of deal documents, thousand-page contracts, and decades of precedent cannot send that data to a public model. The security requirement is non-negotiable.

Building a private knowledge base, a walled garden where agents query specific deal terms, compare indemnity caps across transactions, or surface relevant precedents from internal archives, turns the security constraint into a competitive moat. The firm that builds this system can retrieve in seconds what used to require a junior associate working for days. The firm that doesn’t build it continues to pay $400/hour for human document review at a pace that cannot scale.

The failure mode to watch for here is scope creep. The temptation is to build a general-purpose legal AI. The implementations that ship and retain trust start narrow (one deal type, one document class, one specific query pattern) and expand only after the first use case is validated with real users and real data. “Can you find the indemnity cap on the 2020 Meridian deal?” is a better starting point than “analyze all our deals.”
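One lightweight way to enforce a narrow launch scope is an explicit allow-list that every query is checked against before retrieval runs. The sketch below assumes queries have already been parsed into structured form; the deal type, document class, and field names are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Query:
    deal_type: str
    document_class: str
    field: str


# Hypothetical launch scope: one deal type, one document class, one query pattern.
ALLOWED = {
    "deal_type": {"acquisition"},
    "document_class": {"purchase_agreement"},
    "field": {"indemnity_cap"},
}


def out_of_scope(query: Query) -> list[str]:
    """Return the query attributes that fall outside the validated scope."""
    return [name for name, allowed in ALLOWED.items()
            if getattr(query, name) not in allowed]
```

Expanding the product then becomes a reviewable change to `ALLOWED`, made only after the existing scope has been validated, instead of a gradual drift in what the agent is willing to answer.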

Legacy Code Is Not Technical Debt. It Is Unstructured Knowledge

The sixth pattern is less discussed but equally impactful. Companies sitting on aging codebases, like Ruby on Rails applications from 2012, .NET systems from 2008, or even COBOL, face a specific problem: the original developers are gone, the documentation is incomplete or missing, and the code contains business logic that nobody fully understands anymore.

An agent trained to ingest the codebase, build a knowledge graph of dependencies and business rules, and then assist with targeted refactoring turns what would be a six-month archaeological dig into a structured modernization project. The agent reads millions of lines without fatigue and without the institutional bias that makes human developers afraid to touch code they did not write. The output is not a full rewrite. It is comprehension, mapped to a refactoring plan that engineers can execute with confidence.
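The dependency half of that knowledge graph can be approximated with static analysis before any model is involved. The sketch below uses Python's standard `ast` module on Python sources purely for illustration; a Rails, .NET, or COBOL codebase would need a parser for that language, and the module names in the usage example are made up. Relative imports are ignored for brevity.

```python
import ast


def import_graph(sources: dict[str, str]) -> dict[str, set[str]]:
    """Map each module name to the in-project modules it imports.

    `sources` maps a module name to its source code. Imports of anything
    outside `sources` (standard library, third-party) are left out.
    """
    graph: dict[str, set[str]] = {module: set() for module in sources}
    for module, code in sources.items():
        for node in ast.walk(ast.parse(code)):
            if isinstance(node, ast.Import):
                names = [alias.name for alias in node.names]
            elif isinstance(node, ast.ImportFrom) and node.module:
                names = [node.module]
            else:
                continue
            for name in names:
                root = name.split(".")[0]
                if root in sources and root != module:
                    graph[module].add(root)
    return graph


def dependents(graph: dict[str, set[str]], target: str) -> set[str]:
    """Modules that import `target` directly and may be affected by changing it."""
    return {module for module, deps in graph.items() if target in deps}
```

For example, given `billing` importing `pricing` and `tax`, and `pricing` importing `tax`, `dependents(graph, "tax")` returns both `billing` and `pricing`, which is the kind of blast-radius answer a refactoring plan starts from.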

The Systems That Survive Are the Ones You Own

Every pattern described here shares a structural property: the value accumulates over time only if the system is durable, auditable, and owned by the business that operates it. An agent that runs on a vendor’s infrastructure with the vendor’s model and the vendor’s data pipeline is a subscription. An agent that runs on your infrastructure, encodes your expertise, and operates within your governance framework is IP.

The difference matters when the question shifts from “does this work?” to “can we depend on this?” Production agents need workflow orchestration that survives failures, state management that enables auditability, and an architecture that the business can operate independently of whoever built it. That is the standard we build to, because a demo that impresses a room is worth nothing if it cannot run unsupervised on a Tuesday at 3 AM.

Tired of Agent Projects That Stall After the POC?

Most teams get stuck between a working prototype and a system their operations team will actually trust. We build agent systems that run in production with durable orchestration, human-in-the-loop controls, and infrastructure you own.

Spiral Scout builds production AI systems for companies navigating complex workflows. Wippy is the runtime that makes those systems durable.