Every week we talk to companies that want AI agents. The conversation usually follows the same pattern: someone on the leadership team saw a demo, got excited, and now there is a mandate to “implement AI” across three or four departments by next quarter. The team spins up a proof of concept, it works beautifully on test data, and then the project quietly dies somewhere between the security review and the realization that the product catalog lives in 14 different spreadsheets. After deploying dozens of agent systems across legal, industrial distribution, insurance, and professional services, we have found the pattern of what kills these projects consistent enough to codify into a repeatable methodology.

Key Takeaways

- Agent projects fail for organizational reasons, not technical ones. Data fragmentation, over-scoped mandates, and skipped stakeholder alignment kill more pilots than model selection ever will.

- The supervisor-worker architecture is the only pattern that reliably ships complex, multi-step workflows. Single monolithic agents become unreliable the moment you add real-world complexity.

- Prototype in Figma before you write agent code. This is the single highest-leverage risk reduction step and it costs almost nothing.

- Tribal knowledge extraction is the real deliverable. When you encode a senior expert’s decision-making into an agent, you create a compounding asset the business owns permanently.

- Self-modifying runtimes that generate their own integrations will replace the pre-built connector model within two years. The maintenance cost difference is an order of magnitude.

The professional services displacement is structural, not cyclical

The shift happening in professional services right now is not a temporary disruption. AI agents are absorbing the tasks that companies historically staffed with junior engineers, analysts, and coordinators: data scrubbing, document review, QA cycles, report generation, and compliance checks. This is work that is repetitive, structured, and high-volume. Companies that sell human hours for this kind of output are already losing deals to competitors who deliver the same results through agent systems at a fraction of the cost and turnaround time.

The distinction that matters here is between a chatbot and what we call a digital employee. A chatbot responds to prompts in isolation. A digital employee persists inside your infrastructure, retains institutional knowledge across sessions, operates within defined permissions with full audit trails, and gets more effective as your data improves. Unlike a human employee, it does not leave the company or take institutional knowledge with it to a competitor. The architecture underneath it determines whether it is a toy or a production system.

Pro Tip: When scoping a digital employee, define its permissions matrix before you define its capabilities. In practice, the permissions conversation surfaces 80% of the edge cases that would otherwise blow up during production. We build a RACI-style document for every agent that maps which systems it can read, which it can write to, and which actions require human approval. This single artifact prevents more project failures than any amount of prompt engineering.
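A minimal Python sketch of that artifact follows, assuming the matrix is stored as data that the runtime consults before every tool call. The `Access` levels, the `PermissionsMatrix` class, and its `check` method are hypothetical names chosen for illustration, not part of any particular agent framework.

```python
from dataclasses import dataclass
from enum import Enum


class Access(Enum):
    NONE = "none"
    READ = "read"
    WRITE = "write"


@dataclass(frozen=True)
class PermissionsMatrix:
    """Hypothetical per-agent permissions artifact: systems, access, approvals."""
    access: dict[str, Access]  # system name -> Access; unlisted systems get NONE
    approval_required: frozenset[tuple[str, str]] = frozenset()  # (system, action) pairs

    def check(self, system: str, action: str, writes: bool) -> str:
        """Return 'allow', 'needs_approval', or 'deny' for a proposed action."""
        level = self.access.get(system, Access.NONE)
        if level is Access.NONE:
            return "deny"            # the agent has no business touching this system
        if writes and level is not Access.WRITE:
            return "deny"            # read-only systems never accept writes
        if (system, action) in self.approval_required:
            return "needs_approval"  # allowed, but only after human sign-off
        return "allow"


# Example: a quoting agent that reads the ERP and writes quotes to the CRM.
quoting_agent = PermissionsMatrix(
    access={"erp": Access.READ, "crm": Access.WRITE},
    approval_required=frozenset({("crm", "send_quote")}),
)
print(quoting_agent.check("erp", "read_price_list", writes=False))  # allow
print(quoting_agent.check("erp", "update_price", writes=True))      # deny
print(quoting_agent.check("crm", "send_quote", writes=True))        # needs_approval
print(quoting_agent.check("email", "send", writes=True))            # deny
```

Denying unlisted systems by default is a deliberate choice in this sketch: a new system has to be added to the matrix before the agent can touch it, which forces the permissions conversation to happen up front.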

Why single-agent architectures break under production load

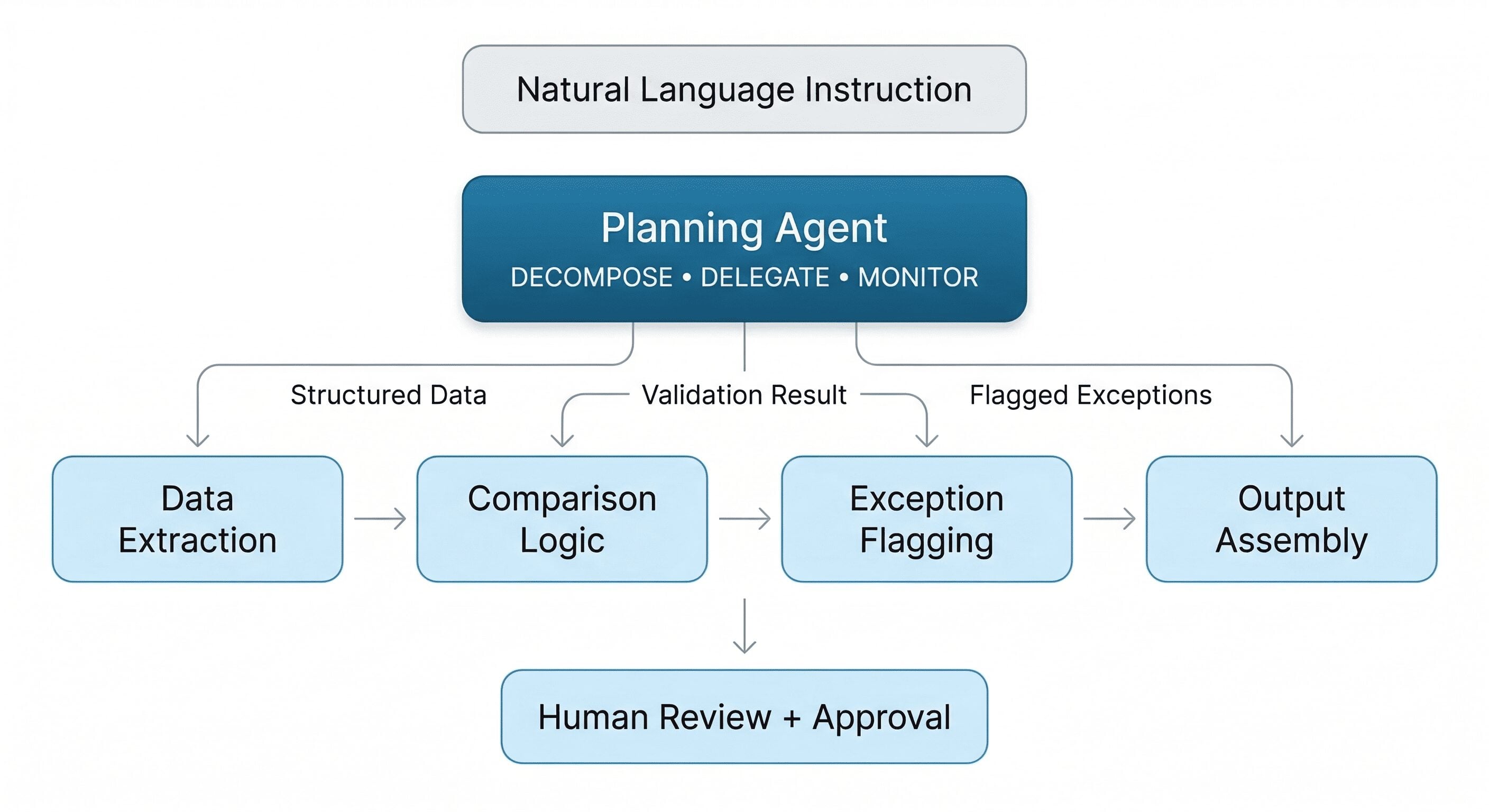

The architecture that makes digital employees viable in production is a supervisor-worker model. A planning agent receives a natural language instruction, decomposes it into discrete subtasks, and delegates each one to a specialized worker agent. One worker handles data extraction. Another runs the comparison logic. A third flags exceptions for human review. A fourth assembles the output. This decomposition is what prevents the failure mode where a single monolithic agent tries to handle an entire complex workflow and becomes unreliable at all of it.
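The decomposition can be sketched in a few lines of Python. Here `supervisor_plan` is a hard-coded stand-in for the planning agent's LLM call, and the four workers are plain functions standing in for specialized agents; the invoice-versus-PO task and every name below are hypothetical.

```python
from typing import Callable

Worker = Callable[[dict], dict]


def extract(ctx: dict) -> dict:
    """Worker 1: parse 'SKU:qty' pairs out of a raw document string."""
    items = {}
    for pair in ctx["document"].split(","):
        sku, qty = pair.split(":")
        items[sku.strip()] = int(qty)
    return {"items": items}


def compare(ctx: dict) -> dict:
    """Worker 2: diff extracted quantities against the purchase order."""
    po = ctx["po"]
    return {"diffs": {sku: qty - po.get(sku, 0)
                      for sku, qty in ctx["items"].items() if qty != po.get(sku, 0)}}


def flag(ctx: dict) -> dict:
    """Worker 3: anything that does not match is queued for human review."""
    return {"needs_review": sorted(ctx["diffs"])}


def assemble(ctx: dict) -> dict:
    """Worker 4: build the final output."""
    status = "review" if ctx["needs_review"] else "ok"
    return {"report": {"status": status, "flagged": ctx["needs_review"]}}


WORKERS: dict[str, Worker] = {
    "extract": extract, "compare": compare, "flag": flag, "assemble": assemble,
}


def supervisor_plan(instruction: str) -> list[str]:
    """Stand-in for the planning agent's single LLM call that decomposes the task."""
    return ["extract", "compare", "flag", "assemble"]


def run(instruction: str, ctx: dict) -> dict:
    for step in supervisor_plan(instruction):
        ctx = {**ctx, **WORKERS[step](ctx)}  # merge each worker's result into shared context
    return ctx["report"]


report = run("Reconcile this invoice against its PO",
             {"document": "A-1:5, B-2:3", "po": {"A-1": 5, "B-2": 2}})
print(report)  # {'status': 'review', 'flagged': ['B-2']}
```

Because each worker has one narrow job, each can be tested and debugged in isolation, without reproducing the whole workflow.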

The reason this pattern works is counterintuitive. You might assume that a single agent with access to all tools would be more efficient than coordinating multiple specialists. In practice, the opposite is true. Every tool you add to a single agent’s context degrades its reasoning accuracy. By the time a monolithic agent has 25 or 30 tools available, it struggles to select the right one for the right step, and the error surface expands combinatorially. The supervisor-worker pattern constrains each agent’s scope, which makes each individual agent dramatically more reliable and the overall system easier to debug.

Pro Tip: When building supervisor-worker systems, never let the supervisor agent call the LLM more than once per delegation cycle. The supervisor should decompose the full task into a plan in a single reasoning pass, then hand off each subtask without re-reasoning about the overall plan at every step. Re-reasoning at every delegation creates drift, where the supervisor gradually reinterprets the original instruction as it processes intermediate results. We enforce this by making the plan immutable once generated, with only the retry and exception paths triggering a re-plan.
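One way to enforce an immutable plan, sketched in Python under the assumption that the planner is any callable returning step names (an LLM call in practice): the plan is a frozen dataclass, the supervisor never consults the planner during normal delegation, and only a worker failure (modeled here as `RuntimeError`) triggers a bounded re-plan. `Plan`, `Supervisor`, and `max_replans` are illustrative names.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Plan:
    instruction: str
    steps: tuple  # a tuple of step names, so the plan cannot be edited in place


class Supervisor:
    def __init__(self, planner: Callable[[str], tuple], max_replans: int = 1):
        self.planner = planner        # the single reasoning pass
        self.max_replans = max_replans
        self.planner_calls = 0        # counted so any re-planning is visible

    def make_plan(self, instruction: str) -> Plan:
        self.planner_calls += 1
        return Plan(instruction, tuple(self.planner(instruction)))

    def execute(self, instruction: str, workers: dict) -> list:
        plan = self.make_plan(instruction)
        replans = 0
        while True:
            try:
                # Normal path: hand off every step without re-reasoning.
                return [workers[step]() for step in plan.steps]
            except RuntimeError:
                # Exception path: the only place a new plan may be generated.
                if replans >= self.max_replans:
                    raise
                replans += 1
                plan = self.make_plan(plan.instruction)  # always from the original instruction


attempts = {"b": 0}


def step_b() -> str:
    attempts["b"] += 1
    if attempts["b"] == 1:
        raise RuntimeError("upstream timeout")  # fails once, then succeeds
    return "b-done"


sup = Supervisor(planner=lambda instr: ("a", "b", "c"))
result = sup.execute("reconcile invoice",
                     {"a": lambda: "a-done", "b": step_b, "c": lambda: "c-done"})
print(result)             # ['a-done', 'b-done', 'c-done']
print(sup.planner_calls)  # 2
```

Re-planning from the original instruction, rather than from intermediate results, is what keeps the supervisor from gradually reinterpreting the task. For simplicity this sketch re-runs every step after a re-plan; a production runtime would checkpoint completed steps.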

There is a practical constraint that most teams discover too late: the handoff format between supervisor and worker agents matters enormously. If the supervisor passes unstructured natural language to each worker, the workers re-interpret the instruction through their own system prompts and the output drifts from what was intended. The fix is to enforce a structured schema for every handoff. Each worker receives a typed input object with explicit fields, not a prose description. This eliminates the telephone game effect where meaning degrades at every handoff in the chain.
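A minimal sketch of such a typed handoff in Python, using a frozen dataclass that validates itself on construction. `ExtractionTask`, its fields, and `parse_handoff` are hypothetical; a schema library such as Pydantic, or a JSON Schema validator, could do the same job with less hand-written code.

```python
from dataclasses import dataclass
from datetime import date

ALLOWED_KEYS = {"document_id", "fields", "as_of", "max_pages"}


@dataclass(frozen=True)
class ExtractionTask:
    """Typed handoff from the supervisor to a hypothetical extraction worker."""
    document_id: str
    fields: tuple  # exact fields to extract, not a prose description
    as_of: date
    max_pages: int = 50

    def __post_init__(self) -> None:
        # Reject malformed handoffs before the worker ever sees them.
        if not self.document_id:
            raise ValueError("document_id is required")
        if not self.fields:
            raise ValueError("at least one field must be requested")
        if self.max_pages <= 0:
            raise ValueError("max_pages must be positive")


def parse_handoff(raw: dict) -> ExtractionTask:
    """Build a task from the supervisor's JSON output; extra keys are an error."""
    unknown = set(raw) - ALLOWED_KEYS
    if unknown:
        raise ValueError(f"unexpected keys: {sorted(unknown)}")
    return ExtractionTask(
        document_id=raw["document_id"],  # a missing required key raises KeyError
        fields=tuple(raw["fields"]),
        as_of=date.fromisoformat(raw["as_of"]),
        max_pages=raw.get("max_pages", 50),
    )


task = parse_handoff({"document_id": "INV-2291", "fields": ["po_number", "total"],
                      "as_of": "2025-01-31"})
print(task.fields)  # ('po_number', 'total')
```

Rejecting unknown keys matters as much as requiring known ones: an extra free-text field is exactly where prose instructions creep back into the handoff.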

Stop storming every beach at once

The single most common reason AI agent projects fail is scope. A VP gets buy-in for “an AI initiative,” the team tries to automate five workflows simultaneously, and six months later nothing is in production. We call this the Normandy problem. You do not win by attacking everywhere. You win by picking one beachhead, one specific and well-defined workflow, and proving it works completely before expanding.

The beachhead has to be something concrete and measurable. Processing a specific type of Excel report. Responding to a specific category of inbound RFPs. Automating a specific QA test suite. It has to be something where you can point to a before-and-after metric within weeks, not quarters. The practical execution follows a five-phase methodology that we have refined across dozens of deployments.

Phase Zero is the data audit. Before anyone writes agent code, you inventory every data source, format, and system of record the agent will need to touch. The output is a map of what is structured versus unstructured, what is duplicated, and what is missing entirely.

Phase One is a clickable prototype in Figma. This sounds old-school, but it is the single most effective risk reduction tool we have found. Stakeholders validate the user journey, catch misunderstandings about what the agent should actually do, and align on the interface before any engineering budget is committed. We have saved a significant number of projects by catching a fundamental scope misunderstanding at the Figma stage instead of during development.
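The Phase Zero map can start as a small script over the inventory. The Python sketch below assumes each source has been tagged by hand with its format and the business entities it holds; the `DataSource` type, the format names, and the `STRUCTURED_FORMATS` set are illustrative assumptions.

```python
from dataclasses import dataclass

# Assumption: which formats count as "structured" is decided per organization.
STRUCTURED_FORMATS = {"xlsx", "csv", "sql", "erp_api"}


@dataclass(frozen=True)
class DataSource:
    name: str
    fmt: str             # e.g. "xlsx", "pdf", "email", "erp_api"
    entities: frozenset  # business entities this source holds


def audit(sources: list, required: set) -> dict:
    """Map what is structured, what is duplicated, and what is missing."""
    holders = {}  # entity -> names of the sources that contain it
    for src in sources:
        for entity in src.entities:
            holders.setdefault(entity, []).append(src.name)
    return {
        "structured": sorted(s.name for s in sources if s.fmt in STRUCTURED_FORMATS),
        "unstructured": sorted(s.name for s in sources if s.fmt not in STRUCTURED_FORMATS),
        "duplicated": {e: sorted(n) for e, n in holders.items() if len(n) > 1},
        "missing": sorted(required - set(holders)),
    }


sources = [
    DataSource("catalog_2023.xlsx", "xlsx", frozenset({"product", "price"})),
    DataSource("erp", "erp_api", frozenset({"product", "customer"})),
    DataSource("contracts", "pdf", frozenset({"discount_terms"})),
]
report = audit(sources, required={"product", "price", "customer", "freight_rules"})
print(report["duplicated"])  # {'product': ['catalog_2023.xlsx', 'erp']}
print(report["missing"])     # ['freight_rules']
```

Even a map this crude surfaces two facts before any agent code exists: which entities have competing sources of truth, and which ones the agent would need but cannot get.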

Phase Two is the proof-of-concept build. A scoped agent with clear success criteria running against real data, delivered in one to two weeks. If it works, you move to Phase Three: production build with full orchestration, permissions, audit trails, and state management. Phase Four is expansion to adjacent workflows, where each new agent builds on proven infrastructure and organizational trust earned in the previous phases.
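Phase Two success criteria are easiest to enforce when they are written down as a check that runs against labeled real cases. A minimal Python sketch, assuming the agent is any callable from input text to a label and that the accuracy target is agreed before the build starts; `poc_gate` and the toy keyword agent are hypothetical.

```python
from typing import Callable


def poc_gate(agent: Callable[[str], str], cases: list, min_accuracy: float) -> dict:
    """Score a proof-of-concept agent on labeled real-world cases."""
    failures = []
    for text, expected in cases:
        got = agent(text)
        if got != expected:
            failures.append({"input": text, "expected": expected, "got": got})
    accuracy = 1 - len(failures) / len(cases)
    return {"accuracy": accuracy, "passed": accuracy >= min_accuracy, "failures": failures}


def keyword_agent(text: str) -> str:
    """Toy agent: routes inbound requests by a single keyword rule."""
    return "urgent" if "asap" in text.lower() else "routine"


cases = [
    ("Need this ASAP", "urgent"),
    ("Quarterly report attached", "routine"),
    ("Call me back when you can", "routine"),
    ("Urgent: line 3 is down", "urgent"),
]
result = poc_gate(keyword_agent, cases, min_accuracy=0.9)
print(result["accuracy"], result["passed"])  # 0.75 False
```

Keeping the failing cases, not just the score, tells the team what to fix before committing to Phase Three.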

Pro Tip: During Phase One prototyping, always include the error states and edge case screens in the Figma, not just the happy path. The most valuable feedback you will get from stakeholders is not about the ideal flow. It is about what happens when the agent encounters an invoice with a missing PO number, or a contract clause it has never seen, or a data source that returns a timeout. Designing these failure paths in Figma forces the team to make decisions about fallback behavior before they are debugging it in production at 2am.
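The fallback decisions captured in those Figma screens can translate fairly directly into code. A minimal Python sketch for two of the edge cases named above, a missing PO number and a data-source timeout; `process_invoice`, `SourceTimeout`, and the retry and backoff parameters are hypothetical, and the unseen-contract-clause case is left out for brevity.

```python
import time
from typing import Callable


class SourceTimeout(Exception):
    """Raised by a data-source call that did not answer in time."""


def process_invoice(invoice: dict, fetch_po: Callable[[str], dict],
                    retries: int = 2, backoff_s: float = 0.0) -> dict:
    # Edge case: no PO number means there is nothing to match against.
    po_number = invoice.get("po_number")
    if not po_number:
        return {"status": "human_review", "reason": "missing PO number"}

    # Edge case: the PO system times out. Retry a bounded number of times,
    # then hand off to a person instead of guessing.
    for attempt in range(retries + 1):
        try:
            po = fetch_po(po_number)
            break
        except SourceTimeout:
            if attempt == retries:
                return {"status": "human_review", "reason": "PO lookup timed out"}
            time.sleep(backoff_s * 2 ** attempt)  # exponential backoff

    # Happy path: the totals either match or they do not.
    if po["total"] == invoice["total"]:
        return {"status": "approved"}
    return {"status": "human_review", "reason": "total mismatch"}


def slow_erp(po_number: str) -> dict:
    raise SourceTimeout(po_number)  # simulates a source that always times out


print(process_invoice({"total": 120.0}, slow_erp))
# {'status': 'human_review', 'reason': 'missing PO number'}
print(process_invoice({"po_number": "PO-7", "total": 120.0}, slow_erp))
# {'status': 'human_review', 'reason': 'PO lookup timed out'}
```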

Your AI agent is only as good as your worst spreadsheet

Here is the uncomfortable truth that most AI vendors skip in the sales process: if your data is a mess, your agents will be a mess. This is not a cliche. It is a project-killing reality that surfaces in two specific forms.

The first form is format fragmentation: critical business data trapped across Excel files, PDFs, emails, and ERPs that do not talk to each other. Agents need to read, normalize, and reconcile this data before they can reason about it. The second form, and the one with significantly higher value when addressed, is tribal knowledge: the expertise that lives in the heads of your senior people and nowhere else. Think of the veteran salesperson who knows which distributors require different pricing treatment, the lawyer who knows which contract clauses are actually negotiable versus which ones look negotiable but will get rejected by opposing counsel every time, or the QA lead who knows which test failures indicate real bugs versus which ones are environment noise that can be safely ignored.
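For the format-fragmentation half of the problem, the normalize-then-reconcile step can be sketched in Python. The column mappings, source names, and cleaning rules below are illustrative assumptions; real mappings would come out of the data audit. Prices are parsed as `Decimal` so that `$1,250.00` and `1250` compare equal without floating-point noise.

```python
from decimal import Decimal

# Hypothetical column mappings for two sources that describe the same products.
COLUMN_MAP = {
    "spreadsheet": {"Item #": "sku", "Unit Price": "price"},
    "erp": {"item_code": "sku", "list_price": "price"},
}


def normalize(source: str, row: dict) -> dict:
    """Rename columns and clean values into one canonical record shape."""
    mapping = COLUMN_MAP[source]
    mapped = {mapping[k]: v for k, v in row.items() if k in mapping}
    sku = str(mapped["sku"]).strip().upper().replace(" ", "")
    price = Decimal(str(mapped["price"]).replace("$", "").replace(",", "").strip())
    return {"sku": sku, "price": price, "source": source}


def reconcile(rows: list) -> tuple:
    """Merge by SKU (first source seen wins) and report SKUs whose prices disagree."""
    merged, conflicts = {}, []
    for row in rows:
        existing = merged.get(row["sku"])
        if existing is None:
            merged[row["sku"]] = row
        elif existing["price"] != row["price"] and row["sku"] not in conflicts:
            conflicts.append(row["sku"])
    return merged, conflicts


rows = [
    normalize("spreadsheet", {"Item #": " ab-100 ", "Unit Price": "$1,250.00"}),
    normalize("erp", {"item_code": "AB-100", "list_price": 1250}),
    normalize("erp", {"item_code": "cd-200", "list_price": "99.5"}),
    normalize("spreadsheet", {"Item #": "CD-200", "Unit Price": "$95.00"}),
]
merged, conflicts = reconcile(rows)
print(sorted(merged))  # ['AB-100', 'CD-200']
print(conflicts)       # ['CD-200']
```

The sketch only reports conflicts; deciding which system is authoritative for each field is a business rule, and often a piece of tribal knowledge in its own right.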

Extracting and encoding tribal knowledge into an agent’s reasoning is where the compounding value lives. When you capture a subject matter expert’s decision-making patterns and embed them into an agent, you create something durable. The agent does not forget. It does not retire. It applies that expertise consistently at a scale the expert never could alone. But this only works if you do the extraction work upfront through structured interviews, documentation, and validation with the expert, rather than hoping the LLM will figure it out from raw data.
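One way to picture the encoding step: the expert's decisions become explicit, named rules, and the cases the expert validated become a regression suite that runs whenever a rule changes. The Python sketch below is hypothetical throughout, including the channels, thresholds, and discount rates; it illustrates the structure, not anyone's actual pricing policy.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    name: str                        # the expert's own wording, kept for the audit trail
    applies: Callable[[dict], bool]
    discount: float                  # fraction off list price
    source: str                      # where the rule came from


# Hypothetical rules, listed in the priority order the expert gave them.
RULES = [
    Rule("Regional distributors over 500 units get volume pricing",
         lambda o: o["channel"] == "regional_distributor" and o["units"] > 500,
         0.12, "sales SME interview (hypothetical)"),
    Rule("Government buyers get contract pricing regardless of volume",
         lambda o: o["channel"] == "government",
         0.08, "sales SME interview (hypothetical)"),
]


def price(order: dict, list_price: float) -> tuple:
    """Apply the first matching rule and report which one fired."""
    for rule in RULES:
        if rule.applies(order):
            return round(list_price * (1 - rule.discount), 2), rule.name
    return list_price, "no rule matched: list price"


# Cases the expert reviewed and signed off on; rerun whenever a rule changes.
EXPERT_CASES = [
    ({"channel": "regional_distributor", "units": 800}, 100.0, 88.0),
    ({"channel": "regional_distributor", "units": 100}, 100.0, 100.0),
    ({"channel": "government", "units": 10}, 100.0, 92.0),
]


def validate() -> list:
    """Return the indices of expert cases the encoded rules get wrong."""
    return [i for i, (order, list_price, expected) in enumerate(EXPERT_CASES)
            if price(order, list_price)[0] != expected]


print(validate())  # []
```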

Pro Tip: Run what we call a “knowledge decay audit” before you start tribal knowledge extraction. Identify the three to five people in the organization whose departure would cause the most operational damage, then map exactly which decisions they make that nobody else can replicate. This gives you a prioritized extraction list. Start with the person closest to retirement or the role with the highest turnover. We have seen companies lose six months of agent development progress because the domain expert who was supposed to validate the agent’s reasoning left the company before the validation phase.
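The ranking rule described above is simple enough to write down. A Python sketch, assuming the team scores each person by hand; the fields, the 1-5 scales, and the damage score (unique decisions times impact) are illustrative assumptions, not a standard method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Expert:
    role: str
    unique_decisions: int  # decisions nobody else in the organization can make
    impact: int            # 1-5: operational damage if those decisions stop
    departure_risk: int    # 1-5: nearness to retirement, role turnover, etc.


def extraction_order(experts: list, top_n: int = 5) -> list:
    """Pick the top_n most damaging departures, then order them by departure risk."""
    by_damage = sorted(experts, key=lambda e: e.unique_decisions * e.impact, reverse=True)
    most_critical = by_damage[:top_n]
    return [e.role for e in sorted(most_critical, key=lambda e: e.departure_risk, reverse=True)]


experts = [
    Expert("pricing lead", unique_decisions=10, impact=5, departure_risk=2),
    Expert("senior counsel", unique_decisions=6, impact=4, departure_risk=5),
    Expert("ops analyst", unique_decisions=2, impact=2, departure_risk=5),
    Expert("QA lead", unique_decisions=8, impact=3, departure_risk=1),
]
print(extraction_order(experts, top_n=3))  # ['senior counsel', 'pricing lead', 'QA lead']
```

Filtering by damage first and only then sorting by departure risk keeps a high-turnover but low-impact role (the ops analyst here) from jumping the queue.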

Why self-modifying systems will replace the connector model

Most enterprise software solves the integration problem by maintaining a library of pre-built connectors. Salesforce connector, NetSuite connector, Jira connector. Every time a new system enters the picture, someone has to build and maintain another connector. This model made sense when integration was a one-time engineering task. It breaks down when you need agents that operate across dozens of systems and data sources that change their APIs quarterly.

The alternative is an agent runtime that can read API documentation and generate its own integrations on the fly. Instead of maintaining a connector for every system, the runtime studies the target API, generates the integration code, tests it in a sandbox, and deploys it. When the API changes, the agent adapts without a manual update cycle. This is the architecture we built into Wippy. The system includes a self-reflection mechanism: it examines its own composition (its tools, integrations, and workflows) and can modify or extend itself when it encounters a new requirement.

The practical implication is that non-technical domain experts can describe what they need in natural language, and the system builds the workflow without a round-trip through an engineering team. This matters because the traditional communication loop between a business user and an engineer is lossy by nature. Requirements get simplified, context gets dropped, and the delivered solution often misses the nuance of what the expert actually needed. Removing that translation layer does not eliminate the need for engineering oversight, but it compresses the feedback cycle from weeks to hours.

Pro Tip: When deploying self-modifying agents, always enforce a “sandbox-then-promote” pattern. The agent generates integration code, but that code executes first in an isolated sandbox against a copy of the target data. Only after the sandbox run completes successfully and passes a validation check does the code get promoted to the production workflow. We learned this the hard way: a self-modifying agent that writes directly to production APIs will eventually generate a valid but semantically wrong integration that corrupts downstream data. The sandbox step catches these. It adds about 30 seconds to each new integration and has saved us from three data integrity incidents in the last year alone.
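The promotion gate itself is small. The Python sketch below only approximates a sandbox with `copy.deepcopy`, which isolates in-process data but nothing else; a real sandbox would run the generated code in a separate process or container without production credentials. `sandbox_then_promote`, the registry, and the validation rule are hypothetical.

```python
import copy
from typing import Callable

Integration = Callable[[list], list]


def sandbox_then_promote(candidate: Integration, production_data: list,
                         validate: Callable[[list, list], bool],
                         registry: dict, name: str) -> bool:
    """Run generated code on a copy of the data; promote it only if the result validates."""
    sandbox_data = copy.deepcopy(production_data)  # the candidate never sees the original
    try:
        output = candidate(sandbox_data)
    except Exception:
        return False            # crashed in the sandbox: never promoted
    if not validate(production_data, output):
        return False            # ran cleanly but produced semantically wrong data
    registry[name] = candidate  # promotion: production workflows look integrations up here
    return True


rows = [{"id": 1, "amount_cents": 1999}, {"id": 2, "amount_cents": 500}]


def cents_to_dollars(data):
    return [{"id": r["id"], "amount": r["amount_cents"] / 100} for r in data]


def sign_flipped(data):  # valid code, semantically wrong output
    return [{"id": r["id"], "amount": -r["amount_cents"] / 100} for r in data]


def looks_right(before, after):
    return len(before) == len(after) and all(r["amount"] >= 0 for r in after)


registry = {}
print(sandbox_then_promote(sign_flipped, rows, looks_right, registry, "billing"))      # False
print(sandbox_then_promote(cents_to_dollars, rows, looks_right, registry, "billing"))  # True
```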

Agent architecture patterns that ship in production

Not every agent problem calls for the same structure. In practice, the architecture decision depends on whether the workflow is deterministic or adaptive, how long the process runs, and whether a human needs to intervene mid-stream.

| Pattern | Workflow Type | State Duration | Human-in-Loop | Use Case |
|---|---|---|---|---|
| Pipeline Agent | Deterministic, sequential | Minutes | No | Document processing, data ETL, report generation |
| Supervisor + Specialists | Adaptive, branching | Hours to days | Optional checkpoints | QA automation, CPQ quoting, intake processing |
| Event-Driven Reactor | Reactive, trigger-based | Persistent | Alert-based escalation | Monitoring, compliance flagging, SLA enforcement |
| Human-in-Loop Orchestrator | Collaborative, approval-gated | Days to weeks | Mandatory at decision points | Contract review, financial approvals, audit workflows |

Pro Tip: The most common architecture mistake we see is teams selecting the Pipeline Agent pattern because it is simplest, then discovering mid-build that their workflow actually requires approval gates or retry logic. Before you commit to an architecture, map every decision point in the workflow where a human currently intervenes. If there are more than two, you need the Supervisor + Specialists pattern at minimum. Refactoring from Pipeline to Supervisor after development has started typically costs 40-60% of the original build budget.
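The tip's rule of thumb, together with the pattern table, can be expressed as a small selection function in Python. Only the more-than-two threshold comes from the tip; the ordering of the other checks is an assumption that mirrors the table, and `choose_pattern` is a hypothetical helper.

```python
def choose_pattern(deterministic: bool, human_decision_points: int,
                   trigger_based: bool = False, approvals_mandatory: bool = False) -> str:
    """Map workflow traits to one of the four patterns in the table above."""
    if approvals_mandatory:
        return "Human-in-Loop Orchestrator"  # sign-off required at decision points
    if trigger_based:
        return "Event-Driven Reactor"        # reacts to events instead of running a job
    if not deterministic or human_decision_points > 2:
        return "Supervisor + Specialists"    # branching work or frequent human checkpoints
    return "Pipeline Agent"                  # deterministic, at most two human touchpoints


print(choose_pattern(deterministic=True, human_decision_points=0))  # Pipeline Agent
print(choose_pattern(deterministic=True, human_decision_points=3))  # Supervisor + Specialists
```

A deterministic workflow with one or two human touchpoints still lands on Pipeline Agent here. That is the gray zone where the tip's warning about retrofitting approval gates applies, so treat the result as a prompt for review rather than a final answer.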

Three failure modes that only surface after the demo works

The confidence calibration gap

An agent that is 95% accurate sounds impressive until you calculate what 5% failure means at production volume. A quoting agent that runs 200 quotes per day produces 10 wrong quotes daily. Whether that is tolerable depends entirely on the downstream consequence. For internal report summaries, 95% might be fine. For customer-facing pricing commitments, it is a liability. The fix is not making the model more accurate. The fix is designing the workflow so that high-consequence outputs always route through a confidence-scored approval gate. When the agent’s confidence drops below a defined threshold, the output goes to human review automatically. This is an orchestration decision, not a model decision.
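The arithmetic and the gate fit in a few lines of Python. The sketch assumes each output already carries a confidence score between 0 and 1 (how that score is produced and calibrated is a separate problem) and uses "customer-facing" as a stand-in for high consequence; the threshold values are placeholders, not recommendations.

```python
from dataclasses import dataclass


def expected_daily_errors(volume: int, accuracy: float) -> float:
    """Wrong outputs per day at a given volume: volume x (1 - accuracy)."""
    return volume * (1 - accuracy)


@dataclass(frozen=True)
class AgentOutput:
    payload: dict
    confidence: float      # 0.0-1.0, assumed to be produced upstream
    customer_facing: bool  # stand-in for "high consequence" in this sketch


# Placeholder thresholds: stricter when the output reaches a customer.
THRESHOLDS = {True: 0.98, False: 0.80}


def route(output: AgentOutput) -> str:
    """Below the threshold, the output goes to a human instead of shipping."""
    if output.confidence >= THRESHOLDS[output.customer_facing]:
        return "auto_send"
    return "human_review"


print(round(expected_daily_errors(200, 0.95), 2))  # 10.0
quote = AgentOutput({"quote_id": "Q-1"}, confidence=0.93, customer_facing=True)
summary = AgentOutput({"report": "weekly"}, confidence=0.93, customer_facing=False)
print(route(quote), route(summary))  # human_review auto_send
```

The same confidence score produces different routing depending on consequence, which is the point of making this an orchestration decision rather than a model decision.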

State management across time

Demos run in minutes. Production workflows run for days or weeks. A contract review agent that processes a document in a single session is a demo. A contract review agent that tracks a document through legal review, collects approvals from three stakeholders, handles revisions, and maintains a complete audit trail across a two-week cycle is a product. The infrastructure required for the second scenario is fundamentally different. You need durable state that survives server restarts, event-driven triggers that respond to external actions rather than polling, and observability that lets the engineering team trace exactly what happened on day 9 of a 14-day workflow. This is the problem Temporal was designed to solve and the reason Wippy is built on top of it.
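Temporal provides durable execution, signals, and workflow history as a managed runtime. The Python sketch below does not use Temporal; it only illustrates the core idea with a JSON checkpoint on local disk. State is persisted after every event, an external trigger calls `on_event` instead of the workflow polling, and the stored history answers "what happened on day 9?". `ContractReview`, its approvers, and the event names are hypothetical.

```python
import json
from pathlib import Path


class ContractReview:
    """Toy durable workflow: state is written to disk after every event,
    so a restarted process can pick up where the previous one stopped."""

    APPROVERS = ("legal", "finance", "sales")  # hypothetical stakeholders

    def __init__(self, path: Path, contract_id: str):
        self.path = path
        if path.exists():
            self.state = json.loads(path.read_text())  # resume after a restart
        else:
            self.state = {"contract_id": contract_id, "approvals": [], "history": []}
            self._save()

    def _save(self) -> None:
        # Write to a temp file, then swap it in, so a crash mid-write
        # does not leave a truncated state file behind.
        tmp = self.path.with_suffix(".tmp")
        tmp.write_text(json.dumps(self.state))
        tmp.replace(self.path)

    def on_event(self, day: int, kind: str, actor: str) -> None:
        """Invoked by an external trigger (webhook, email hook, UI action), not by polling."""
        self.state["history"].append({"day": day, "kind": kind, "actor": actor})
        if kind == "approved" and actor not in self.state["approvals"]:
            self.state["approvals"].append(actor)
        self._save()

    @property
    def done(self) -> bool:
        return set(self.APPROVERS) <= set(self.state["approvals"])

    def trace(self, day: int) -> list:
        """Answer 'what happened on day N?' from the persisted history."""
        return [e for e in self.state["history"] if e["day"] == day]
```

Constructing `ContractReview` again with the same path after a process restart reloads the saved approvals and history instead of starting over.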

The integration maintenance spiral

Teams often underestimate the ongoing cost of maintaining agent integrations. An agent that connects to five systems works beautifully at launch. Six months later, two of those systems have updated their APIs, one has changed its authentication method, and the agent is silently failing on 15% of its tasks because nobody noticed the deprecation warnings. The self-modifying runtime approach addresses this directly, but even without it, the minimum viable practice is to build health checks into every integration endpoint and alert when response schemas change. We run automated schema comparison tests nightly across every integration in every deployed Wippy instance. The cost is trivial. The alternative is discovering data drift three months after it started.
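A nightly schema comparison does not need much machinery. The Python sketch below flattens a JSON response into path/type pairs and diffs it against a stored baseline; `schema_of`, `schema_diff`, and `has_drift` are hypothetical helpers, and sampling only the first array element is a simplifying assumption.

```python
def schema_of(value, path: str = "$") -> dict:
    """Flatten a JSON-like value into {path: type name}."""
    if isinstance(value, dict):
        out = {path: "object"}
        for key, child in value.items():
            out.update(schema_of(child, f"{path}.{key}"))
        return out
    if isinstance(value, list):
        out = {path: "array"}
        if value:  # assumption: the first element is representative of the rest
            out.update(schema_of(value[0], f"{path}[]"))
        return out
    return {path: type(value).__name__}  # "int", "float", "str", "bool", "NoneType"


def schema_diff(baseline: dict, current: dict) -> dict:
    """Compare tonight's response schema with the stored baseline."""
    return {
        "removed": sorted(baseline.keys() - current.keys()),
        "added": sorted(current.keys() - baseline.keys()),
        "retyped": sorted(k for k in baseline.keys() & current.keys()
                          if baseline[k] != current[k]),
    }


def has_drift(diff: dict) -> bool:
    return any(diff.values())


baseline = schema_of({"id": 1, "total": 10.5, "lines": [{"sku": "A-1"}]})
current = schema_of({"id": "1", "amount": 10.5, "lines": [{"sku": "A-1"}]})
diff = schema_diff(baseline, current)
print(diff)             # {'removed': ['$.total'], 'added': ['$.amount'], 'retyped': ['$.id']}
print(has_drift(diff))  # True
```

One practical wrinkle: JSON numbers can arrive as `int` one day and `float` the next (`10` versus `10.5`), so a real check would probably treat them as one numeric type to avoid false alarms.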

The only asset that compounds is encoded judgment

The companies shipping agent systems into production in 2026 are not doing anything exotic. They audit their data before touching a model. They scope ruthlessly to one beachhead workflow. They prototype before they build. They extract tribal knowledge and encode it into agents the business owns permanently. And they build on infrastructure that gives the client full ownership of every agent, every workflow, and every data pipeline.

The real deliverable at the end of this process is not the AI model. Models are commoditizing fast. The deliverable is the institutional judgment you have captured and operationalized: the decision-making patterns of your best people, encoded in systems that run 24/7, applied consistently at scale, and owned entirely by your organization. That is IP that compounds. Every workflow you encode makes the next one faster. Every edge case you handle makes the system smarter. Every audit trail you generate makes the compliance conversation easier. The builders willing to do the unglamorous work of making this real are the ones who will own the operational infrastructure of their industries in five years.

Stuck between a working demo and a production deployment?

Most AI agent failures are not technical. They are architectural and organizational. If you are building agents on data you have not audited, with a scope that is too broad, and no plan for how the system survives its first encounter with real edge cases, you already know what happens next.

Spiral Scout’s AI Readiness Audit maps your data, identifies what is buildable today, and gives you a concrete path from prototype to production in two weeks.