A hydraulic hose distributor we work with has a senior sales rep who has been quoting custom assemblies for 22 years. He knows which fittings are compatible under specific pressure ratings, which substitutions will pass inspection, and which configurations a customer’s engineering team will reject before they even review the spec sheet. None of that knowledge is in a database or a manual. It lives entirely in his head. When he retires, and he’s close, the company loses the ability to quote accurately until someone else spends half a decade learning the hard way. This is one of the most expensive problems in industrial companies, and almost nobody is building AI agent systems to fix it.

Most AI companies are building horizontally: another chatbot framework, another prompt playground, another “platform” that does a little of everything and solves nothing completely. We took our open-source AI runtime and went vertical. We started partnering with subject matter experts—the 20-year veterans, the domain specialists, the people who actually know the rules—and encoding their knowledge into agent architectures that work without the expert in the room. Not “AI implementation” in the abstract. The specific, often boring, high-stakes problem of turning undocumented expertise into systems that run reliably in production.

Key Takeaways:

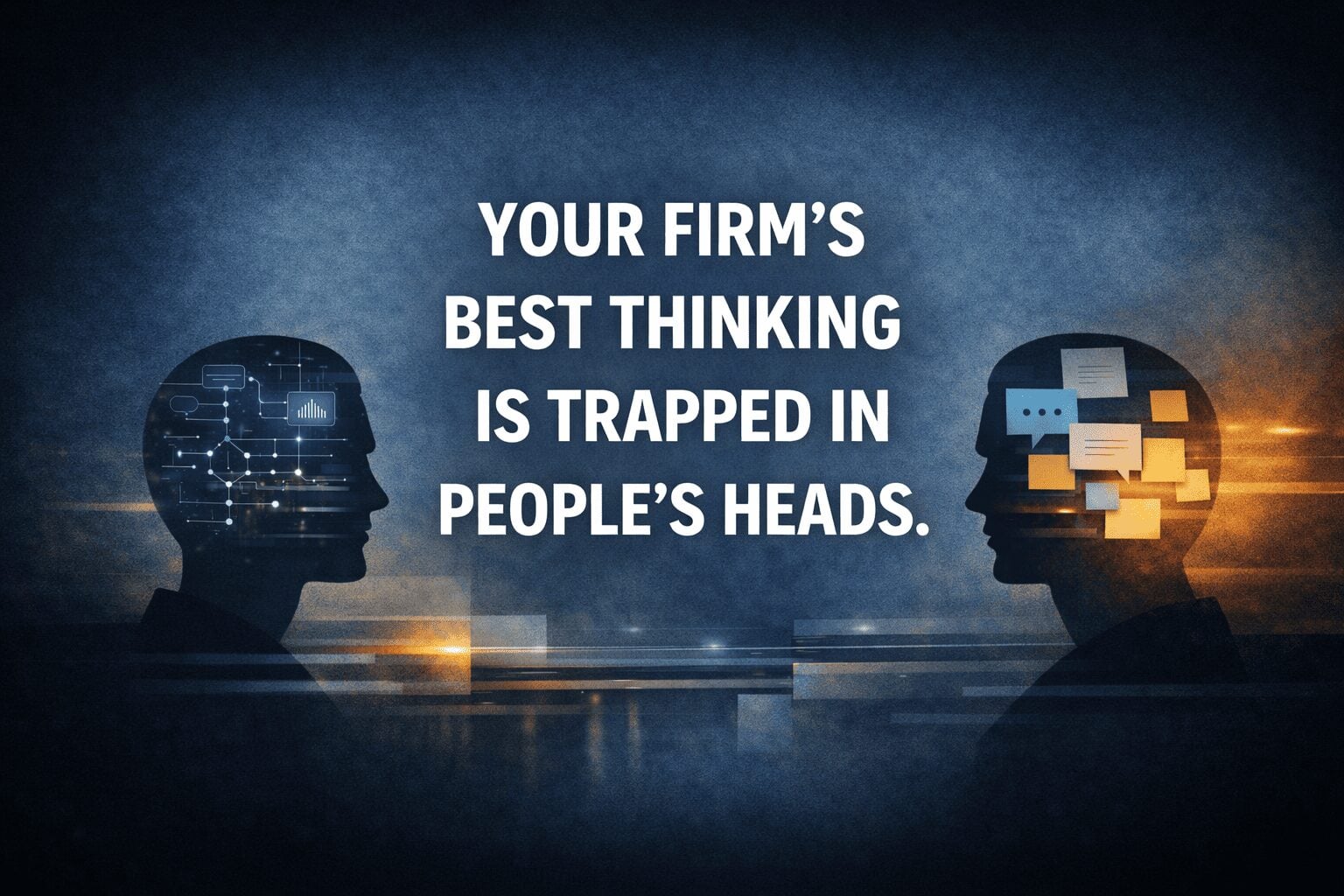

- The most valuable knowledge in most companies is undocumented and walks out the door when senior employees leave. AI agents that encode this tribal knowledge solve a structural business problem, not a technology problem.

- Horizontal AI platforms fail for the same reason unconfigured ERPs fail—the value is in domain-specific rules and heuristics, not infrastructure.

- Vertical agent systems in manufacturing, legal, industrial distribution, and automotive are already shipping in production, replacing months of manual work with minutes of automated execution.

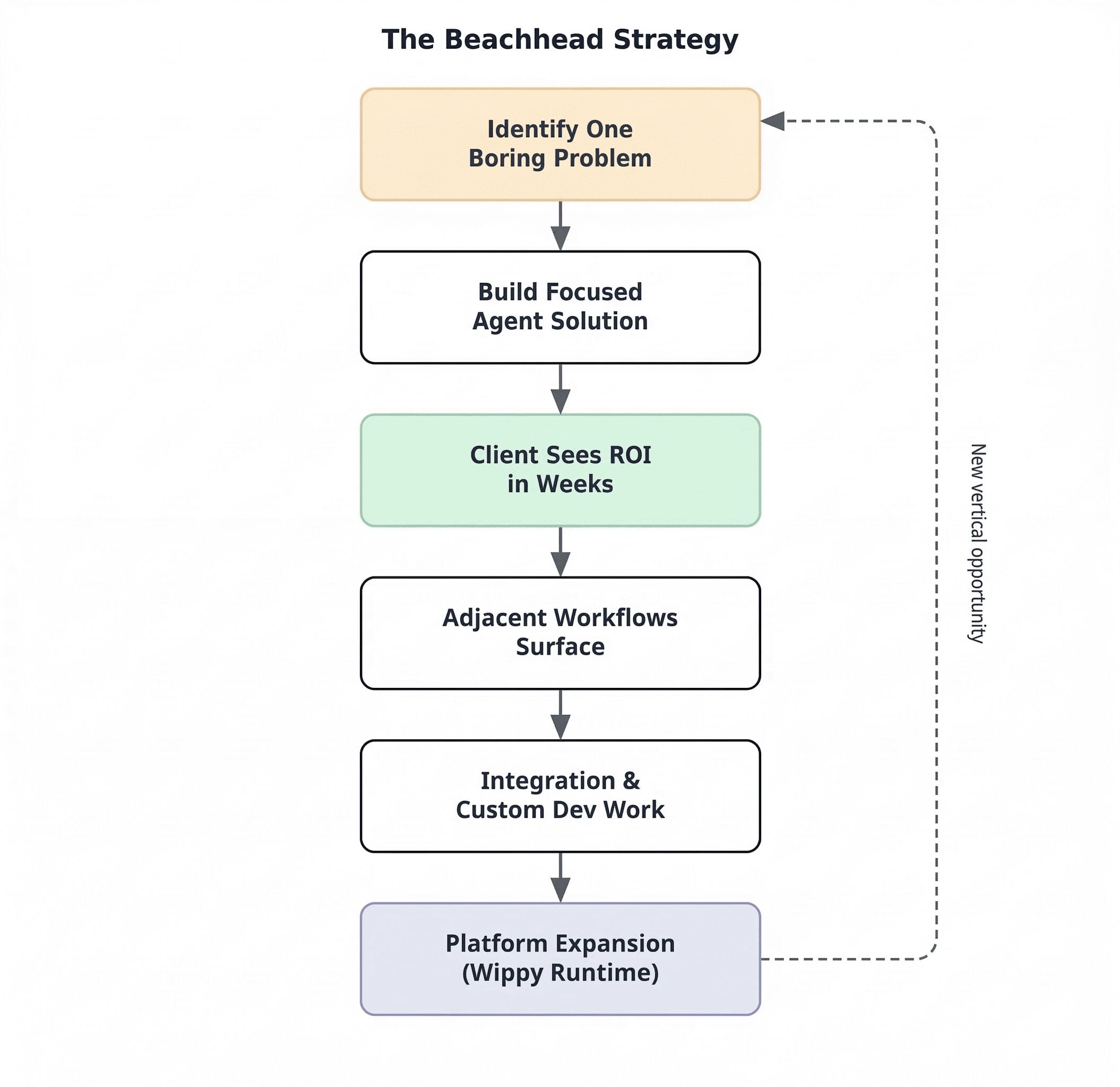

- The beachhead strategy—solving one specific, boring workflow completely before expanding—is the only repeatable path to AI adoption that sticks.

- Agents work as an integration layer on top of legacy systems, eliminating the risk and cost of full rewrites.

Why Horizontal AI Breaks When It Hits Real Companies

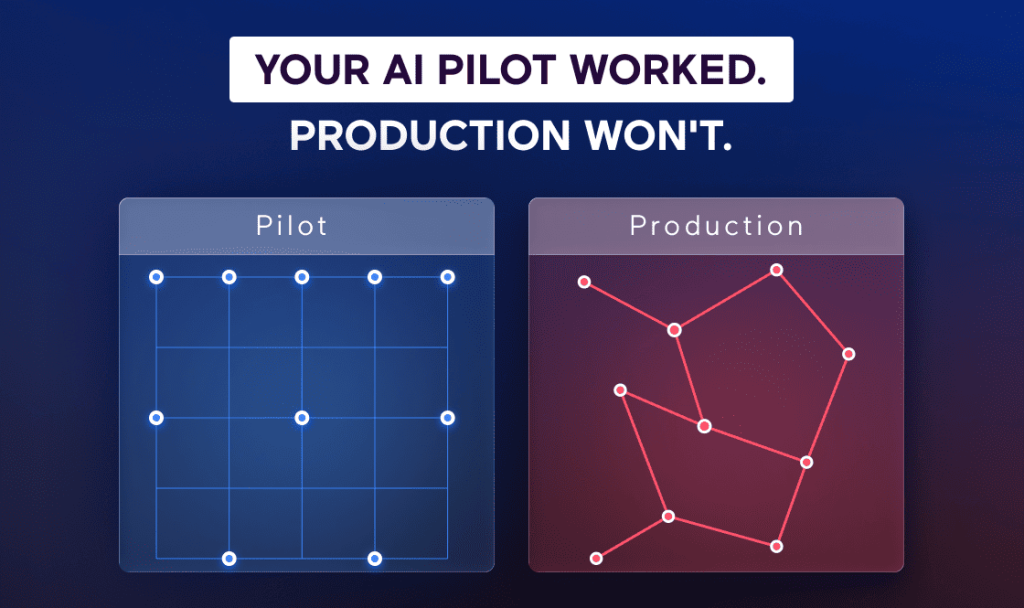

We tried the horizontal approach first. Build a general-purpose agent framework, let clients figure out what to do with it. In practice, that breaks for the same reason a general-purpose ERP breaks without configuration: the value is in the domain-specific rules, not the infrastructure. A manufacturing company doesn’t need “AI.” They need a system that knows their specific supplier data formats, compatibility matrices, and quality tolerances. A law firm doesn’t need a chatbot. They need an agent that understands indemnification caps, regulatory conditions, and deal structure patterns across ten years of historical transactions.

The pattern we kept seeing across manufacturing, legal, industrial distribution, and automotive was identical. Every one of these industries has the same structural issue: the most valuable knowledge in the company is undocumented, lives in the heads of senior people, and disappears when they retire or get poached. That’s not a technology gap. It’s a knowledge encoding problem, and solving it requires working directly with the domain expert to capture what they know.

So we flipped the model. We now partner with subject matter experts and encode their knowledge into agent architectures. The client trains the agent in natural language—like onboarding a junior hire. They say things like “when you see this type of legal clause, flag it and check for X.” We build the supervisor and planner layers that turn those instructions into reliable workflows that worker agents then perform on behalf of their human counterpart. The result isn’t a generic chatbot. It’s a purpose-built system that thinks like your best employee on their best day, every time, at scale.
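To make the layering concrete, here is a minimal sketch of how an instruction like “when you see this type of legal clause, flag it and check for X” could flow through planner, worker, and supervisor steps. It is an illustration under simplifying assumptions, not our production runtime: the names `Rule`, `plan`, `run_worker`, and `supervise` are hypothetical, and the keyword trigger stands in for whatever clause recognition a real system would use.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    """One expert instruction: 'when you see X, flag it and check for Y'."""
    name: str
    trigger: str                  # keyword that activates the rule (illustrative)
    check: Callable[[str], bool]  # returns True when the follow-up check passes


def plan(text: str, rules: list[Rule]) -> list[Rule]:
    """Planner: select the rules whose trigger appears in the text."""
    lowered = text.lower()
    return [rule for rule in rules if rule.trigger in lowered]


def run_worker(text: str, rule: Rule) -> dict:
    """Worker: run one rule's follow-up check and return a structured result."""
    return {"rule": rule.name, "flagged": True, "check_passed": rule.check(text)}


def supervise(text: str, rules: list[Rule]) -> list[dict]:
    """Supervisor: plan, dispatch to workers, and collect results for review."""
    return [run_worker(text, rule) for rule in plan(text, rules)]


# Hypothetical rule: flag indemnification clauses and check that a cap is stated.
rules = [
    Rule(
        name="indemnification_cap",
        trigger="indemnif",
        check=lambda t: "cap" in t.lower(),
    )
]

clause = "Seller shall indemnify Buyer for all losses arising from breach."
results = supervise(clause, rules)
```

Here the clause triggers the rule but states no cap, so the result comes back flagged with a failed check, which is the signal a human reviewer would act on.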

Tribal Knowledge Encoding Patterns Across Verticals

The specifics change by industry, but the encoding pattern is consistent. A domain expert’s heuristics get translated into agent rules, validation logic, and structured outputs. The table below maps how this plays out in practice across the verticals where we’ve shipped production systems.

| Vertical | Knowledge Type | Agent Behavior | Input Format | Production Impact |
| --- | --- | --- | --- | --- |
| Manufacturing | Supplier data schemas, column mappings, unit conversions, QA tolerances | Recognizes and normalizes inconsistent spreadsheet formats against canonical schema | Excel/CSV from 50+ suppliers | 6 weeks of manual work → minutes per cycle |
| Legal | Clause identification, risk factor patterns, deal term heuristics, precedent matching | Extracts deal metrics into structured queryable formats; lawyers build agents via natural language | PDFs, unstructured docs, historical deal data | Days of review → seconds; 80+ agents built by non-technical lawyers |
| Industrial Distribution | Part compatibility, substitution rules, pressure ratings, configuration validation | CPQ agent acts as digital sales twin; validates configurations via rules engine with conversational interface | Conversational natural language + product catalog | Junior reps quote like 20-year veterans; self-serve customer quoting |
| Automotive / Fleet | Telematics interpretation, maintenance history correlation, cross-system conflict resolution | Normalizes inputs from fragmented systems; establishes single source of truth before diagnostic layer | Telematics APIs, shop software, driver reports | Data layer cleaned first; predictive repair routing deployed on solid foundation |

What Shipping Vertical Agents Looks Like in Practice

Manufacturing: Six Weeks of Data Cleanup Collapsed Into Minutes

The pain here is unglamorous and massive. Manufacturing companies receive thousands of spreadsheets from suppliers with inconsistent formatting: typos, merged cells, different column names for the same data. A company we work with was spending about six weeks of staff time manually normalizing supplier data every cycle just to ingest clean records into their database, and the work required coordination across multiple departments and stakeholders.

We deployed agents that recognize and map data formats automatically. Not a custom importer for each vendor, but an agent trained to negotiate format discrepancies the way a human data analyst would—except it runs in minutes instead of weeks. This is the “last mile of data integration” that nobody wants to talk about because it isn’t glamorous, but it’s foundational. It’s also where companies hemorrhage the most operational time, and where future automation projects will break if the data layer isn’t clean first.
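As a rough illustration of the mapping step, the sketch below matches messy supplier headers to a canonical schema. It uses fuzzy string matching from Python's standard `difflib` as a simple stand-in for the agent's judgment. The canonical columns, the alias table, and the 0.8 cutoff are all assumptions made up for this example.

```python
import difflib

# Canonical schema and known aliases are illustrative assumptions.
CANONICAL = ["part_number", "quantity", "unit_price"]
ALIASES = {
    "part no": "part_number",
    "part #": "part_number",
    "qty": "quantity",
    "price each": "unit_price",
}


def normalize(header: str) -> str:
    """Lowercase, trim, and collapse separators so variants compare equal."""
    cleaned = header.strip().lower().replace("_", " ").replace(".", " ")
    return " ".join(cleaned.split())


def map_column(header: str, cutoff: float = 0.8):
    """Return the canonical column for a supplier header, or None if unsure."""
    key = normalize(header)
    if key in ALIASES:
        return ALIASES[key]
    candidates = [c.replace("_", " ") for c in CANONICAL]
    match = difflib.get_close_matches(key, candidates, n=1, cutoff=cutoff)
    return match[0].replace(" ", "_") if match else None


def map_headers(headers):
    """Map every header; unmapped ones go to a review list instead of being guessed."""
    mapped, review = {}, []
    for header in headers:
        target = map_column(header)
        if target:
            mapped[header] = target
        else:
            review.append(header)
    return mapped, review


mapped, review = map_headers(["Part No.", " QTY ", "Unit Pirce", "Notes"])
```

The design choice worth noting is the review list: a header the matcher is not confident about is routed to a person rather than silently mapped, which keeps a typo from becoming a corrupted record.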

Legal: When Domain Experts Become Their Own Engineers

Law firms sit on terabytes of historical deal data trapped in PDFs and unstructured documents. During M&A, lawyers spend hours manually reviewing documents for specific clauses—indemnification caps, risk factors, regulatory conditions. The work is high-value but brutally repetitive.

We built a legal knowledge base where agents extract deal metrics into structured, queryable formats. A lawyer can now ask “show me all deals from 2020 with this specific risk factor” and get an answer in seconds instead of days. More importantly, with one legal client we enabled non-technical lawyers to build 80+ agents themselves using natural language. These lawyers became their own engineers. They combined their subject matter expertise directly with a coding agent that helped them build the automations, agents, and tools they needed. They didn’t need our engineering team to configure every workflow. They described what they wanted, and the system built it. That’s the structural shift: the domain expert doesn’t file a ticket and wait. They encode their own knowledge, on their own timeline.
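The “structured, queryable” part can be pictured with a small sketch. Assume an extraction agent has already turned each deal's documents into a record. The `DealRecord` fields, the sample deals, and the `find_deals` helper below are hypothetical and exist only to show the shape of the query.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class DealRecord:
    """Structured output of an extraction agent for one deal (illustrative fields)."""
    deal_id: str
    signed: date
    indemnification_cap_pct: Optional[float]  # cap as % of price, if one was stated
    risk_factors: frozenset = field(default_factory=frozenset)


def find_deals(records, year: int, risk_factor: str):
    """Answer 'show me all deals from <year> with this specific risk factor'."""
    return [
        r for r in records
        if r.signed.year == year and risk_factor in r.risk_factors
    ]


# Invented sample data, not real deals.
records = [
    DealRecord("D-001", date(2020, 3, 14), 10.0, frozenset({"antitrust review"})),
    DealRecord("D-002", date(2020, 9, 2), None, frozenset({"customer concentration"})),
    DealRecord("D-003", date(2021, 1, 20), 15.0, frozenset({"antitrust review"})),
]

hits = find_deals(records, 2020, "antitrust review")
hit_ids = [r.deal_id for r in hits]
```

Once the records exist, the lawyer's question becomes a filter over typed fields instead of a manual read through PDFs. The hard part remains the extraction that produces those fields, which this sketch does not attempt.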

Industrial Distribution: The Quoting Bottleneck That Costs More Than You Think

Configuring complex industrial products—hydraulic hose assemblies, pump systems, custom fabrication—requires years of hands-on experience. The senior sales rep who knows which parts are compatible, which substitutions work, and which configurations will fail inspection is the most valuable person in the building. They’re also the bottleneck. When they leave, the company’s quoting accuracy falls off a cliff.

We built a Configure, Price, Quote (CPQ) agent that acts as a digital sales twin. It uses a rules engine to prevent invalid configurations but lets users interact conversationally, with requests like “I need a hose assembly rated for offshore oil platforms, 3000 PSI, with quick-disconnect fittings.” A customer can now serve themselves, or a junior sales employee with three months of job experience can quote like a veteran with two decades by working directly with the agent. That isn’t a productivity improvement. It’s a structural change in how the business operates, and the client owns the IP outright.
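A stripped-down sketch of the rules-engine half might look like the following. The conversational layer would translate a request into a candidate configuration, and the rules then decide whether it is quotable. The catalog entries, pressure ratings, and the two rules shown (pressure rating and size match) are invented for illustration and are not real product data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Part:
    sku: str
    kind: str     # "hose" or "fitting"
    max_psi: int  # maximum working pressure (illustrative values)
    size: str     # nominal size code; hose and fitting must match


# Hypothetical catalog; real ratings would come from manufacturer data.
CATALOG = {
    "H-100": Part("H-100", "hose", 4000, "-8"),
    "H-200": Part("H-200", "hose", 2500, "-8"),
    "F-QD8": Part("F-QD8", "fitting", 3500, "-8"),
    "F-QD6": Part("F-QD6", "fitting", 5000, "-6"),
}


def validate(hose_sku: str, fitting_sku: str, required_psi: int) -> list:
    """Return rule violations; an empty list means the configuration is quotable."""
    hose, fitting = CATALOG[hose_sku], CATALOG[fitting_sku]
    problems = []
    # Rule 1: every component must meet the required working pressure.
    for part in (hose, fitting):
        if part.max_psi < required_psi:
            problems.append(
                f"{part.sku} is rated {part.max_psi} PSI, below required {required_psi}"
            )
    # Rule 2: hose and fitting sizes must match.
    if hose.size != fitting.size:
        problems.append(
            f"size mismatch: {hose.sku} is {hose.size}, {fitting.sku} is {fitting.size}"
        )
    return problems


ok = validate("H-100", "F-QD8", 3000)
bad = validate("H-200", "F-QD6", 3000)
```

Returning the full list of violations, rather than stopping at the first one, lets the agent explain to a junior rep or a customer exactly why a configuration was rejected.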

Automotive: Why the Prediction Layer Fails Without Clean Foundations

Fleet management companies deal with data scattered across telematics systems, maintenance histories, shop management software, and driver reports. Nothing talks to anything else. Before we could deploy diagnostic agents that predict repairs and route drivers to specific shops, we had to clean the base data layer—normalize inputs, resolve conflicts between systems, establish a single source of truth.

This is the part most AI vendors skip. They want to build the flashy prediction layer on top of garbage data. In real teams, that approach breaks within weeks because the model’s outputs are only as reliable as the inputs underneath. Everyone knows the principle, but it bears repeating: clean the foundation first, then automate the coordination. And agents themselves can help clean the foundation, which turns the data remediation from a one-time project into an ongoing, self-correcting process.
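For a sense of what “resolve conflicts between systems” can mean in code, here is a minimal sketch. It assumes each system reports observations about a vehicle and that sources can be ranked by trust. The source names, the priority order, and the field names are assumptions for the example, and a real fleet would likely need per-field trust rules.

```python
from datetime import datetime

# Hypothetical trust order: a lower number wins when sources disagree.
SOURCE_PRIORITY = {"shop_software": 0, "telematics": 1, "driver_report": 2}


def is_better(new: dict, current: dict) -> bool:
    """Prefer the more trusted source; within one source, prefer the newer reading."""
    new_rank = SOURCE_PRIORITY[new["source"]]
    current_rank = SOURCE_PRIORITY[current["source"]]
    if new_rank != current_rank:
        return new_rank < current_rank
    return new["at"] > current["at"]


def resolve(observations):
    """Collapse conflicting observations into one value per (vehicle, field)."""
    best = {}
    for obs in observations:
        key = (obs["vehicle_id"], obs["field"])
        if key not in best or is_better(obs, best[key]):
            best[key] = obs
    return {k: {"value": o["value"], "source": o["source"]} for k, o in best.items()}


# Invented sample data.
observations = [
    {"vehicle_id": "V1", "field": "last_oil_change", "value": "2024-01-10",
     "source": "driver_report", "at": datetime(2024, 2, 1)},
    {"vehicle_id": "V1", "field": "last_oil_change", "value": "2024-01-12",
     "source": "shop_software", "at": datetime(2024, 1, 12)},
    {"vehicle_id": "V1", "field": "odometer_km", "value": 120500,
     "source": "telematics", "at": datetime(2024, 2, 1)},
    {"vehicle_id": "V1", "field": "odometer_km", "value": 120900,
     "source": "telematics", "at": datetime(2024, 2, 3)},
]

truth = resolve(observations)
```

Keeping the winning source alongside each value means the diagnostic layer, or a person auditing it, can see where a number came from.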

Three Ways Tribal Knowledge Encoding Breaks in Practice

The first and most common failure is trying to encode knowledge without the domain expert in the room. Engineering teams attempt to reverse-engineer what the expert knows by reading documentation or interviewing stakeholders secondhand. In practice, this produces agents that handle the easy 80% of cases but break on the edge cases that matter most—the exact situations where the expert’s judgment is irreplaceable. The fix is structural: the domain expert has to be a participant in the encoding process, not a source you consult once and move on. Our approach puts them in a continuous feedback loop with the agent, refining its behavior iteratively until it handles their real workflow, not a sanitized version of it.

The second failure is deploying agents on top of dirty or fragmented data. This is especially lethal in manufacturing and fleet management, where data arrives from dozens of sources with no canonical schema. The agent dutifully processes garbage inputs and produces garbage outputs—but now with the false confidence of an automated system. Companies that skip the data normalization step end up with agents that create more problems than they solve, because downstream decisions are being made on unreliable information. The automotive example above is instructive: we had to build the data layer before the diagnostic agents could work. There’s no shortcut.

The third failure is over-scoping the initial deployment. Companies get excited about what agents could do and try to automate six workflows simultaneously. Every one of those workflows has its own edge cases, data requirements, and stakeholder dynamics. In real teams, this creates a project that’s permanently 80% done across all six workstreams and 100% done on none of them. The beachhead strategy exists specifically to prevent this: solve one workflow completely, prove the ROI, then expand. Specificity isn’t a constraint on ambition. It’s the prerequisite for it.

One Boring Problem, Solved Completely, Then Expand

A recurring pattern across every vertical we work in: don’t try to solve everything at once. Find one specific, often unglamorous problem and solve it completely. We call this the Normandy approach—storm one beach, secure it, then expand.

The micro-workflow becomes the entry point. Map Excel columns for a manufacturer. Extract deal terms for a law firm. Automate quoting for a distributor. Each of these is a small, solvable problem with clear ROI. Once the client sees it working, they inevitably want more: integrations, custom development, scaling the system to adjacent workflows. The beachhead creates a natural expansion path for both the technology and the client relationship.

This strategy also solves the positioning problem. “We build AI agents” means nothing to a buyer. “We built a system that lets a junior sales rep quote like a 20-year veteran, and it’s live in production at three distributors” means everything. Specificity is the entire game in both product development and go-to-market.

Agents as a Legacy Integration Layer (Not a Rewrite)

There’s a secondary play here that compounds over time. Every one of these industries runs on legacy systems—ERPs, mainframes, homegrown databases that nobody wants to touch, like the last Jenga piece holding up the entire structure. The traditional modernization approach is expensive, risky, and slow: rewrite everything from scratch, migrate the data, and hope nothing breaks.

We use agents as an integration layer instead. Rather than rewriting the legacy system, we deploy agents that understand how it works. They analyze the codebase, write integration code, generate tests, and bridge the old system to new APIs. What used to take months of engineering time now takes days. The legacy system stays running while new capabilities layer on top. This isn’t a replacement strategy—it’s an augmentation strategy. And it dramatically reduces the risk that keeps most companies stuck on systems they built in 2006.
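The integration code involved is often small and unexciting. As a hedged example of the kind of adapter and accompanying test such a workflow might produce, the sketch below parses a fixed-width export line from a legacy inventory system into a dictionary that a modern API could serve. The record layout, field names, and sample line are hypothetical.

```python
# Hypothetical fixed-width layout of a legacy inventory export: (field, start, end).
LAYOUT = [("sku", 0, 8), ("description", 8, 28), ("on_hand", 28, 34)]


def parse_record(line: str) -> dict:
    """Adapter: turn one fixed-width legacy line into a dict for a modern API."""
    record = {name: line[start:end].strip() for name, start, end in LAYOUT}
    record["on_hand"] = int(record["on_hand"])  # quantities are stored as padded text
    return record


def test_parse_record():
    """A regression test of the kind that would ship alongside the adapter."""
    line = "H-100".ljust(8) + "Hydraulic hose 1/2in".ljust(20) + "42".rjust(6)
    assert parse_record(line) == {
        "sku": "H-100",
        "description": "Hydraulic hose 1/2in",
        "on_hand": 42,
    }


test_parse_record()
sample = parse_record("F-QD8".ljust(8) + "Quick-disconnect".ljust(20) + "7".rjust(6))
```

The legacy system is untouched here. The adapter only reads what it already emits, which is what keeps this approach lower risk than a rewrite.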

The Knowledge You Encode Is the Only IP You Keep

Infrastructure commoditizes. Models improve on someone else’s roadmap. The thing that doesn’t commoditize is the encoded judgment of the people who actually run your business. A competitor can license the same LLM and rent the same cloud infrastructure. They can’t replicate 22 years of knowing which hose assembly configuration will fail inspection, or which M&A clause patterns signal regulatory risk.

The companies that will own their AI advantage are the ones encoding tribal knowledge into systems they control—systems where the client owns the IP, where the domain logic lives in their environment, and where the agent gets smarter as their experts refine it. We build it so you can own it. That’s the design principle, and it’s the only approach that survives the next five years of model churn without leaving you dependent on a vendor you can’t leave.

Sitting on Expertise That Isn’t Scaling?

Spiral Scout has been building production software for 16 years. We encode domain expertise into AI agent systems that work reliably in regulated, high-stakes environments. If you’re sitting on tribal knowledge that isn’t scaling – or you’ve tried the horizontal AI approach and it went nowhere – we should compare notes.

We’ll map your highest-leverage opportunity in a single session