BEFORE ANYONE WROTE A LINE OF CODE, WE MAPPED THE SYSTEM

Market Segmentation

Workflow Decomposition

System Architecture


About the Project

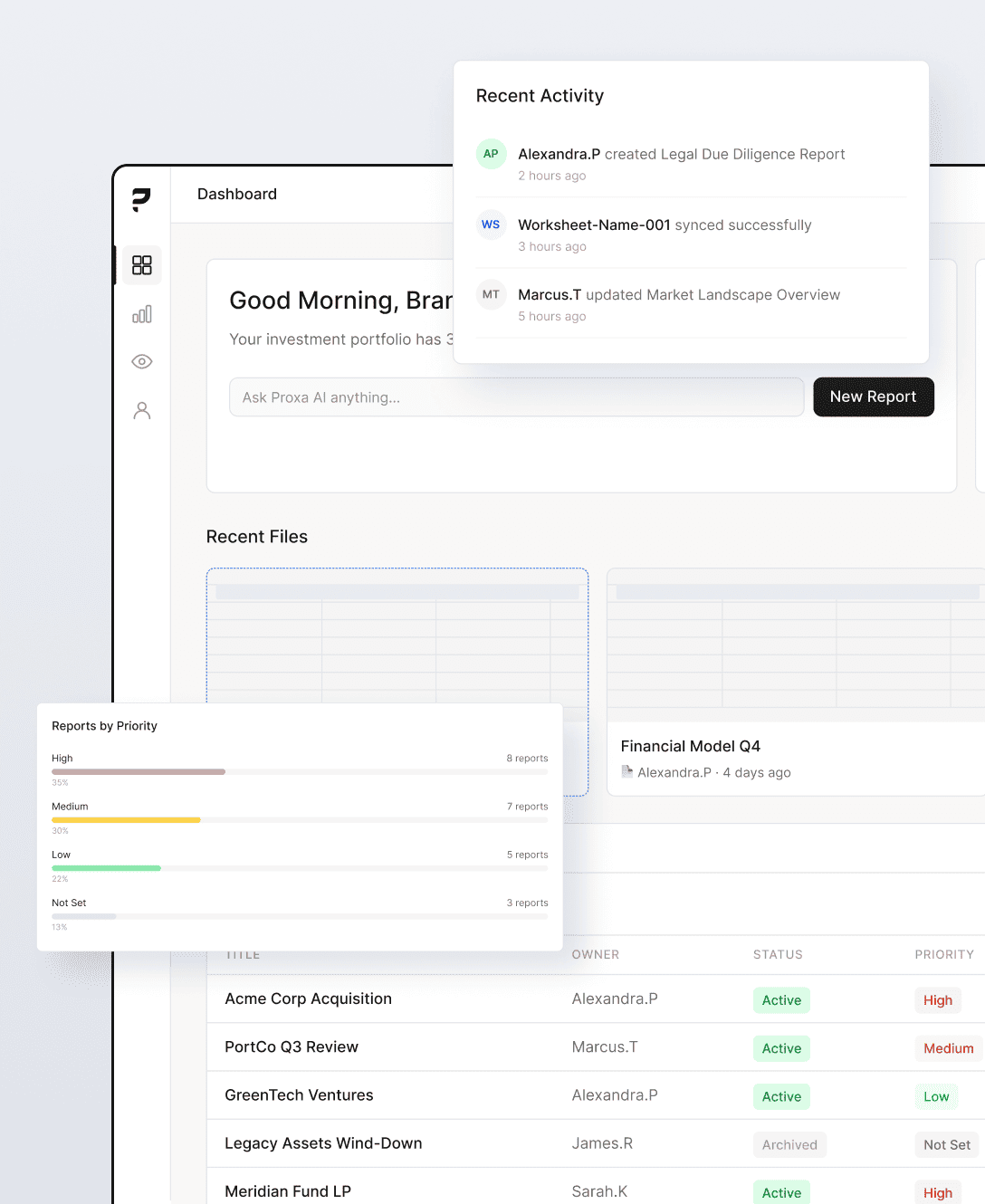

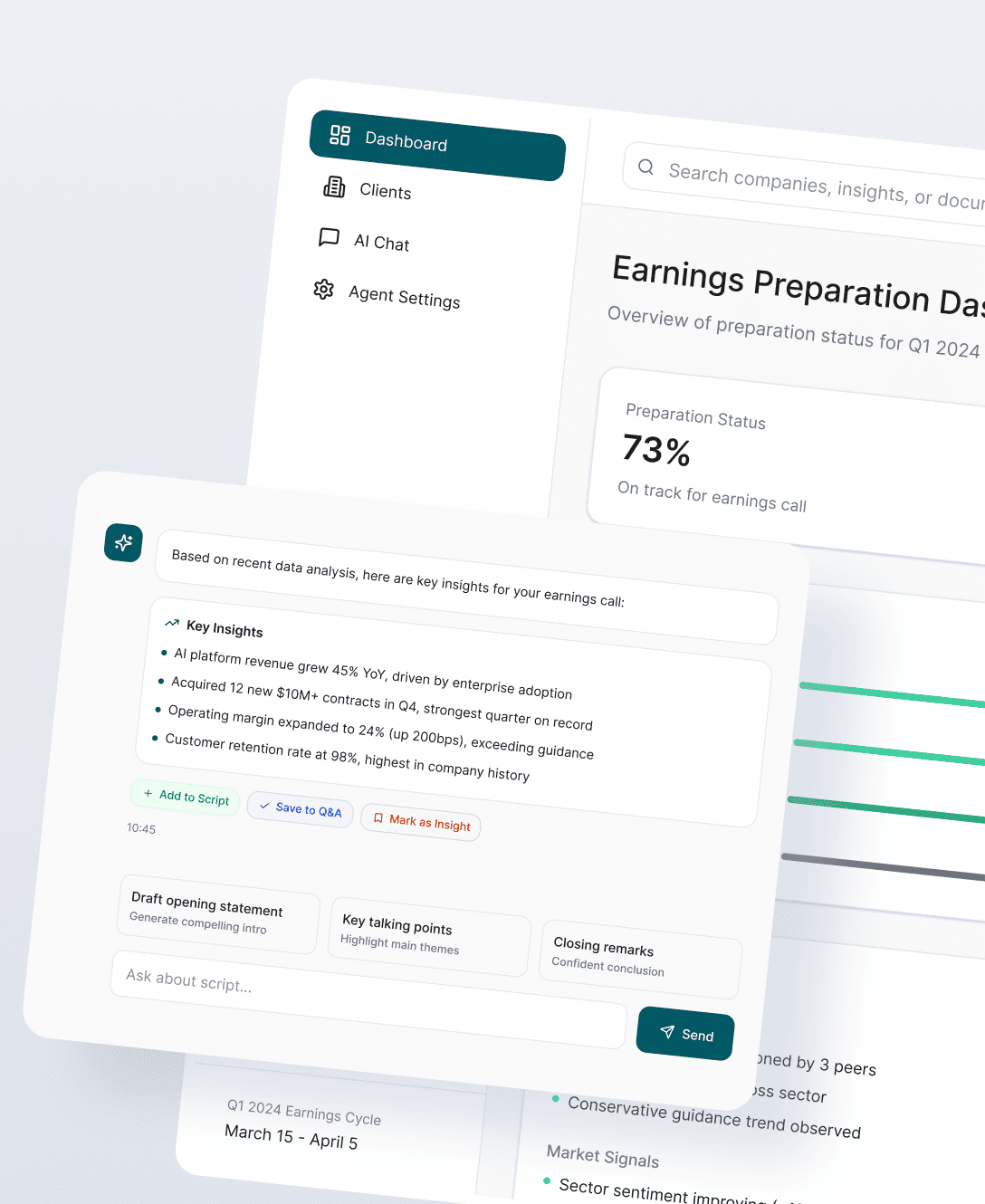

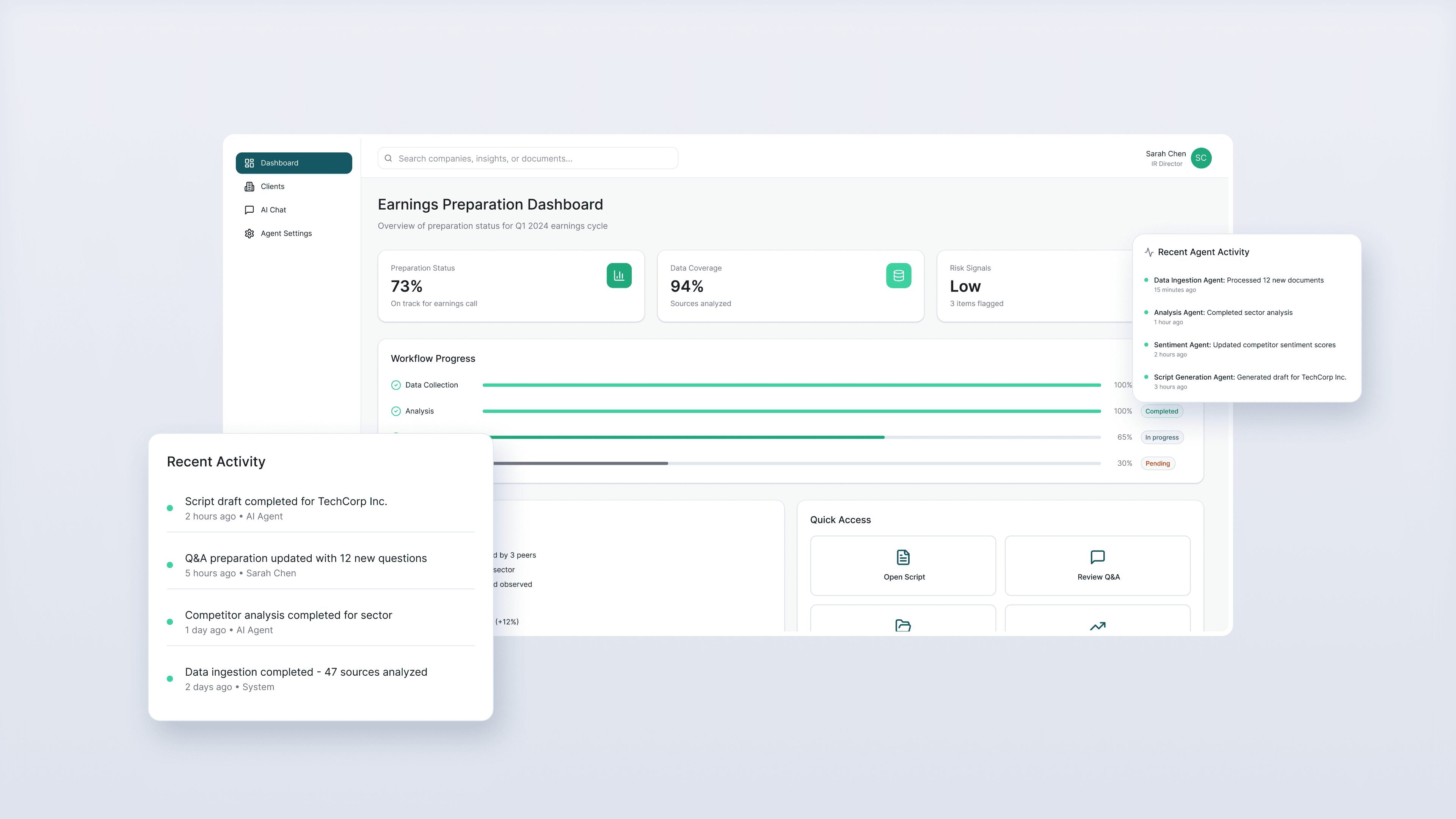

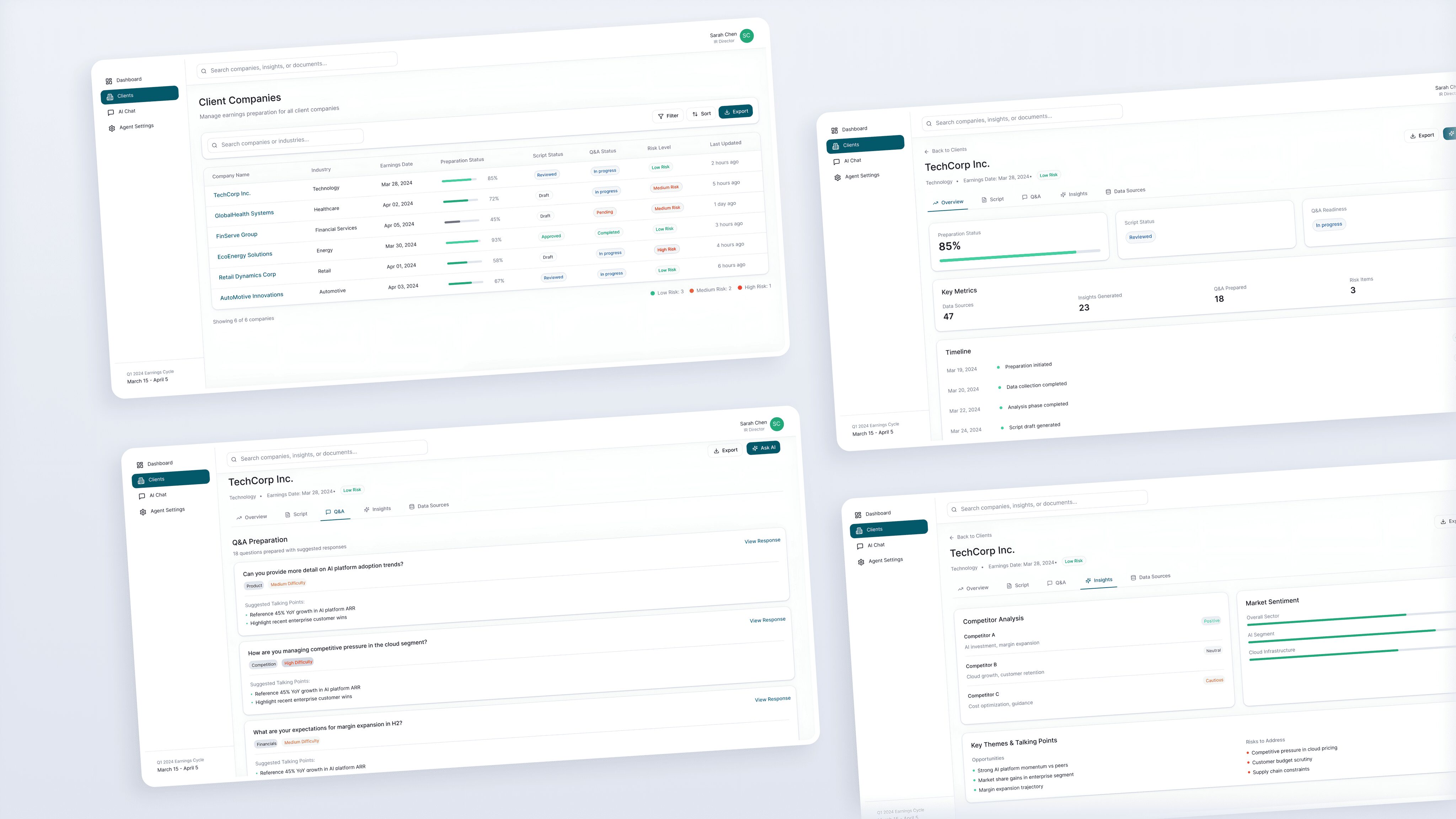

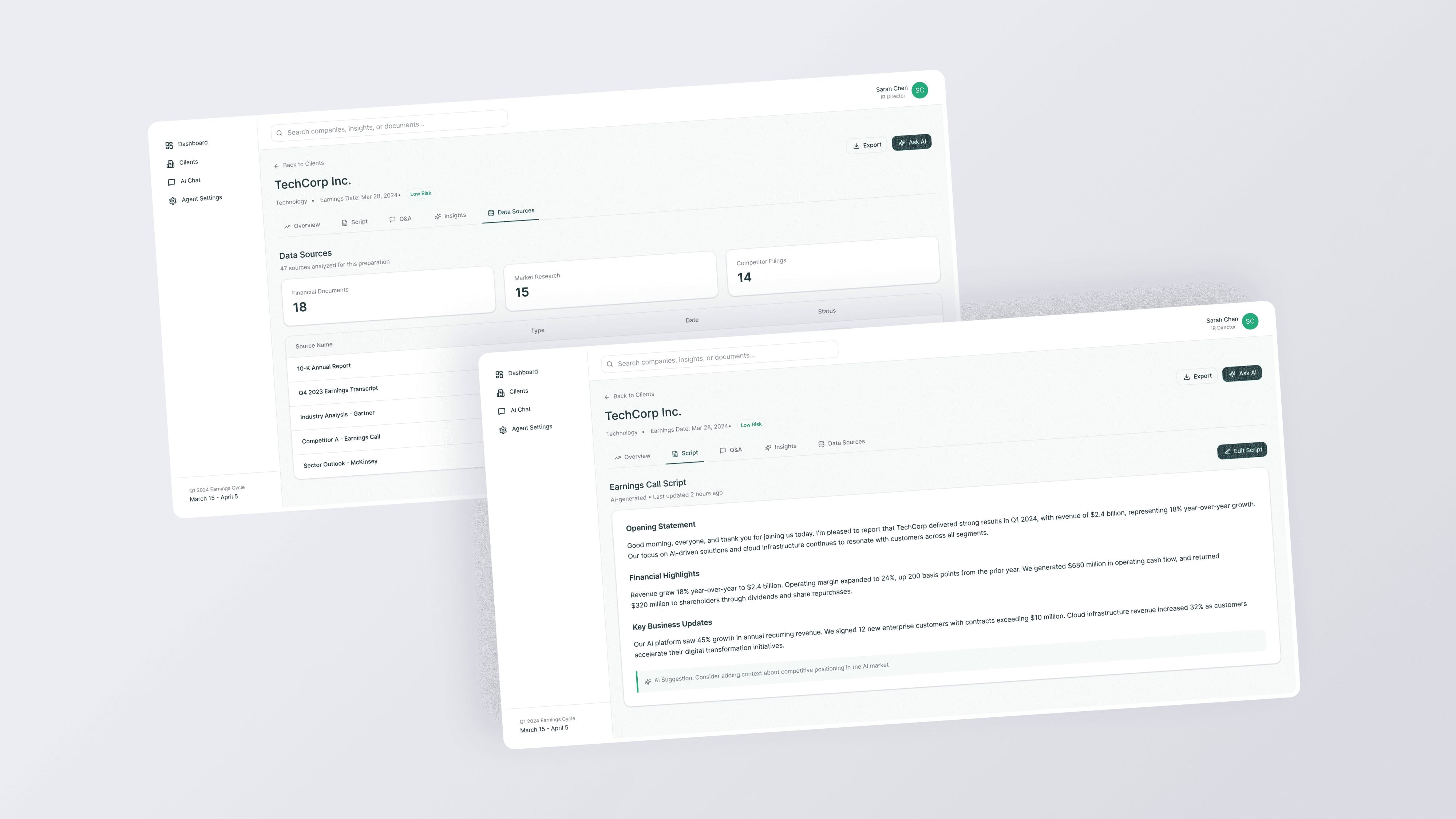

An early-stage product team came to us with a clear frustration and an early vision: there had to be a better way to prepare executives for earnings calls. The existing workflow was a mess of manual steps, such as pulling competitor transcripts, tagging Q&A segments, hunting for sentiment-driving language, and assembling briefing documents the night before. All of it was done by hand, under deadline, by people whose time is expensive.

Our engagement wasn’t a product build. It was structured discovery at a systems level. We treated the client’s vision as a workflow orchestration problem and set out to learn three things: where the manual effort actually lived, what it cost in time and decision quality, and what a system architecture would look like that could gradually automate it without removing executive control or introducing compliance risk. The goal was to arrive at Phase One with a foundation rather than a guess, and to position the client to launch specialized AI agents in the future.

OBJECTIVES

- Reconstruct the end-to-end earnings call preparation workflow as it actually operates today, including the informal steps most tools don’t account for

- Pinpoint the specific handoffs where manual effort creates delay, inconsistency, or poor decisions

- Evaluate the investor relations (IR) software landscape not by feature set but by workflow ownership

- Define a credible system direction for an AI-assisted knowledge base

- Establish architectural guardrails for future agent-based automation in a high-trust, regulated domain

Challenges

Solutions

The real workflow wasn’t documented

Earnings call preparation looks simple from the outside: prep a script, anticipate questions, review the numbers. In practice it’s a multi-day process involving five or more stakeholders, three to six data sources, and a series of judgment calls that live entirely in people’s heads. No single document described how this work actually happened. Before we could design anything, we had to reconstruct the workflow from scratch using stakeholder interviews, timeline analysis, and reverse-engineering from outputs.

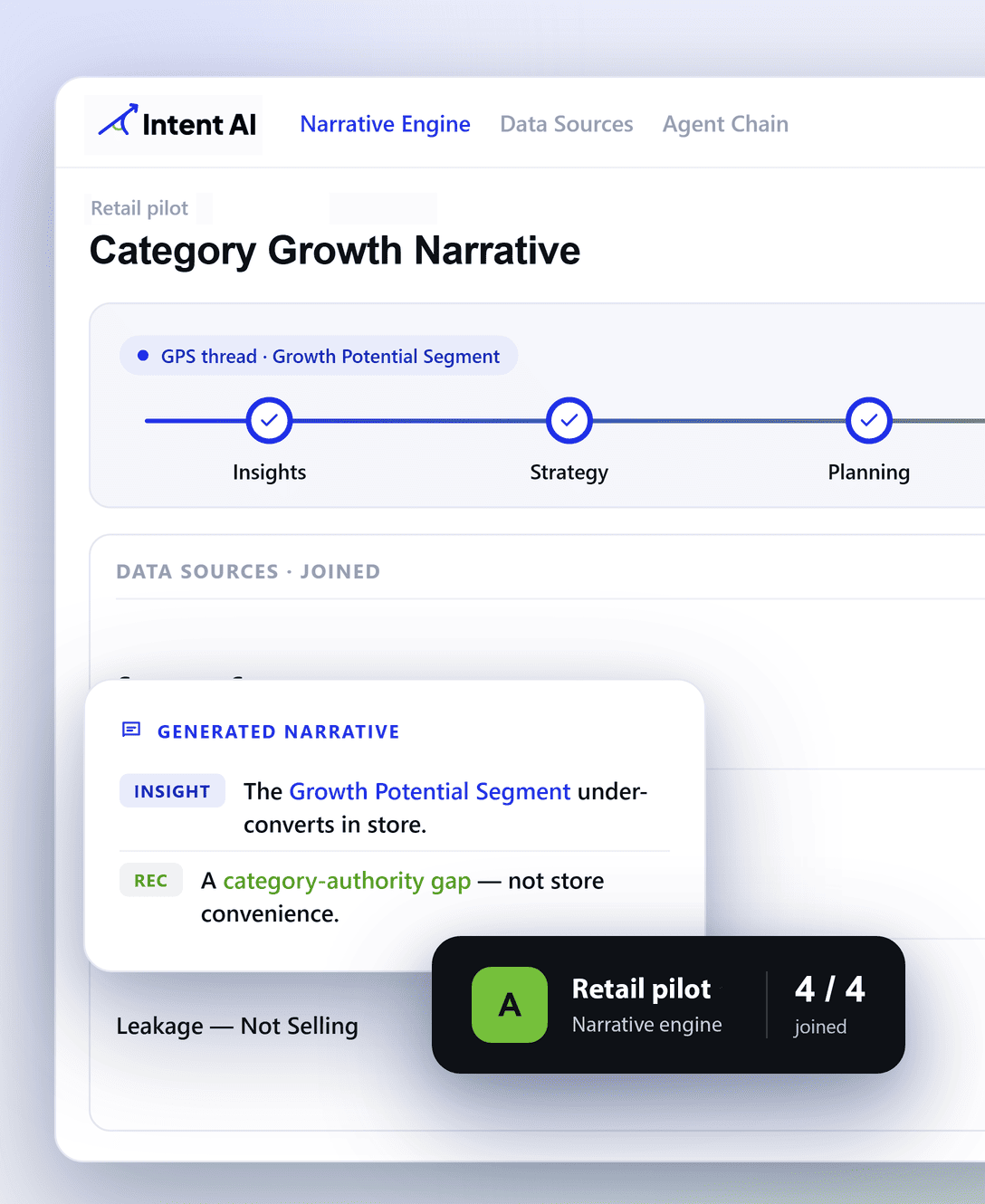

Mapping by workflow ownership

We built a workflow decomposition map that broke the preparation process into discrete stages (data ingestion, competitive extraction, theme summarization, sentiment tagging, script drafting, and Q&A simulation), with handoff points and time costs attached to each. This became the foundation for every architecture decision that followed.

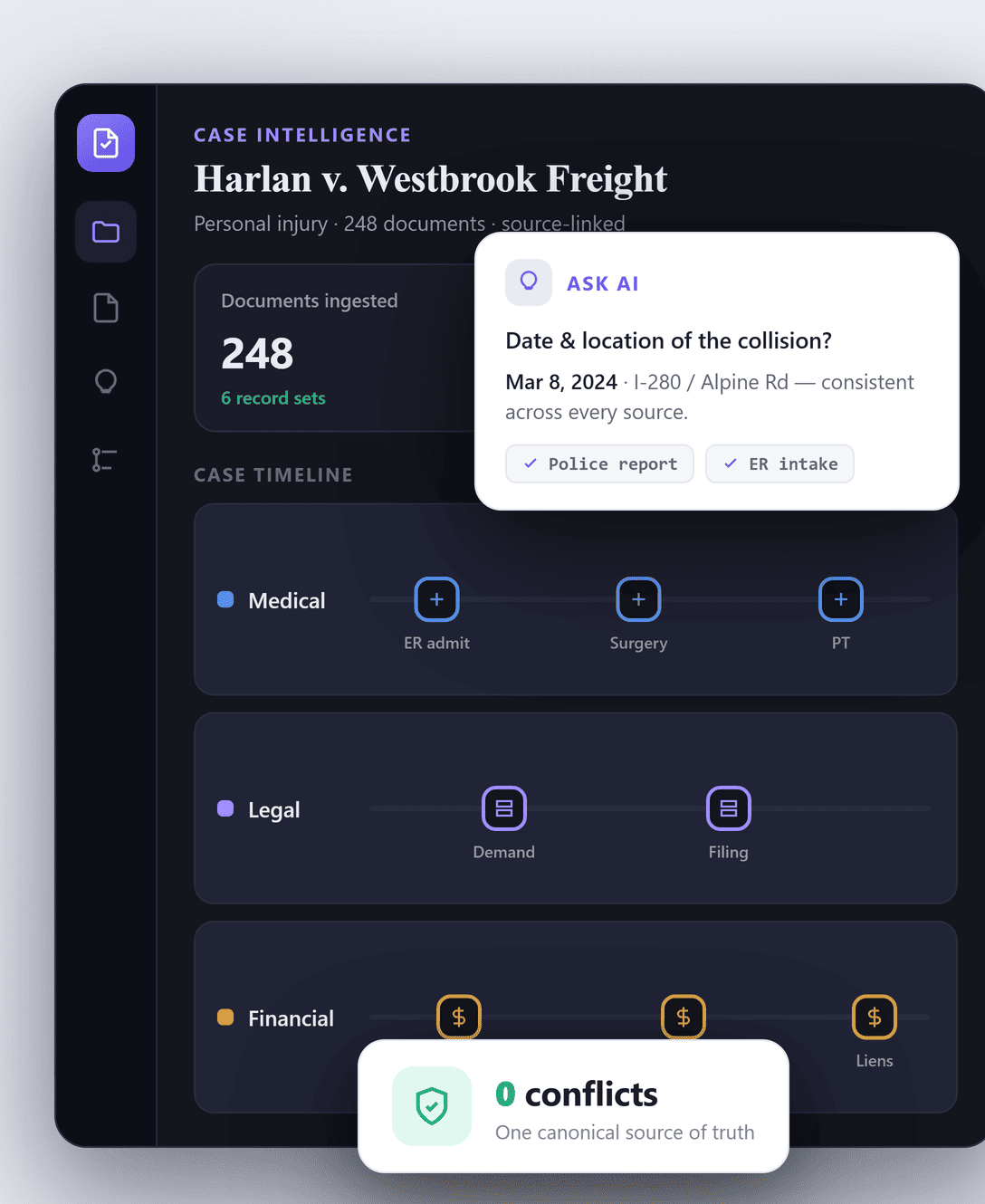

Trust constraints limit where AI can operate

Investor relations is a high-stakes, low-error-tolerance domain. A hallucinated statistic in a briefing document, an incorrectly attributed competitor quote, or an AI-generated response that misreads analyst sentiment could damage executive credibility or create disclosure risk. The client understood this. The question wasn’t whether to use AI; it was how to use it in a way that executives would actually trust and adopt.

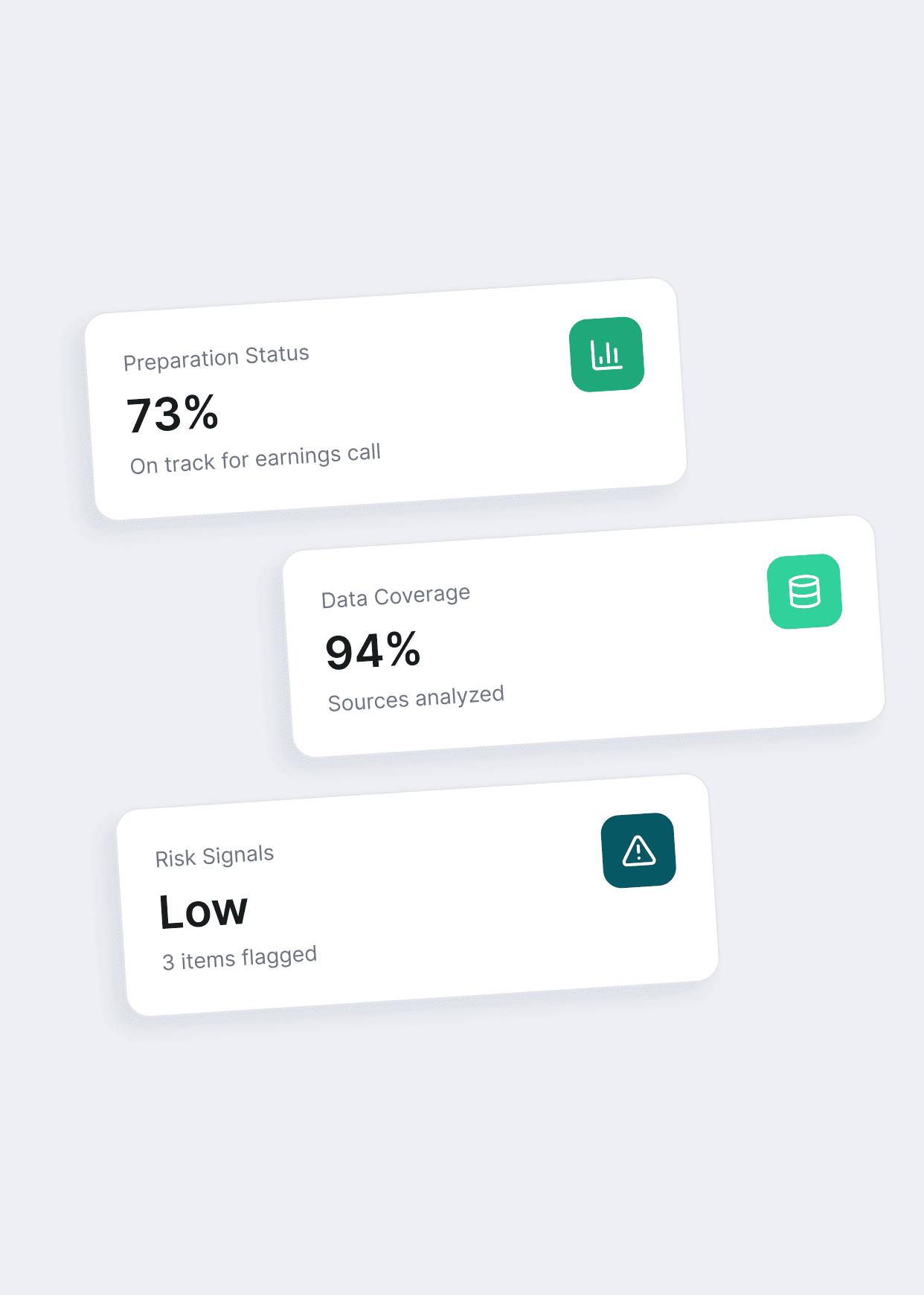

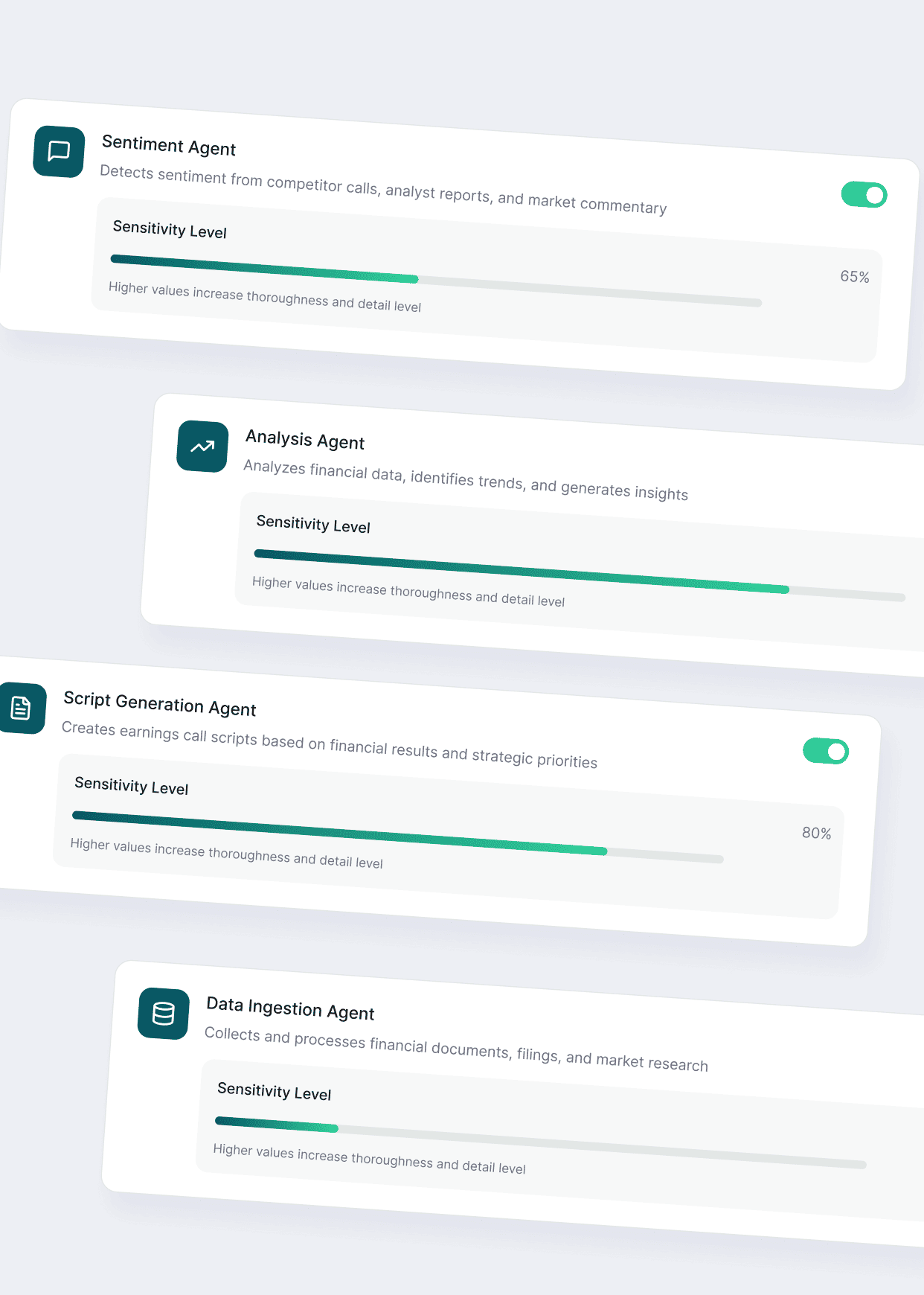

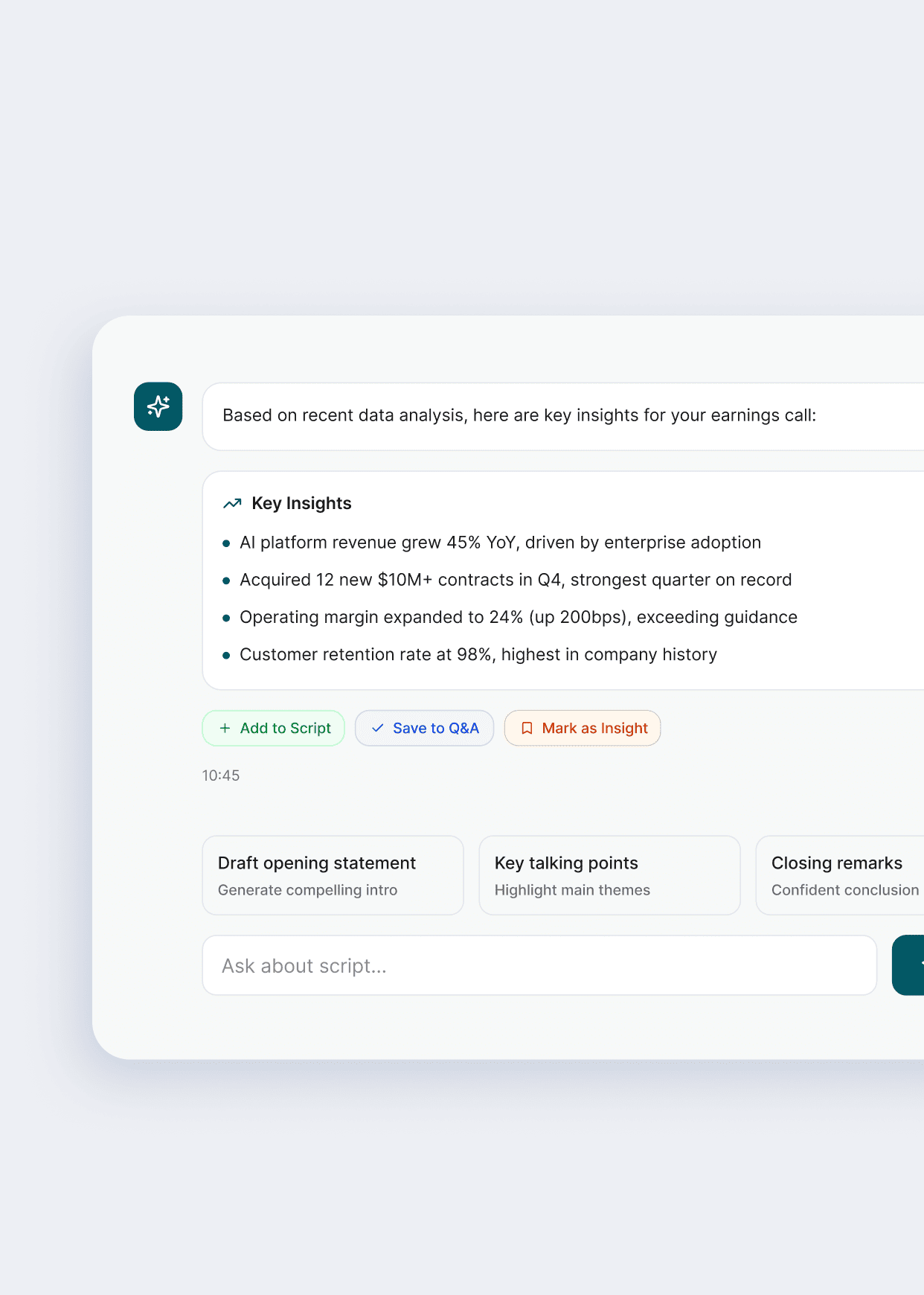

Built trust from day one

Rather than designing for maximum automation, we established an AI operations boundary for each workflow stage. Some tasks, such as transcript ingestion, segment extraction, and theme tagging, are strong candidates for full automation with audit trails. Others, such as final script review, analyst tone interpretation, and live Q&A framing, require human judgment and call for AI in an assistive role only. We codified these boundaries in the architecture spec so they could be enforced at the engineering level, not just as policy.
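One way to enforce stage boundaries in code rather than in policy is to give every stage an explicit operating mode and refuse to release assistive-stage output without a named human approver. This is a minimal sketch under our own assumptions, not the client’s spec: the stage names mirror the examples above, while `Mode`, `STAGE_MODES`, `StageOutput`, `AuditLog`, `BoundaryViolation`, and `release` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import List, Optional, Tuple


class Mode(Enum):
    AUTOMATED = "automated"  # may run unattended; every release is logged
    ASSISTIVE = "assistive"  # AI drafts only; a named human must approve


# Hypothetical boundary table. Stage names mirror the examples in the text.
STAGE_MODES = {
    "transcript_ingestion": Mode.AUTOMATED,
    "segment_extraction": Mode.AUTOMATED,
    "theme_tagging": Mode.AUTOMATED,
    "final_script_review": Mode.ASSISTIVE,
    "analyst_tone_interpretation": Mode.ASSISTIVE,
    "live_qa_framing": Mode.ASSISTIVE,
}


@dataclass
class StageOutput:
    stage: str
    content: str
    approved_by: Optional[str] = None  # set only by a human reviewer


@dataclass
class AuditLog:
    # Each entry: (UTC timestamp, stage, action, actor)
    entries: List[Tuple[str, str, str, str]] = field(default_factory=list)

    def record(self, stage: str, action: str, actor: str) -> None:
        timestamp = datetime.now(timezone.utc).isoformat()
        self.entries.append((timestamp, stage, action, actor))


class BoundaryViolation(Exception):
    """Raised when assistive-stage output is released without sign-off."""


def release(output: StageOutput, audit: AuditLog) -> str:
    # An unknown stage raises KeyError instead of silently defaulting to automation.
    mode = STAGE_MODES[output.stage]
    if mode is Mode.ASSISTIVE and not output.approved_by:
        audit.record(output.stage, "blocked", "system")
        raise BoundaryViolation(f"{output.stage} requires human approval")
    audit.record(output.stage, "released", output.approved_by or "system")
    return output.content
```

In this sketch, `theme_tagging` output passes straight through but still leaves an audit entry, while `final_script_review` output raises `BoundaryViolation` until `approved_by` is set. Keeping the table explicit, with no default mode, means a newly added stage cannot end up automated by accident.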

The competitive landscape was hard to read

There are 40+ tools in the IR software space, but most of them compete on features rather than workflow ownership. Evaluating them on a feature-by-feature basis was misleading. The real question was: which stage of the preparation workflow does each tool control, and where does every tool leave a gap that a new entrant could actually own?

Defining the architectural North Star

We reframed the competitive analysis around workflow stages rather than product categories. This surfaced clear whitespace: no tool meaningfully owns pre-call preparation as an active, intelligent workflow. Most IR platforms are archival or publishing tools with some analytics bolted on. The gap wasn’t in post-event analysis; it was in the 72 hours before an earnings call, which is exactly where the client’s platform had the strongest defensible position.

High-stakes hallucination risks

Early enthusiasm for generative AI risked pushing autonomous drafting into a context where inaccuracies, tone drift, or hallucinations would be unacceptable.

A knowledge-first foundation

We anchored the system around a structured knowledge base aggregating transcripts, extracted Q&A, and sentiment-signaling language. AI was positioned as an assistive layer that queries, summarizes, and drafts only against verified source material.

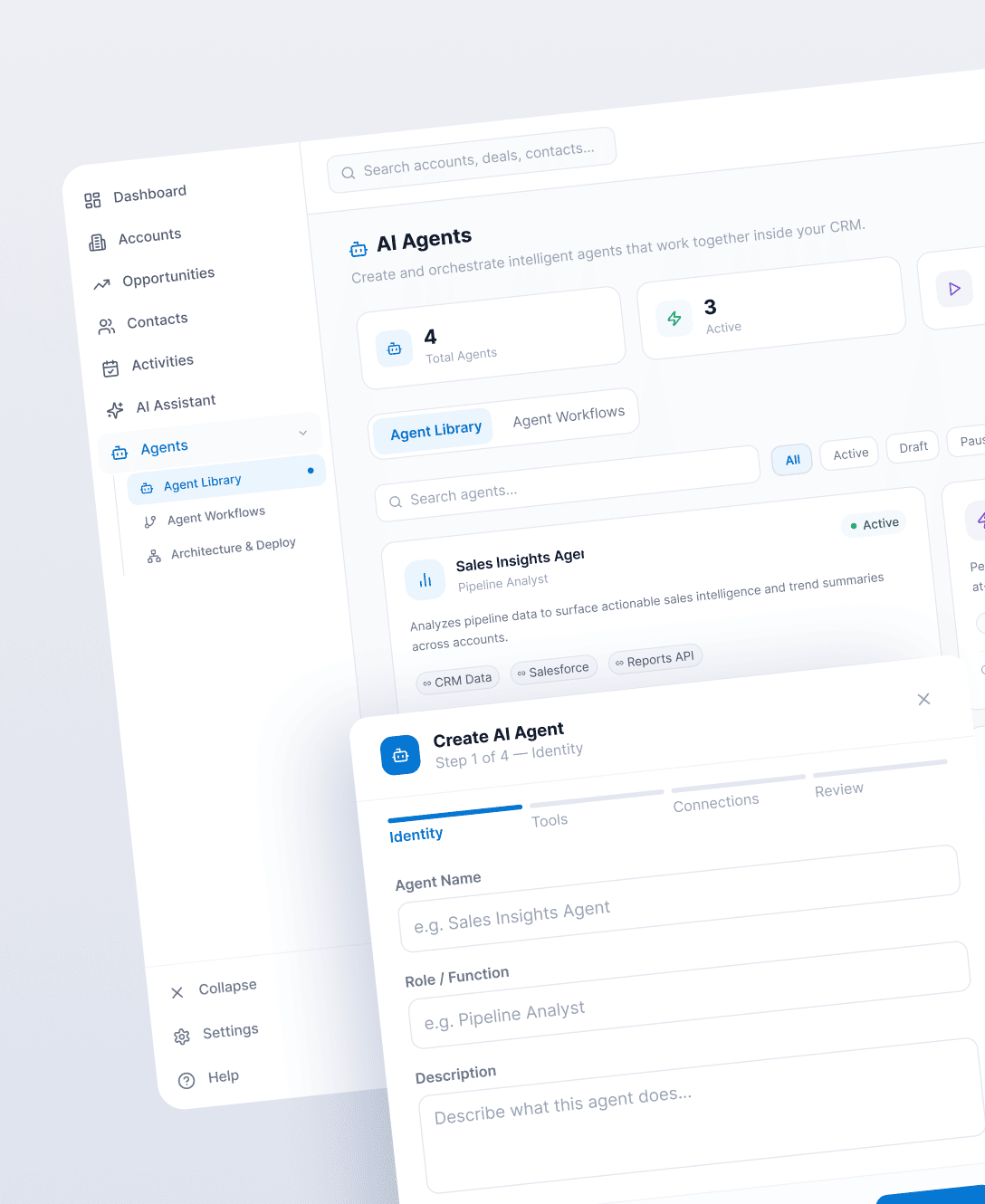

Overlapping AI capabilities

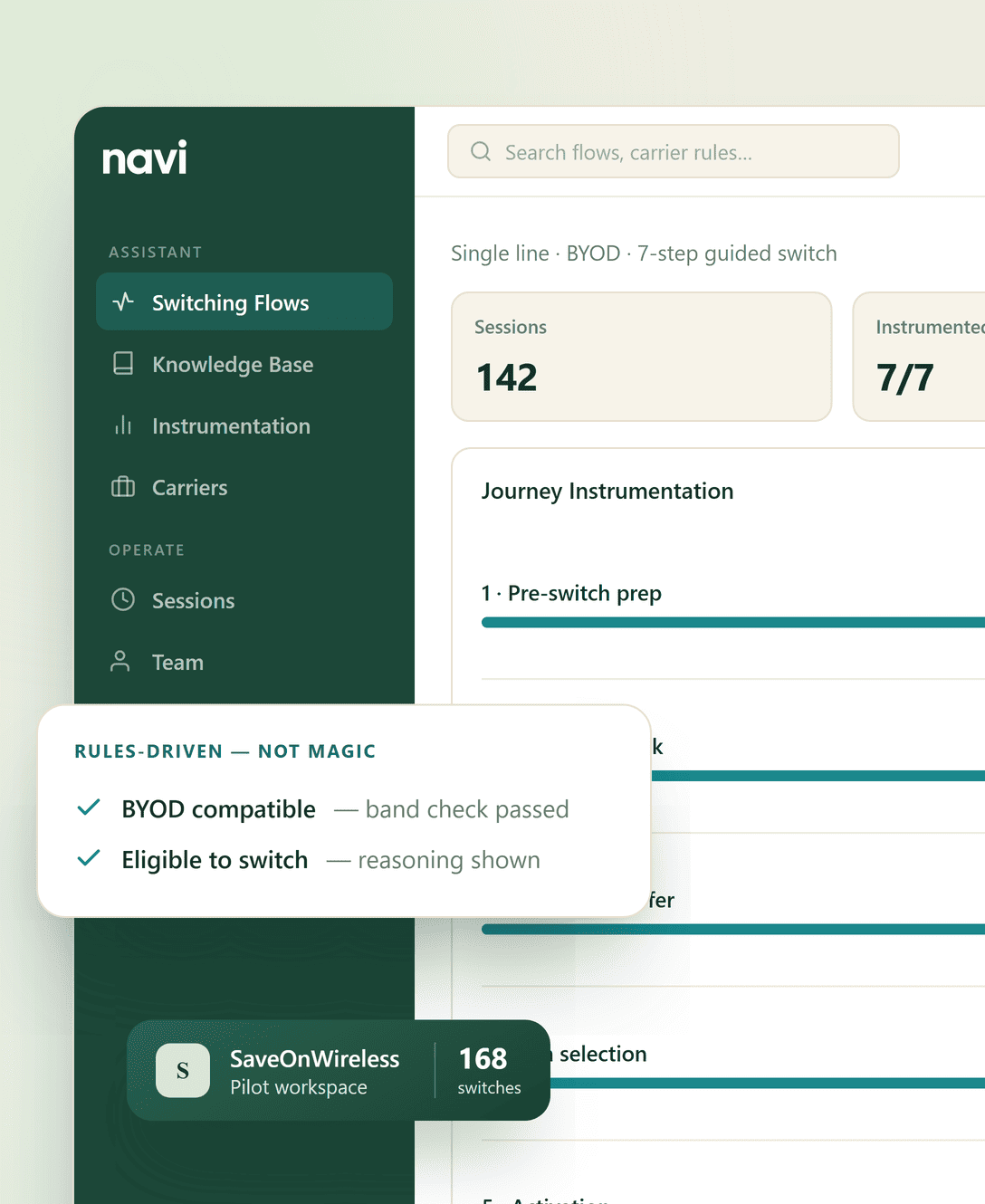

The vision included multiple AI capabilities, but without clear boundaries, overlapping agent behavior would compound errors.

Establishing strict agent boundaries

Discovery work defined future agent roles (ingestion, extraction, script drafting, and sentiment analysis) with clean interfaces and handoff points, so the agents can collaborate without a loss of reliability.

Our Approach

Our approach during discovery centered on one constraint: AI that executives can’t trust during an earnings call is worse than no AI at all. Every recommendation we made balanced technical possibility against regulatory sensitivity, reputational exposure, and realistic adoption inside high-pressure workflows. The objective wasn’t to show what AI could do. It was to define where it should start.

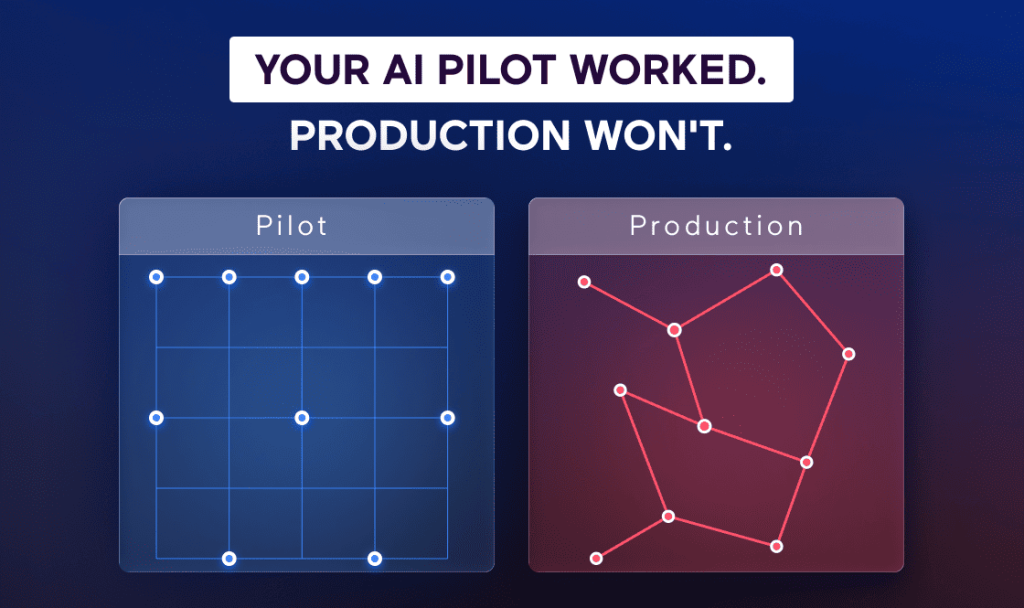

Start Where the Stakes Are Highest

We scoped the initial architecture to earnings calls specifically, not because it’s the only IR workflow that matters, but because it’s the one with the clearest preparation bottleneck, the highest stakes if something goes wrong, and the most measurable manual workload to automate. Solving this one workflow well earns the trust needed to expand. Solving it poorly closes the door on everything else.

Design for Extension, Not Exhaustion

The temptation with early-stage AI products is to design for every future use case at once and ship nothing. We resisted that. The architecture we outlined starts with a single pipeline: ingest, extract, summarize, surface. Each stage is isolated. Adding a Q&A simulation agent, a competitor tracking agent, or a sentiment scoring layer later doesn’t require touching what was already built. The foundation scales without being rebuilt.
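The "isolated stages" idea can be sketched by treating each stage as a plain function from a document dict to a new dict, so stages share a data contract but never call one another. This is a minimal illustration under our own assumptions: the stage bodies are toy placeholders (a trailing `?` marks a question, a keyword list stands in for sentiment), and `run_pipeline`, `BASE_PIPELINE`, `EXTENDED_PIPELINE`, and `score_sentiment` are hypothetical names. The point is only that a later layer is added by composing a new list, not by editing existing stages.

```python
from typing import Any, Callable, Dict, List

Doc = Dict[str, Any]
Stage = Callable[[Doc], Doc]


def ingest(doc: Doc) -> Doc:
    # Toy ingestion: split a raw transcript into non-empty, trimmed lines.
    lines = [ln.strip() for ln in doc["raw"].splitlines() if ln.strip()]
    return {**doc, "lines": lines}


def extract(doc: Doc) -> Doc:
    # Toy extraction: treat any line ending in "?" as an analyst question.
    return {**doc, "questions": [ln for ln in doc["lines"] if ln.endswith("?")]}


def summarize(doc: Doc) -> Doc:
    # Placeholder; a real stage might call a model against verified sources.
    return {**doc, "summary": f"{len(doc['questions'])} analyst questions"}


def surface(doc: Doc) -> Doc:
    # Assemble what the executive sees: the summary, then the questions.
    return {**doc, "briefing": [doc["summary"], *doc["questions"]]}


def run_pipeline(doc: Doc, stages: List[Stage]) -> Doc:
    # Stages never call each other; each sees only the dict it is handed.
    for stage in stages:
        doc = stage(doc)
    return doc


BASE_PIPELINE: List[Stage] = [ingest, extract, summarize, surface]


def score_sentiment(doc: Doc) -> Doc:
    # Added later without touching the stages above (toy keyword heuristic).
    negative = ("decline", "headwind", "miss")
    flagged = [q for q in doc["questions"] if any(w in q.lower() for w in negative)]
    return {**doc, "flagged": flagged}


# Extension is a new composition, slotted in right after extraction.
EXTENDED_PIPELINE: List[Stage] = BASE_PIPELINE[:2] + [score_sentiment] + BASE_PIPELINE[2:]
```

Because every stage returns a new dict instead of mutating its input, the base pipeline keeps producing exactly what it did before the sentiment layer existed, which is the property the text describes as scaling without being rebuilt.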

Preserve Executive Control

Every architectural decision was evaluated through one question: can the executive using this system understand what they’re looking at and override it if they disagree? AI-generated briefings need source attribution. Sentiment scores need to show the language they were derived from. Q&A suggestions need to be editable, not just selectable. These aren’t nice-to-haves in a regulated, high-visibility domain; they’re the difference between a tool executives adopt and one that sits unused because nobody trusts the output.
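Two of these requirements, attribution and editability, can be made mechanical. The sketch below assumes each AI-generated claim carries the ID of a verified source plus a verbatim excerpt, and rejects any claim whose excerpt does not appear in that source; an override replaces the AI draft and flags the change. `Claim`, `Suggestion`, `verify_claims`, and `override` are hypothetical names, and exact substring matching is a deliberately strict stand-in for whatever grounding check a production system would use. The same evidence field could carry the phrases behind a sentiment score.

```python
from dataclasses import dataclass, replace
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Claim:
    text: str       # statement shown to the executive
    source_id: str  # hypothetical ID of a verified source document
    evidence: str   # verbatim excerpt the claim is derived from


@dataclass(frozen=True)
class Suggestion:
    question: str
    answer: str
    edited: bool = False  # True once a human has changed the AI draft


def verify_claims(
    claims: List[Claim], sources: Dict[str, str]
) -> Tuple[List[Claim], List[Claim]]:
    """Split claims into (attributed, rejected) by checking each excerpt verbatim."""
    attributed: List[Claim] = []
    rejected: List[Claim] = []
    for claim in claims:
        source_text = sources.get(claim.source_id, "")
        if claim.evidence and claim.evidence in source_text:
            attributed.append(claim)
        else:
            rejected.append(claim)  # unknown source or unsupported excerpt
    return attributed, rejected


def override(suggestion: Suggestion, new_answer: str) -> Suggestion:
    # Return a new record so the original AI draft stays available for review.
    return replace(suggestion, answer=new_answer, edited=True)
```

Rejected claims are returned rather than silently dropped, so a reviewer can see what the model asserted without support.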

Project results

This engagement did not culminate in a shipped product, and that was intentional. What exists now is more durable:

- Validated Market Positioning: A stage-by-stage breakdown of the IR software landscape, mapped against workflow ownership rather than product categories. The client now knows exactly where they’re entering the market, who they’ll displace, and why feature parity isn’t the game they need to play.

- Operational Gap Analysis: A documented reconstruction of the earnings call preparation workflow, with time costs, error surfaces, and handoff failures identified at each stage. This isn’t a market opportunity slide. It’s the input a product team actually needs to scope Phase One.

- Architecture Direction: A system design that separates automation candidates from human-judgment requirements at the workflow level, built to support modular agent expansion without core rewrites. Defensible to investors, legible to engineers, adoptable by executives.

- Phase One Blueprint: A scoped, risk-ordered build plan the client can take directly into development, with clear success criteria for each component and explicit guardrails around the AI trust boundary.


The client now has a north star built with a full understanding of the domain. That’s harder to achieve than it sounds, and it’s worth more than a prototype that ships fast and pivots constantly.

Key Takeaways

- Market discovery is a systems problem. The market opportunity isn’t in the gap between features. It’s in the gap between workflow stages no one currently owns.

- AI value comes from owning a workflow, not from having the best model. Which stage of the process you control matters more than which LLM you’re calling.

- In high-stakes domains, assistive AI earns adoption. Autonomous AI earns rejection. Sequence matters.

- Architectural clarity before Phase One development isn’t a luxury. It’s the cheapest risk mitigation you can buy.

If you’re building AI into a workflow where the cost of a bad output is a boardroom mistake, a compliance exposure, or an executive who stops trusting the tool, the system needs to be designed before it’s built. We do that work. If you’re in a similar stage, start there.

Start with System Thinking, Not AI Features
