Designing Agentic Control Systems for Data and Financial Readiness at CarAdvise

Canonical Agent States · Read-Only Validation · Human Oversight

About the Project

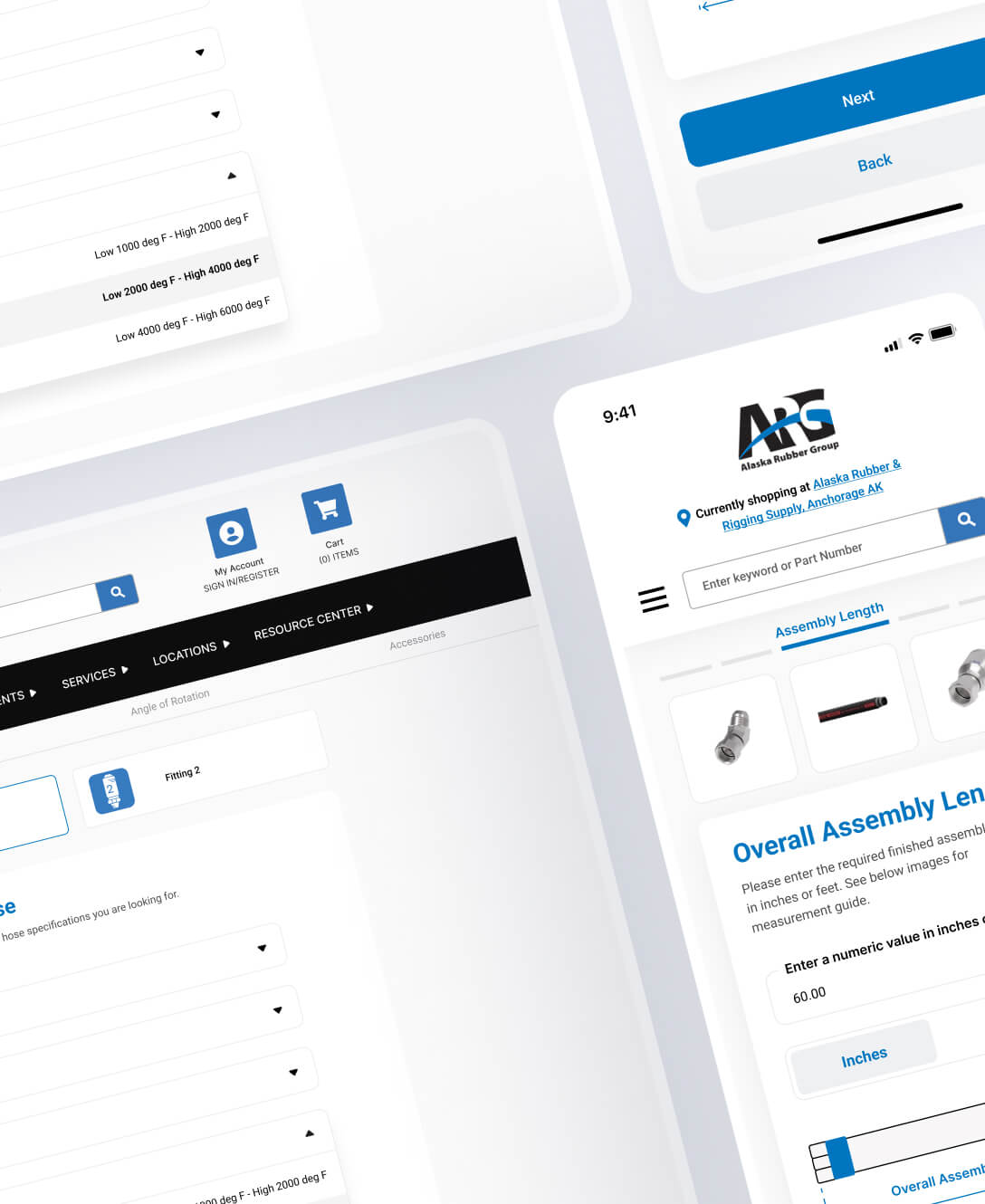

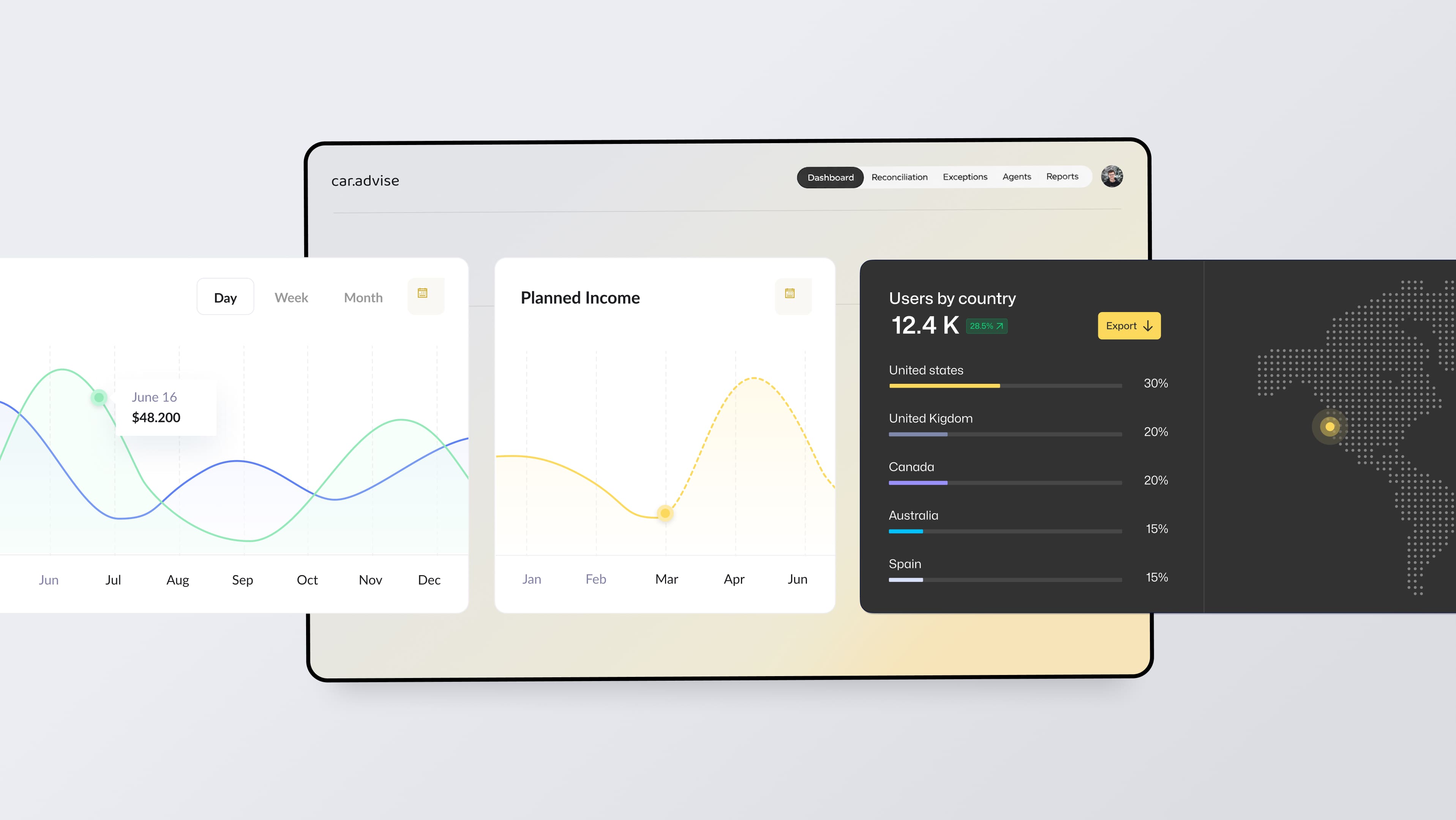

CarAdvise operates a transaction-heavy automotive marketplace where pricing accuracy, reconciliation, and payouts depend on data flowing correctly across multiple systems. As the business scaled, small data defects increasingly caused downstream issues – Ops escalations, Finance delays, and manual reconciliation work that relied heavily on tribal knowledge.

CarAdvise leadership and engineering recognized that adding more automation or “smart features” would not solve the root problem. The system lacked clear control points and canonical definitions for when data, orders, and payments were actually trustworthy.

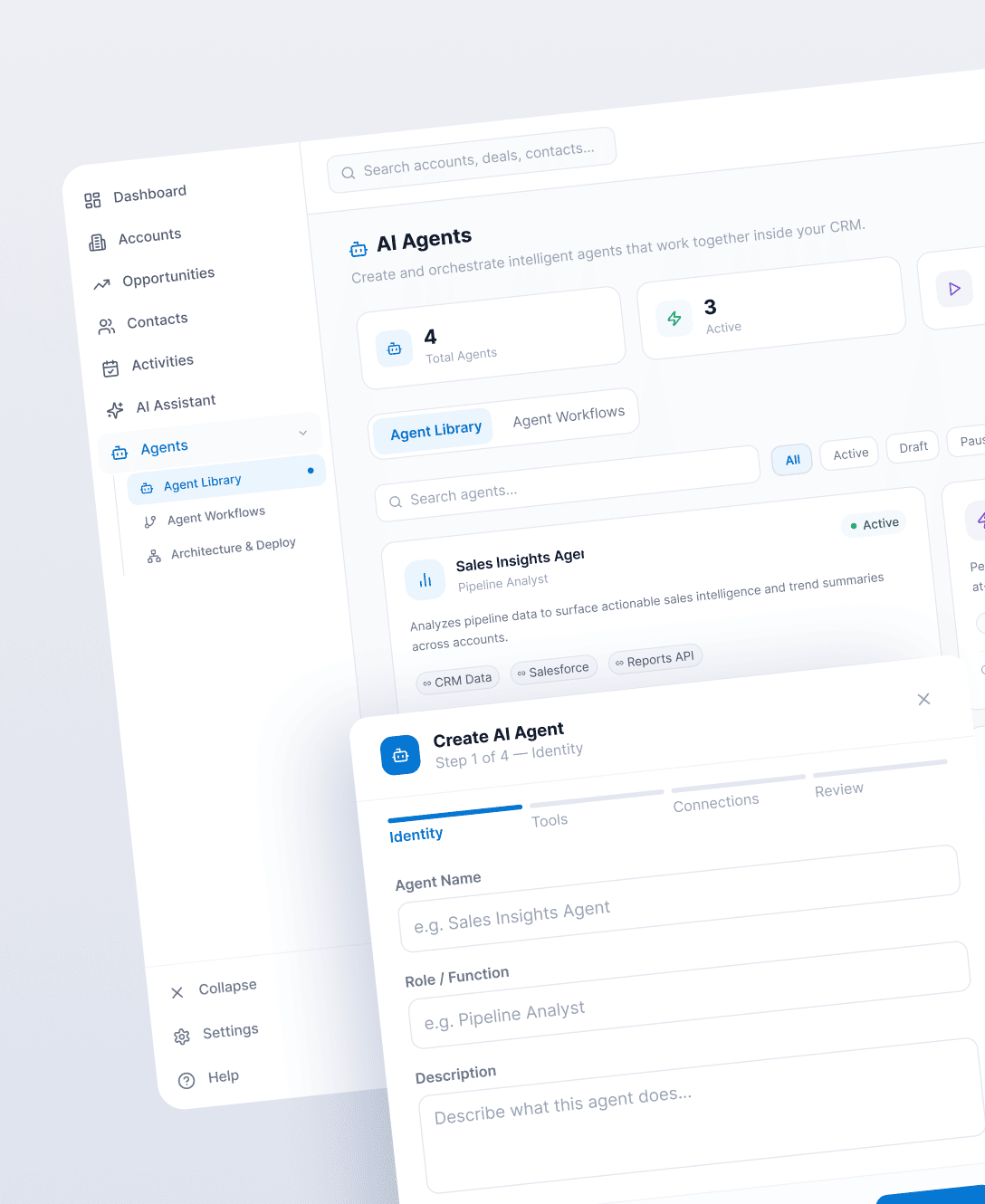

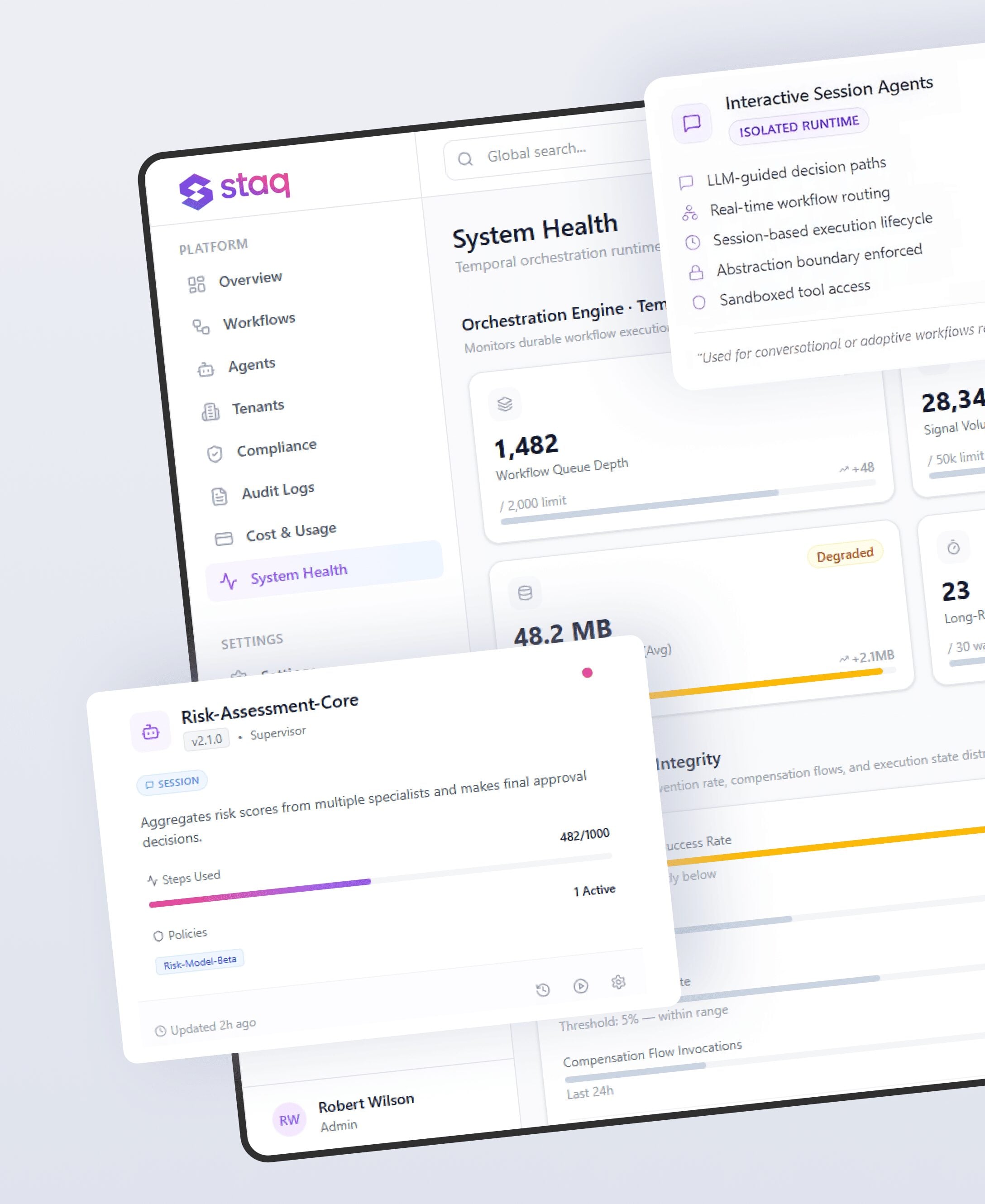

Spiral Scout was engaged to lead a discovery and pilot-design effort focused on defining how AI agents could operate safely inside these workflows without introducing financial or operational risk. The work spanned multi-tenant, long-running workflows, auditability, permissioning, failure recovery, and integration with CarAdvise's live enterprise systems.

This work centered on architecture, constraints, and decision-making, not feature delivery.

Objectives

- Identify where data quality failures originate and how they propagate

- Define canonical system states for completion, settlement, and payout readiness

- Reduce reliance on manual Ops and Finance judgment

- Establish guardrails for introducing AI into financial workflows

- Create a reusable agent pattern that future builds can rely on

Challenges and Solutions

Data Risk Was Invisible Until It Became Expensive

Invalid VINs, missing vehicle classification, orphaned services, and pricing inconsistencies routinely flowed through the system undetected. These issues were typically discovered only during reconciliation or partner disputes – when resolution was slow and costly.

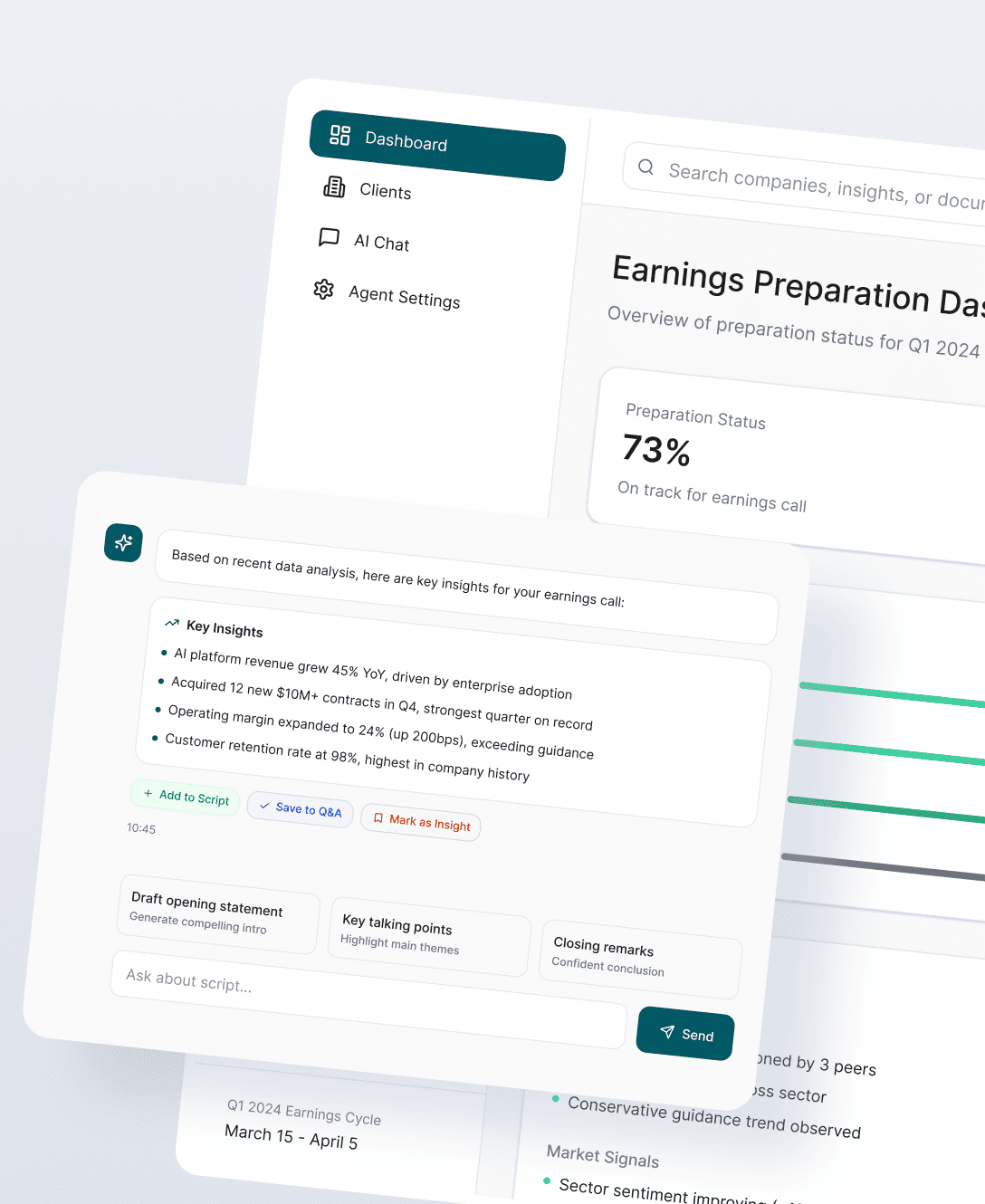

Data Health Agent (Pilot Design)

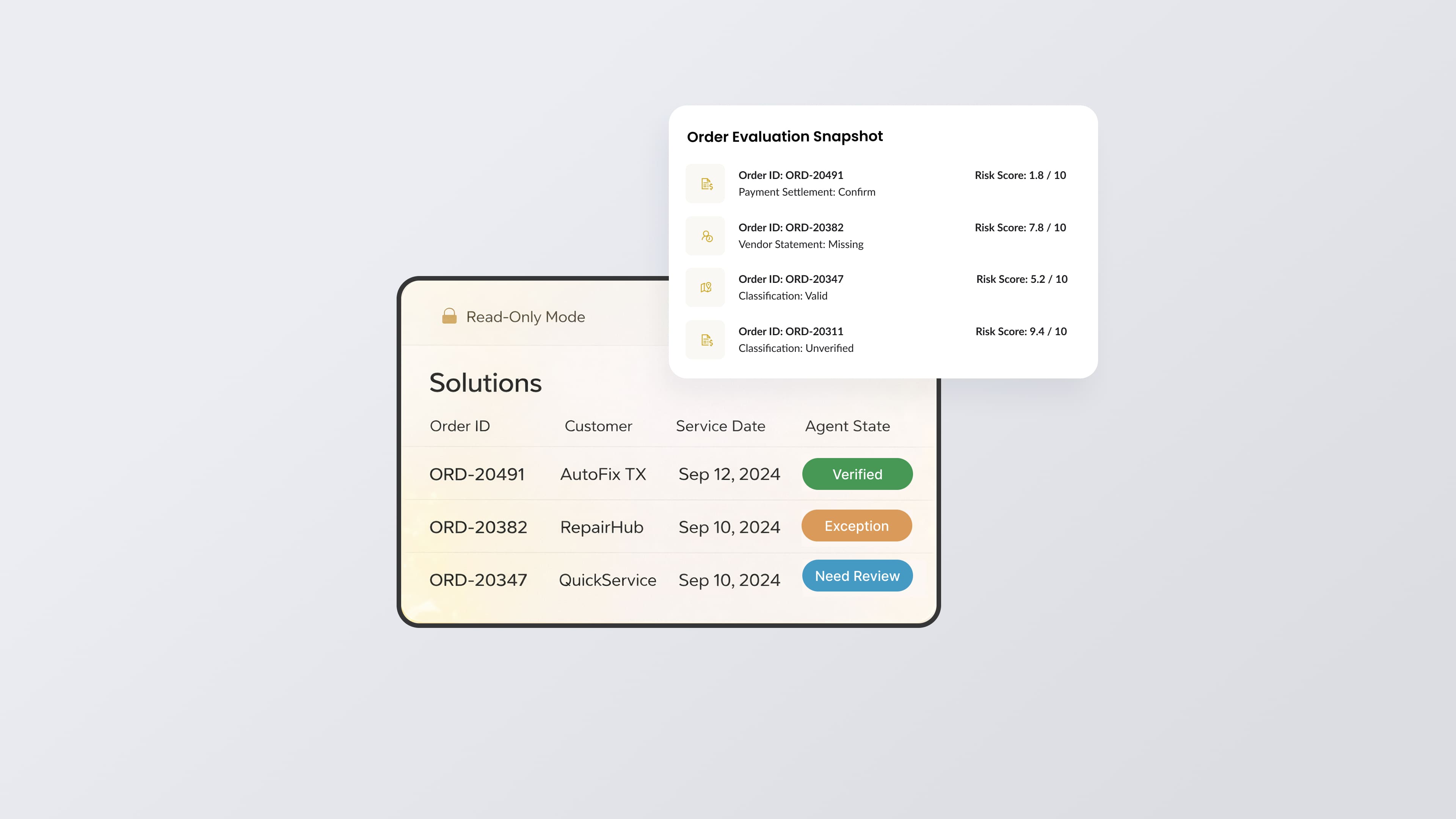

We designed a read-only Data Health Agent concept that continuously evaluates vehicles and orders using event-driven triggers and scheduled scans. The agent does not fix data; it detects anomalies, classifies business risk, and routes structured context to Ops early.

This reframed data quality as an ongoing control problem, not a cleanup task.
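The case study describes the Data Health Agent's behavior rather than its code. The Python sketch below illustrates the detect, classify, and route steps under stated assumptions: vehicle and service records are plain dictionaries with hypothetical `id`, `vin`, `classification`, and `order_id` fields; the standard North American VIN check digit stands in for one possible "invalid VIN" rule; and the risk levels and check names are illustrative, not CarAdvise's actual taxonomy. Every function only reads its inputs and returns findings.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Iterable, List, Mapping

# Transliteration values and position weights for the North American
# VIN check digit (position 9). Letters I, O and Q are never valid.
_VALUES = {str(d): d for d in range(10)}
_VALUES.update({
    "A": 1, "B": 2, "C": 3, "D": 4, "E": 5, "F": 6, "G": 7, "H": 8,
    "J": 1, "K": 2, "L": 3, "M": 4, "N": 5, "P": 7, "R": 9,
    "S": 2, "T": 3, "U": 4, "V": 5, "W": 6, "X": 7, "Y": 8, "Z": 9,
})
_WEIGHTS = (8, 7, 6, 5, 4, 3, 2, 10, 0, 9, 8, 7, 6, 5, 4, 3, 2)


def vin_is_valid(vin: str) -> bool:
    """Check length, alphabet and check digit of a 17-character VIN."""
    vin = vin.strip().upper()
    if len(vin) != 17 or any(ch not in _VALUES for ch in vin):
        return False
    remainder = sum(_VALUES[ch] * w for ch, w in zip(vin, _WEIGHTS)) % 11
    return vin[8] == ("X" if remainder == 10 else str(remainder))


class Risk(Enum):  # illustrative business-risk levels
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class Finding:
    record_id: str
    check: str
    risk: Risk
    detail: str


def scan_vehicle(vehicle: Mapping[str, object]) -> List[Finding]:
    """Read-only: inspect one vehicle record and report findings; never edit it."""
    rid = str(vehicle.get("id"))
    findings = []
    vin = vehicle.get("vin")
    if not isinstance(vin, str) or not vin_is_valid(vin):
        findings.append(Finding(rid, "invalid_vin", Risk.HIGH, f"VIN {vin!r} failed validation"))
    if not vehicle.get("classification"):
        findings.append(Finding(rid, "missing_classification", Risk.MEDIUM, "vehicle class missing"))
    return findings


def scan_orphaned_services(services: Iterable[Mapping[str, object]], order_ids: set) -> List[Finding]:
    """Flag service rows whose order_id points at no known order."""
    return [
        Finding(str(s.get("id")), "orphaned_service", Risk.MEDIUM,
                f"unknown order {s.get('order_id')!r}")
        for s in services
        if s.get("order_id") not in order_ids
    ]


def route_to_ops(findings: Iterable[Finding]) -> List[Finding]:
    """Order findings highest-risk first for the Ops review queue (stable sort)."""
    return sorted(findings, key=lambda f: f.risk.value, reverse=True)
```

In this sketch the checks do not depend on what invoked them, so the same `scan_vehicle` call could run from an event handler when a record changes and from a scheduled sweep over all records, matching the two trigger types the design calls for.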

“Completed” Was Not a Reliable System State

Orders could be marked complete internally while still lacking settled payments or matching vendor invoices. Teams relied on experience rather than system logic to determine payout eligibility.

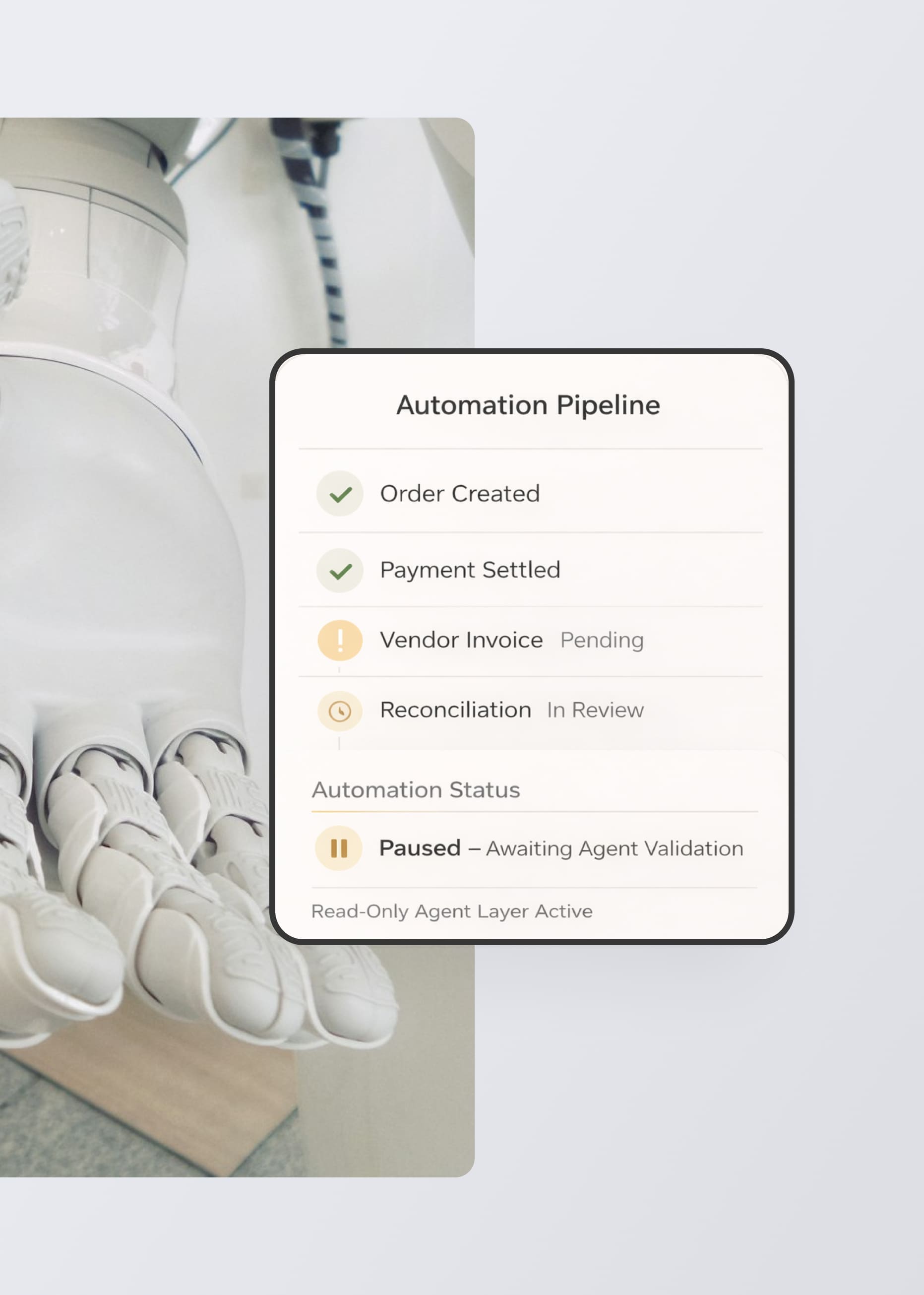

Agent-Defined Reconciliation States

We defined a set of agent-derived reconciliation states computed via three-way matching across internal orders, payment records, and vendor statements. These states live outside the core order models, so they remove ambiguity without the risk of changing the core schema.

This clarified that completed and payout-ready are fundamentally different system states.
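To make the distinction between "completed" and "payout-ready" concrete, here is a minimal Python sketch of a three-way match. The state names, the `Order`/`Payment`/`VendorLine` shapes, the split of an order into a customer total and a vendor cost, and the one-payment-per-order assumption are all hypothetical. States are computed by a pure function and returned in a separate mapping, so the order objects are never changed, mirroring the idea of keeping these states outside the core order models.

```python
from dataclasses import dataclass
from decimal import Decimal
from enum import Enum
from typing import Dict, Iterable, Optional


class ReconState(Enum):  # hypothetical state names
    NOT_COMPLETED = "not_completed"
    AWAITING_SETTLEMENT = "awaiting_settlement"
    AWAITING_VENDOR_INVOICE = "awaiting_vendor_invoice"
    AMOUNT_MISMATCH = "amount_mismatch"
    PAYOUT_READY = "payout_ready"


@dataclass(frozen=True)
class Order:
    order_id: str
    completed: bool
    customer_total: Decimal  # what the customer should have paid
    vendor_cost: Decimal     # what the shop should invoice


@dataclass(frozen=True)
class Payment:
    order_id: str
    amount: Decimal
    settled: bool


@dataclass(frozen=True)
class VendorLine:
    order_id: str
    amount: Decimal


def derive_state(order: Order, payment: Optional[Payment], vendor: Optional[VendorLine],
                 tolerance: Decimal = Decimal("0.00")) -> ReconState:
    """Three-way match: internal order vs payment record vs vendor statement."""
    if not order.completed:
        return ReconState.NOT_COMPLETED
    if payment is None or not payment.settled:
        return ReconState.AWAITING_SETTLEMENT
    if vendor is None:
        return ReconState.AWAITING_VENDOR_INVOICE
    if (abs(payment.amount - order.customer_total) > tolerance
            or abs(vendor.amount - order.vendor_cost) > tolerance):
        return ReconState.AMOUNT_MISMATCH
    return ReconState.PAYOUT_READY


def reconcile(orders: Iterable[Order], payments: Iterable[Payment],
              vendor_lines: Iterable[VendorLine]) -> Dict[str, ReconState]:
    """Return derived states keyed by order_id; Order objects are never modified."""
    pay_by_id = {p.order_id: p for p in payments}  # assumes one payment per order
    vend_by_id = {v.order_id: v for v in vendor_lines}
    return {o.order_id: derive_state(o, pay_by_id.get(o.order_id), vend_by_id.get(o.order_id))
            for o in orders}
```

With this logic, an order that is completed but has no settled payment derives to `AWAITING_SETTLEMENT`, and a settled order whose vendor invoice differs from the expected cost derives to `AMOUNT_MISMATCH`; only a full three-way match yields `PAYOUT_READY`.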

Manual Reconciliation Didn’t Compound Learning

Ops and Finance repeatedly resolved the same categories of reconciliation issues with no systematic way to capture or reuse decisions.

Exception Classification Framework

We designed a structured exception taxonomy that agents can apply consistently. Each failure is classified, contextualized, and routed for human resolution – creating a foundation for future automation without removing human authority.
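One way to make an exception taxonomy reusable is to pair each category with an owning team and keep a history of how humans resolved past cases. This Python sketch assumes hypothetical categories (`MISSING_PAYMENT`, `AMOUNT_MISMATCH`, and so on), a hypothetical category-to-queue map, and in-memory storage where a real deployment would persist records. The agent's role ends at `route`; resolutions come only from the human review workflow.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Mapping


class Category(Enum):  # hypothetical exception categories
    MISSING_PAYMENT = "missing_payment"
    MISSING_VENDOR_INVOICE = "missing_vendor_invoice"
    AMOUNT_MISMATCH = "amount_mismatch"
    DATA_DEFECT = "data_defect"


# Hypothetical ownership map: which human team resolves each category.
QUEUE_FOR: Dict[Category, str] = {
    Category.MISSING_PAYMENT: "finance",
    Category.MISSING_VENDOR_INVOICE: "ops",
    Category.AMOUNT_MISMATCH: "finance",
    Category.DATA_DEFECT: "ops",
}


@dataclass(frozen=True)
class ReconException:
    order_id: str
    category: Category
    context: Mapping[str, str]  # evidence the agent gathered, e.g. compared amounts


@dataclass(frozen=True)
class Resolution:
    order_id: str
    category: Category
    resolved_by: str  # set by the human reviewer; by convention agents never create these
    action: str


class ExceptionDesk:
    """Routes classified exceptions to human queues and remembers their resolutions."""

    def __init__(self) -> None:
        self.queues: Dict[str, List[ReconException]] = defaultdict(list)
        self.history: Dict[Category, List[Resolution]] = defaultdict(list)

    def route(self, exc: ReconException) -> str:
        # The agent's responsibility ends here: classify, attach context, enqueue.
        queue = QUEUE_FOR[exc.category]
        self.queues[queue].append(exc)
        return queue

    def record_resolution(self, res: Resolution) -> None:
        # Called from the human review workflow, not by the agent.
        self.history[res.category].append(res)

    def precedents(self, category: Category) -> List[Resolution]:
        # Prior human decisions for this category, shown alongside new exceptions.
        return list(self.history.get(category, []))
```

Surfacing `precedents` for a category next to a new exception lets an earlier human decision inform the next one without the agent acting on it.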

AI Introduces Risk in Financial Systems

Allowing agents to modify pricing or approve payouts would introduce unacceptable operational and audit risk.

Guardrails by Architecture

All proposed agents are explicitly constrained to read-only access. They validate, classify, and recommend – but never change financial data or approve payouts. Perfect matches can be flagged, but authority remains with Finance.
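The read-only constraint can also be reflected in the interface an agent receives. In this Python sketch (the class names, record fields, and the `flag_as_perfect_match` suggestion are hypothetical), the agent gets a ledger view built from `types.MappingProxyType`, so item assignment raises `TypeError`, and its only output is a frozen `Recommendation` naming Finance as the approver. An in-process wrapper like this illustrates the contract but is not a security boundary; in production the guarantee would more plausibly come from read-only database credentials or API scopes, which the sketch does not model.

```python
from dataclasses import dataclass
from decimal import Decimal
from types import MappingProxyType
from typing import Dict, Mapping, Optional


@dataclass(frozen=True)
class Recommendation:
    order_id: str
    suggestion: str
    approver: str = "finance"  # the agent only suggests; a human in this role decides


class ReadOnlyLedger:
    """Agent-facing view of payout data that exposes reads and nothing else."""

    def __init__(self, records: Dict[str, Dict[str, Decimal]]) -> None:
        # Copy, then wrap both levels in read-only proxies so item assignment fails.
        self._view = MappingProxyType(
            {oid: MappingProxyType(dict(rec)) for oid, rec in records.items()}
        )

    def get(self, order_id: str) -> Mapping[str, Decimal]:
        return self._view[order_id]


def review(ledger: ReadOnlyLedger, order_id: str) -> Optional[Recommendation]:
    """Validate and recommend; never writes data or approves a payout."""
    rec = ledger.get(order_id)
    if (rec["payment"] == rec["customer_total"]
            and rec["vendor_invoice"] == rec["vendor_cost"]):
        return Recommendation(order_id, "flag_as_perfect_match")
    return None
```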

Our Project Strategy

This required production judgment across finance, data modeling, and AI risk – not just ML experimentation.

Govern Before You Automate

Agents were framed as control layers that make systems observable and trustworthy before they become autonomous.

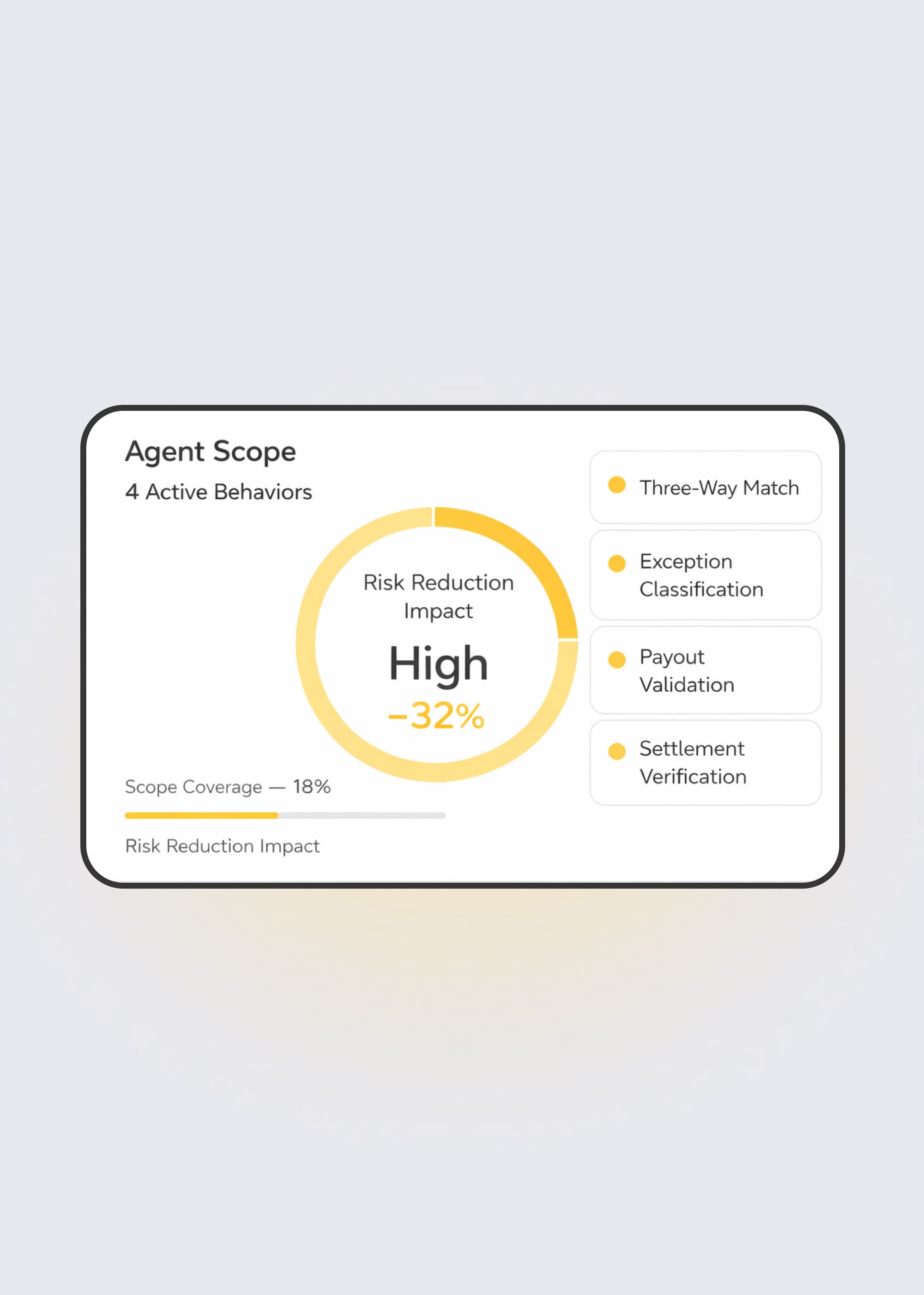

Narrow Scope, High Leverage

Rather than building broad assistants, we focused on the smallest set of agent behaviors that meaningfully reduce risk.

Architecture That Enables Independence

The agent patterns, states, and workflows were designed to be owned and extended by the CarAdvise team – not locked to Spiral Scout.

Current State & Direction

At the end of this phase, CarAdvise had:

- A clearly defined Data Health Agent architecture and scope

- Canonical reconciliation logic separating completion from payout readiness

- Guardrails for safely introducing AI into financial workflows

- A repeatable agentic pattern ready for future pilots and early builds

This work established an architectural north star for moving from manual processes and AI experimentation toward dependable, production-grade systems.

Key Takeaways

- System-level clarity matters more than feature velocity

- Read-only agents can deliver leverage without increasing risk

- Canonical states reduce human ambiguity better than dashboards

- Independence is designed, not promised

These lessons apply to any team introducing AI into systems where money, trust, and operational correctness matter.

Automating high-stakes workflows? Let’s discuss your pilot.
