Building a Reliable, Agent-Driven Production System for Proxa

Agentic Retrieval

Grounded Synthesis

Client Independence

About the Project

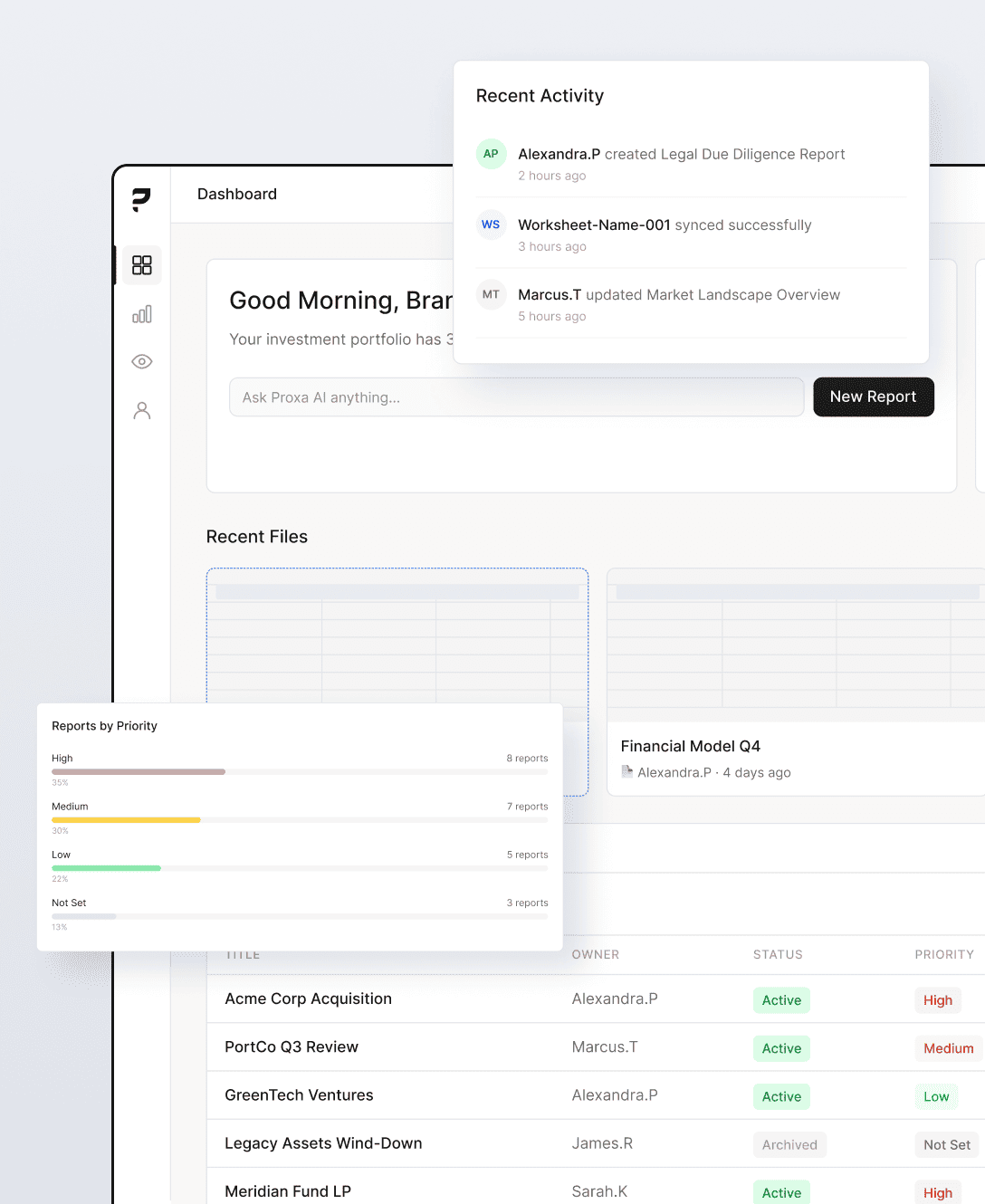

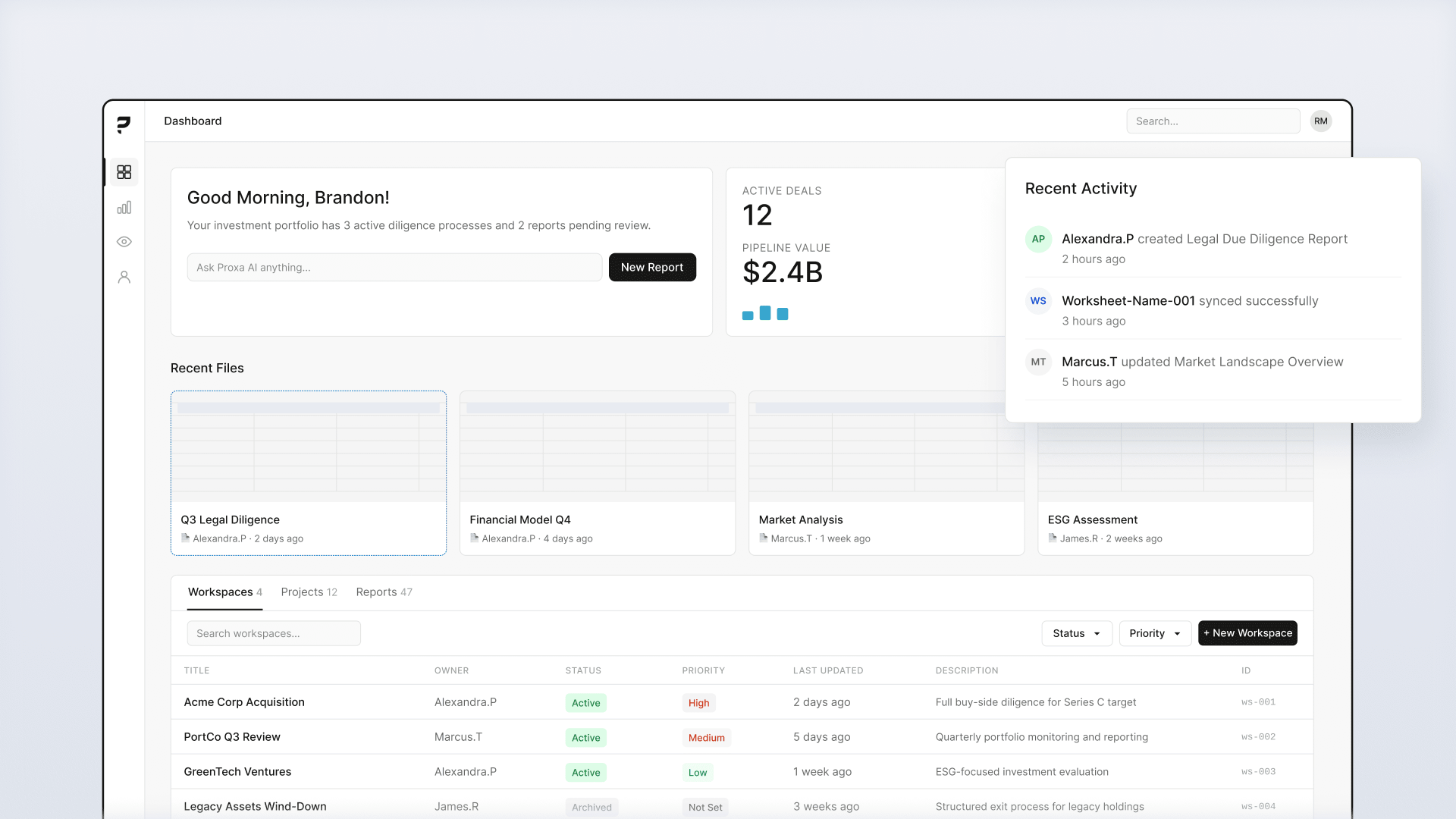

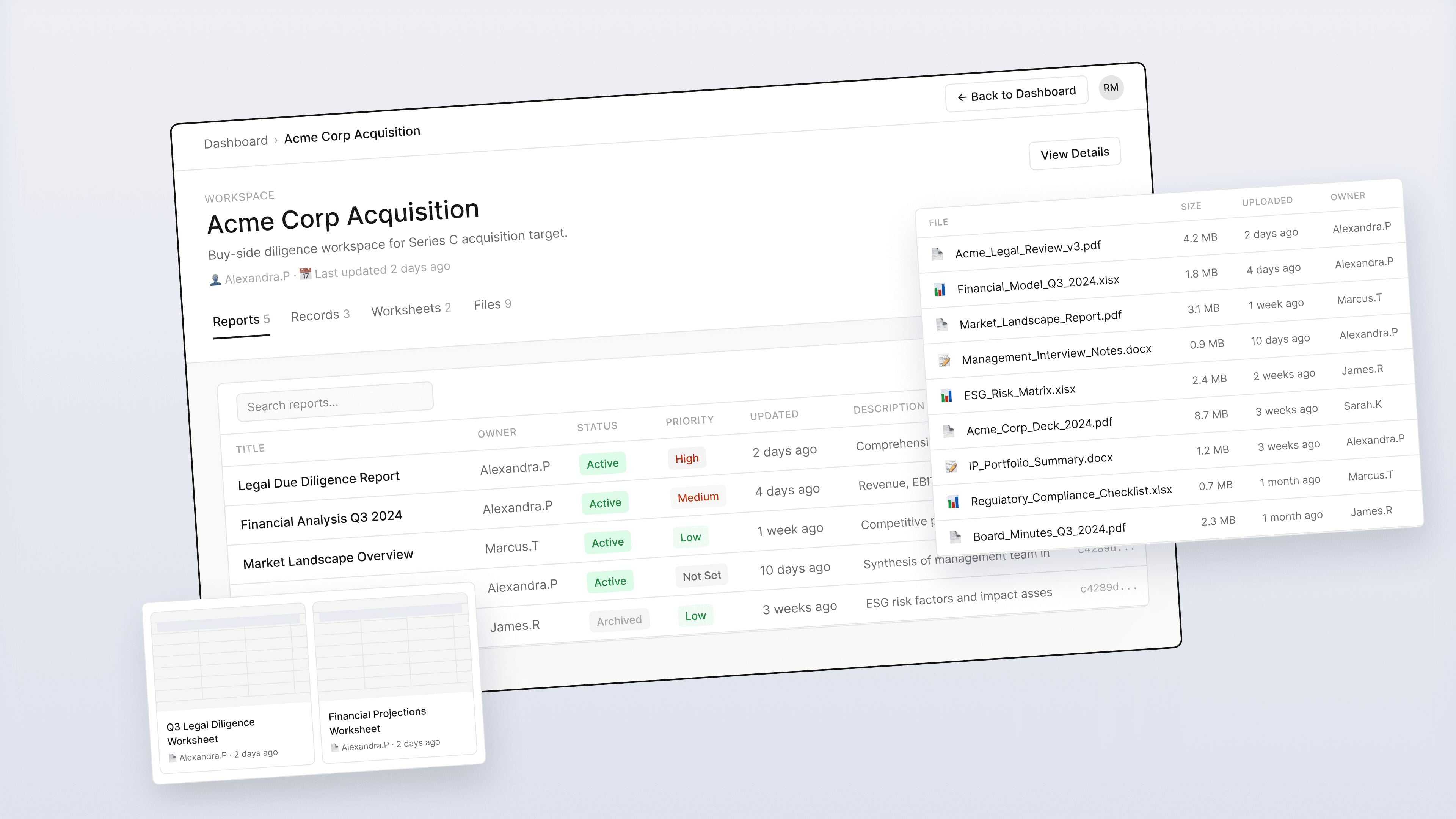

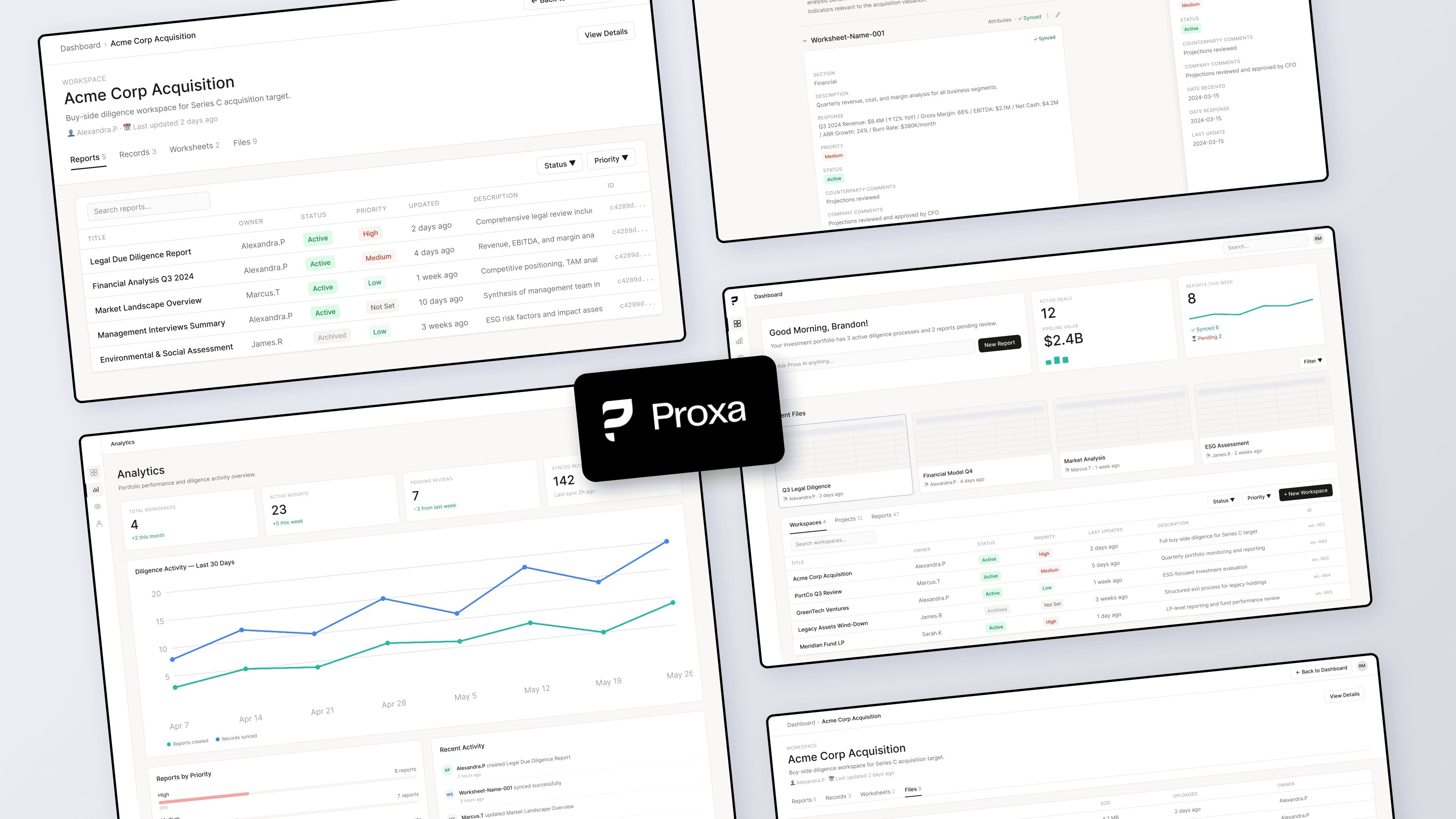

Proxa ships an AI data hub for executive teams. Executives make decisions on this data. Wrong answers are worse than no answers. The initial agent framework was falling apart under production constraints, and Proxa needed a system their internal team could own and operate without us.

The architecture they had was built for a prototype. Too many orchestration layers. Too much custom integration glue. Failure modes that hid problems instead of surfacing them. Executives were starting to lose confidence because the system kept breaking in ways the team couldn’t debug.

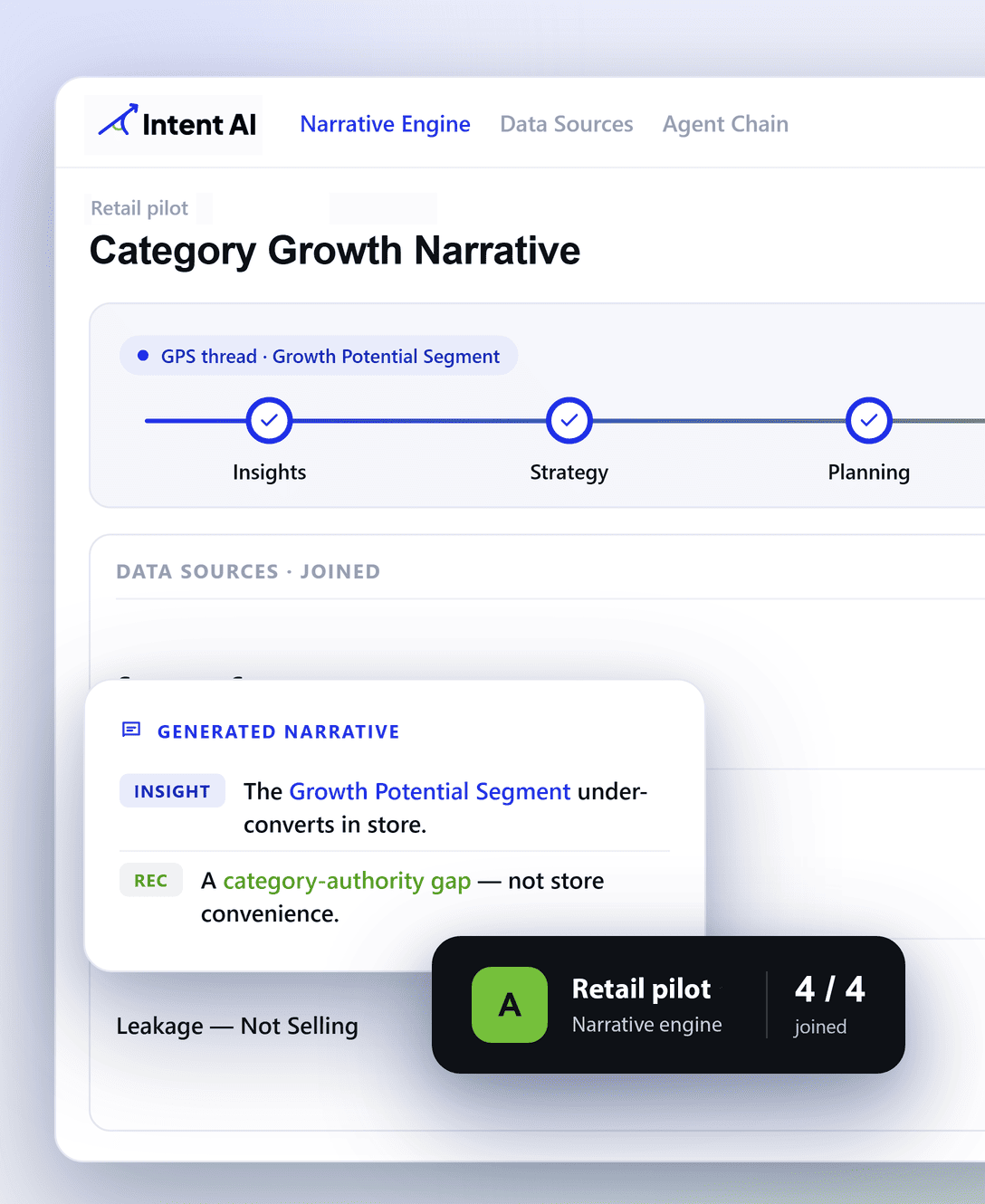

We shipped a simpler stack: agentic retrieval running against the Claude API, grounded synthesis with inline source attribution, and a conversational UI that lets executives search the corpus in natural language. Three core features. One architectural principle: if Proxa’s team couldn’t operate it after we left, it didn’t ship. System survivability is the outcome.

Objectives

- Eliminate hallucinated answers on executive-facing output.

- Ground every generated narrative in real source data.

- Scale document types without rebuilding the retrieval layer.

- Leave Proxa’s team in full control of the runtime after handoff.

- Ship on a stack that doesn’t require dedicated AI ops ownership.

Challenges

Solutions

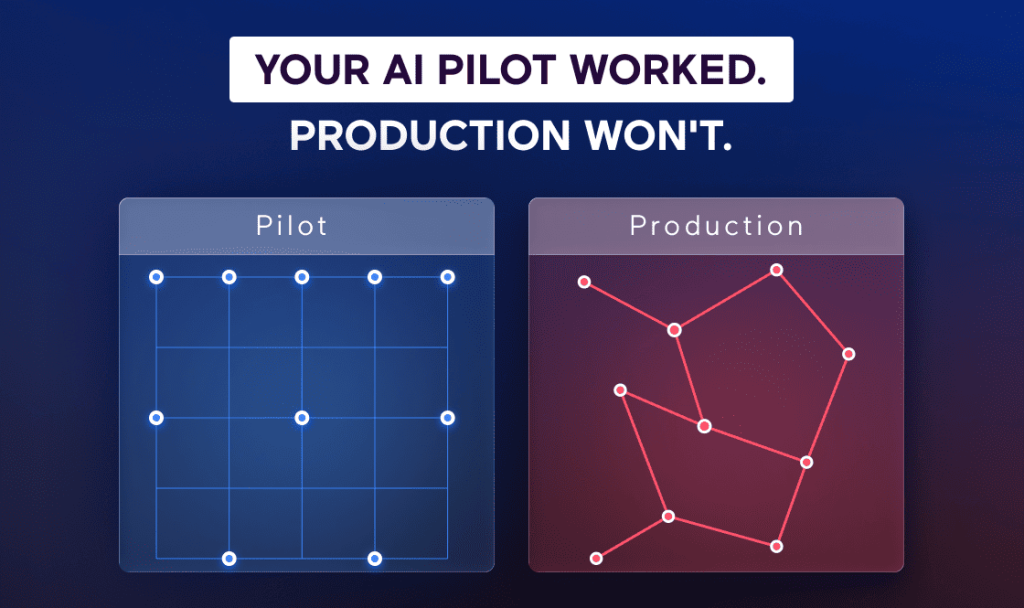

An Inherited Framework Failing Under Production Load

Proxa’s agent framework worked in development. It broke at scale. Too many moving parts. Too many custom orchestration layers. When something failed, the system hid the failure instead of surfacing it. The team couldn’t debug what was breaking because the architecture was too fragmented. Every new feature added risk. Every integration was a potential failure point.

Rewrite on a Simpler Stack

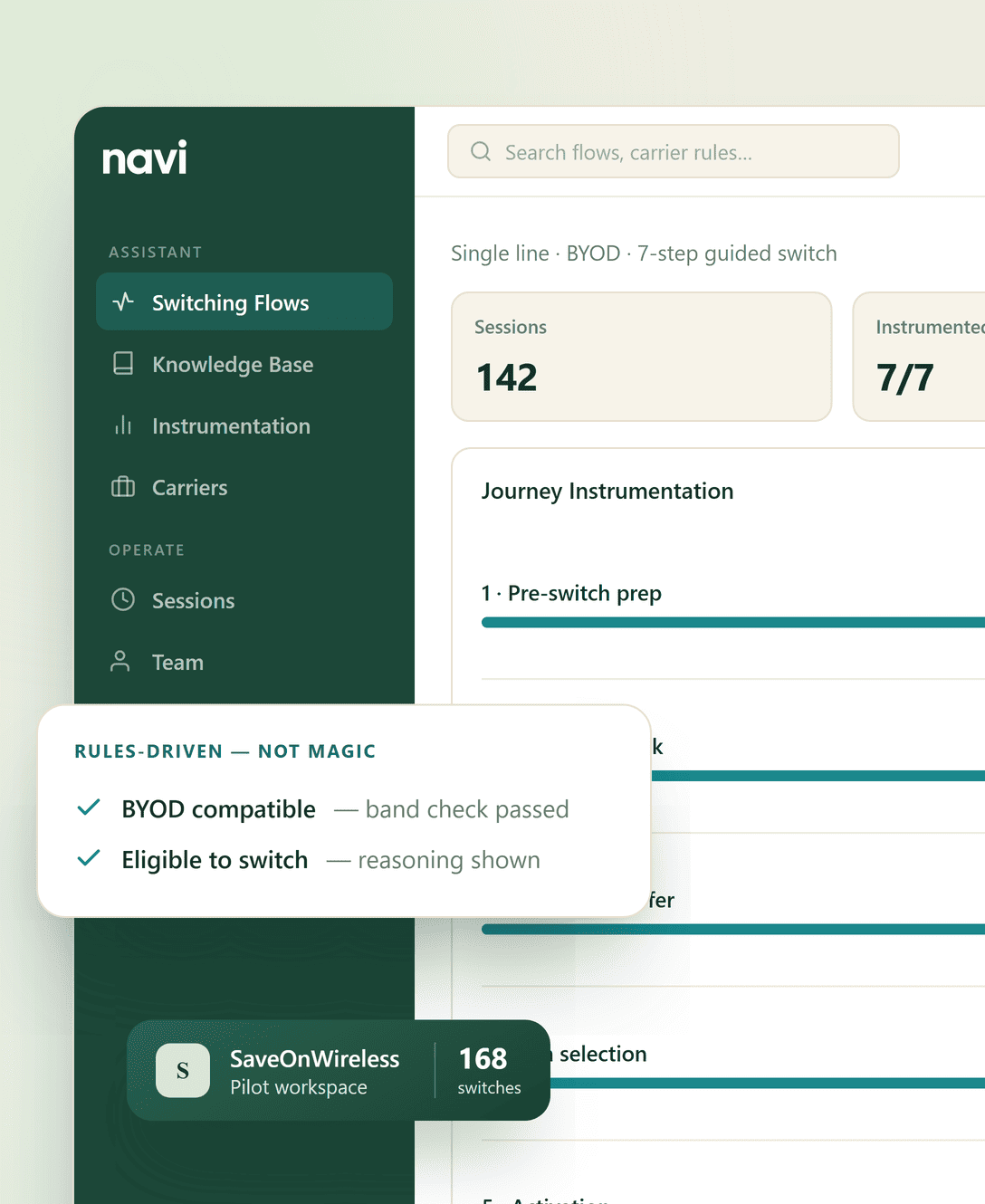

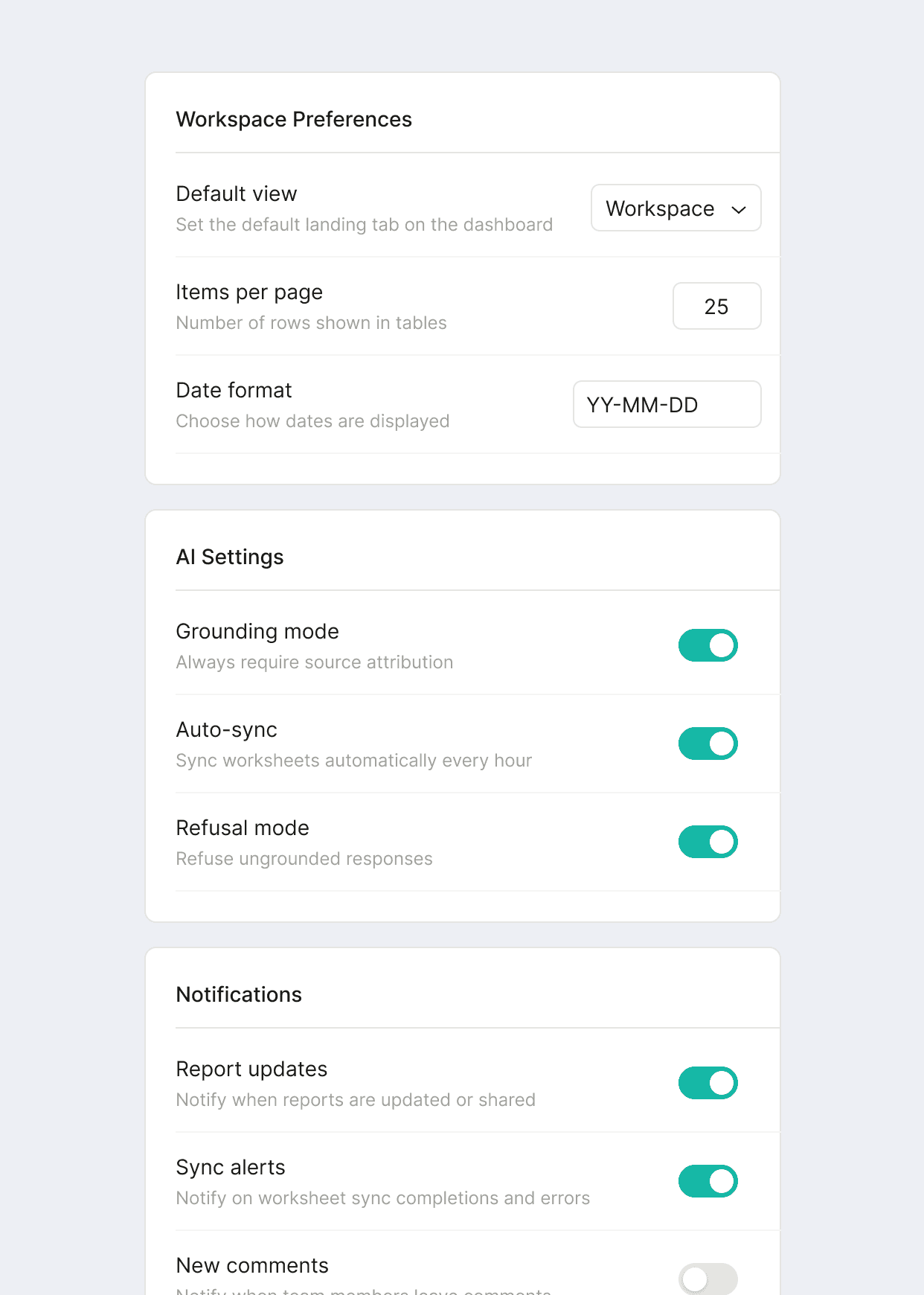

We replaced the orchestration framework with a tight agentic retrieval loop: Claude with direct tool access to the document corpus. The model reasons about what to pull next. Retrieve a thin slice, refine, search again. No vector databases. No embedding pipelines. No retrieval tuning services. We give up index-time optimizations, but we gain an architecture Proxa’s team can own, extend, and debug without us. That’s the real tradeoff.
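To make the loop concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not Proxa's production code. The `call_model` callable stands in for a real Claude API call and is assumed to return a dict whose `content` blocks follow the Messages API shape (`text` and `tool_use` blocks). The names `run_retrieval_loop`, `NoAnswerError`, `scripted_model`, and the `search` tool are all hypothetical.

```python
from typing import Callable


class NoAnswerError(RuntimeError):
    """Raised when the loop runs out of turns without a final answer."""


def run_retrieval_loop(
    call_model: Callable[[list[dict]], dict],
    tools: dict[str, Callable[..., str]],
    question: str,
    max_turns: int = 8,
) -> str:
    """Let the model call tools until it answers; fail loudly otherwise."""
    messages: list[dict] = [{"role": "user", "content": question}]
    for _ in range(max_turns):
        reply = call_model(messages)
        blocks = reply["content"]
        messages.append({"role": "assistant", "content": blocks})

        tool_calls = [b for b in blocks if b["type"] == "tool_use"]
        if not tool_calls:
            # No further tool requests: the text blocks are the final answer.
            return "".join(b["text"] for b in blocks if b["type"] == "text")

        results = []
        for call in tool_calls:
            if call["name"] not in tools:
                raise KeyError(f"model requested unknown tool: {call['name']}")
            output = tools[call["name"]](**call["input"])
            results.append(
                {"type": "tool_result", "tool_use_id": call["id"], "content": output}
            )
        # Tool results go back to the model as the next user turn.
        messages.append({"role": "user", "content": results})

    raise NoAnswerError(f"no final answer after {max_turns} turns")


def scripted_model(messages: list[dict]) -> dict:
    """Stand-in for the real API call: search once, then answer."""
    if len(messages) == 1:
        return {"content": [{"type": "tool_use", "id": "t1", "name": "search",
                             "input": {"query": "Q3 revenue"}}]}
    return {"content": [{"type": "text",
                         "text": "Q3 revenue was flat [finance/q3.md]."}]}


answer = run_retrieval_loop(
    scripted_model,
    {"search": lambda query: "finance/q3.md: revenue was flat in Q3"},
    "How did Q3 revenue look?",
)
```

The turn cap is the design choice that matters here: a loop that cannot finish raises instead of returning a partial answer.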

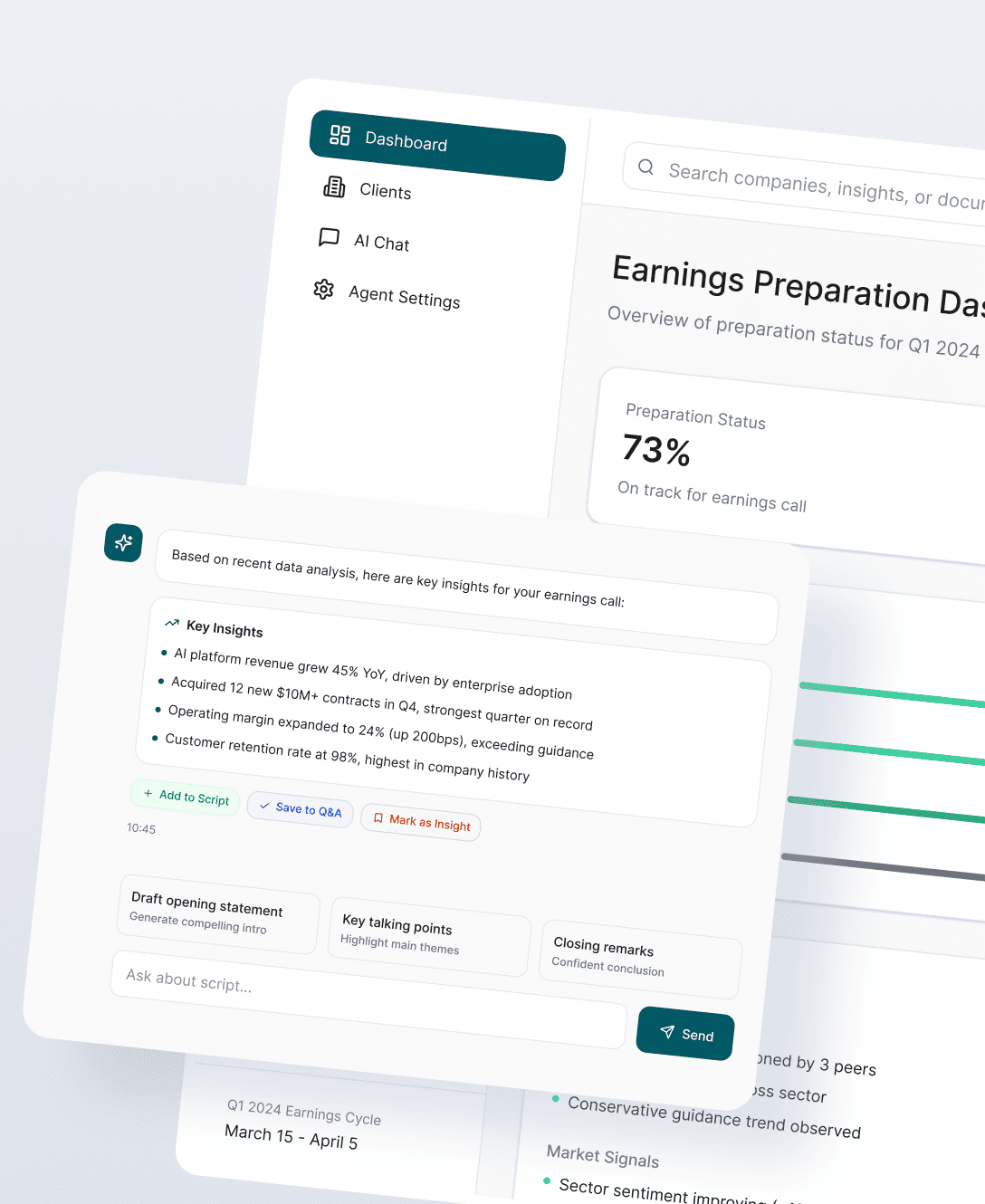

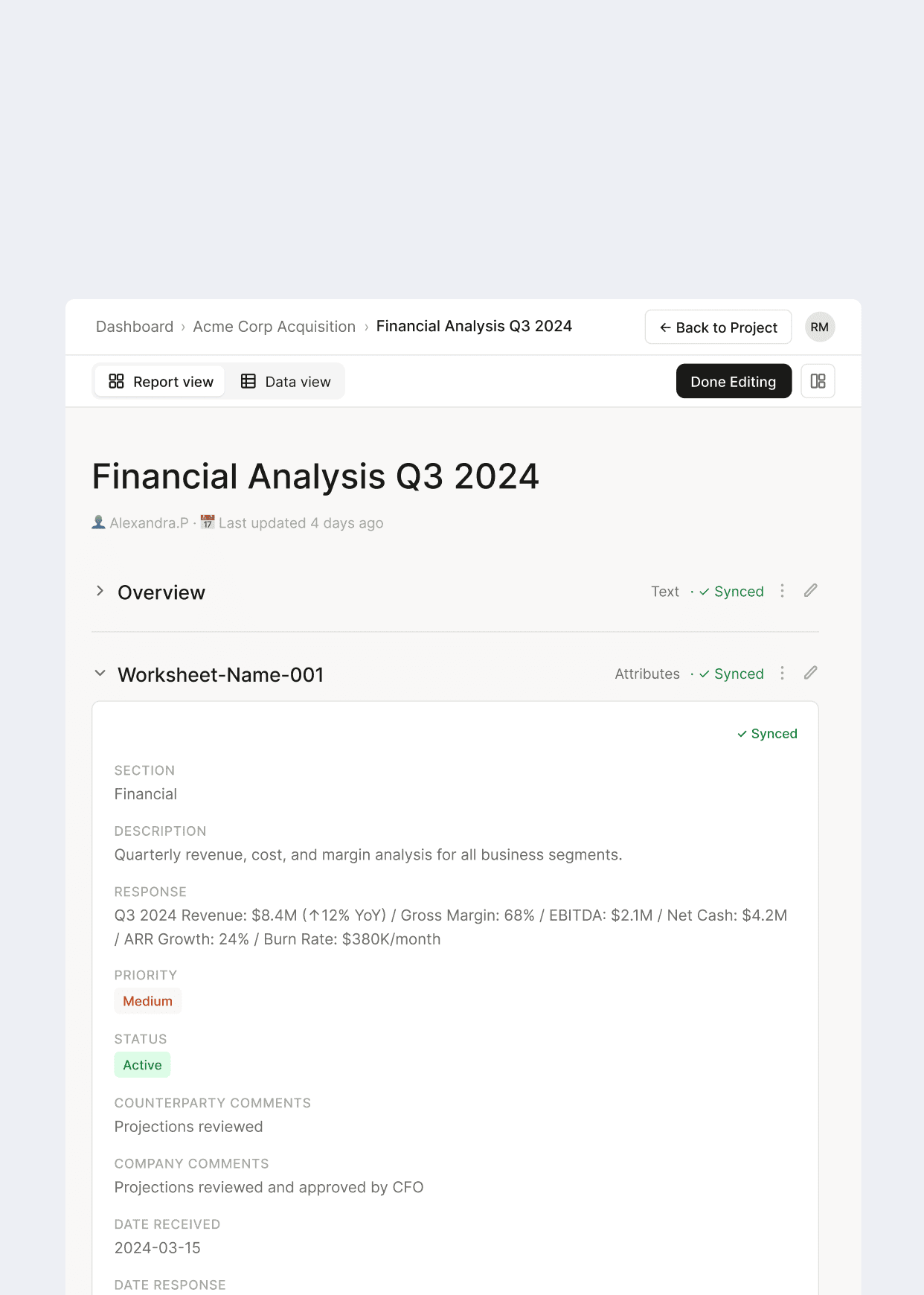

Generating Executive Narratives That Can Be Trusted

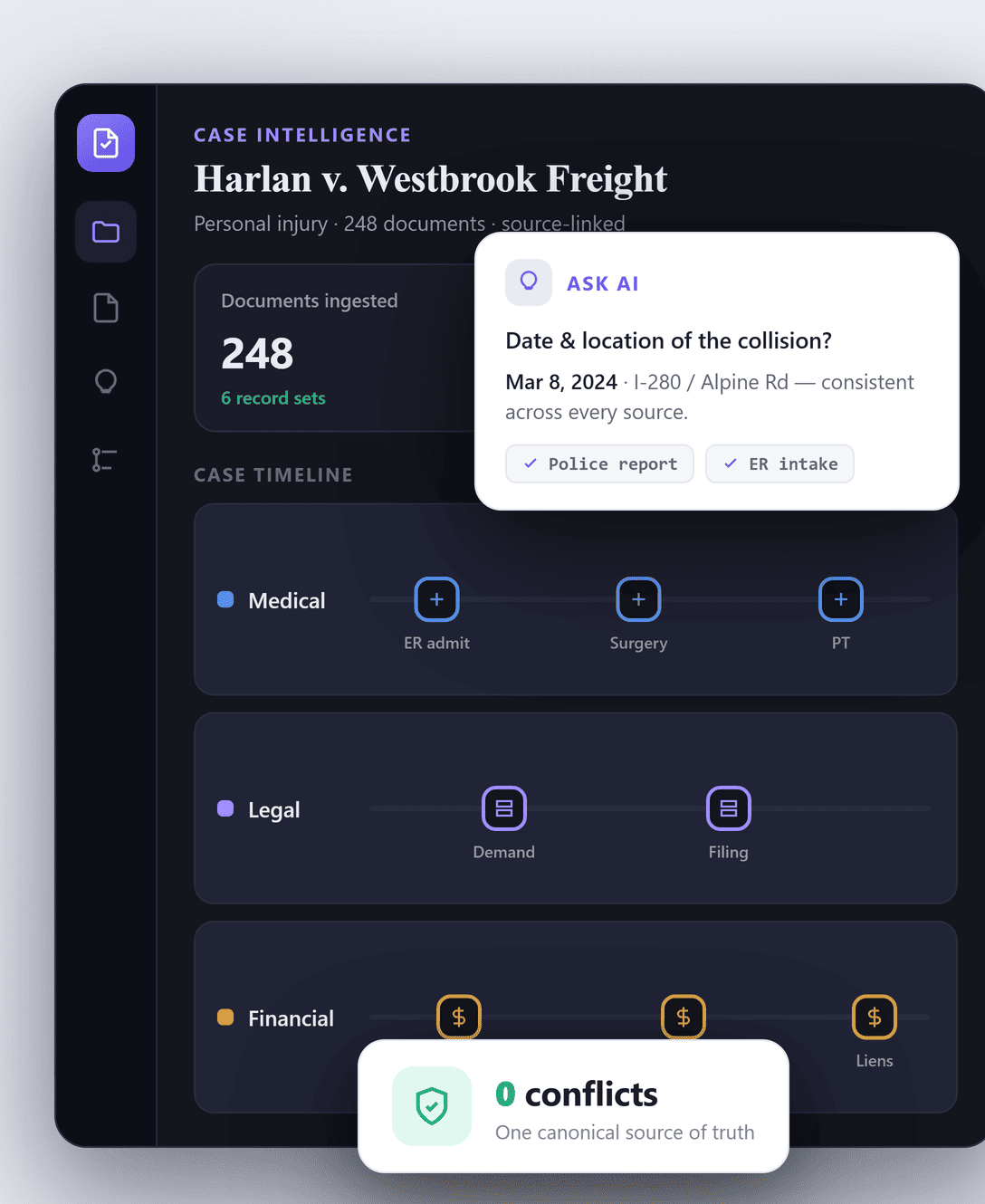

Retrieval solves half the problem. The other half is what the model does with what it finds. Most generative reporting layers synthesize over whatever was retrieved and produce confident-sounding output with no way to trace it back to source data. At the executive level, a confidently wrong fact ends up in a board deck before anyone catches it. Executives stop trusting the output. They stop using the product.

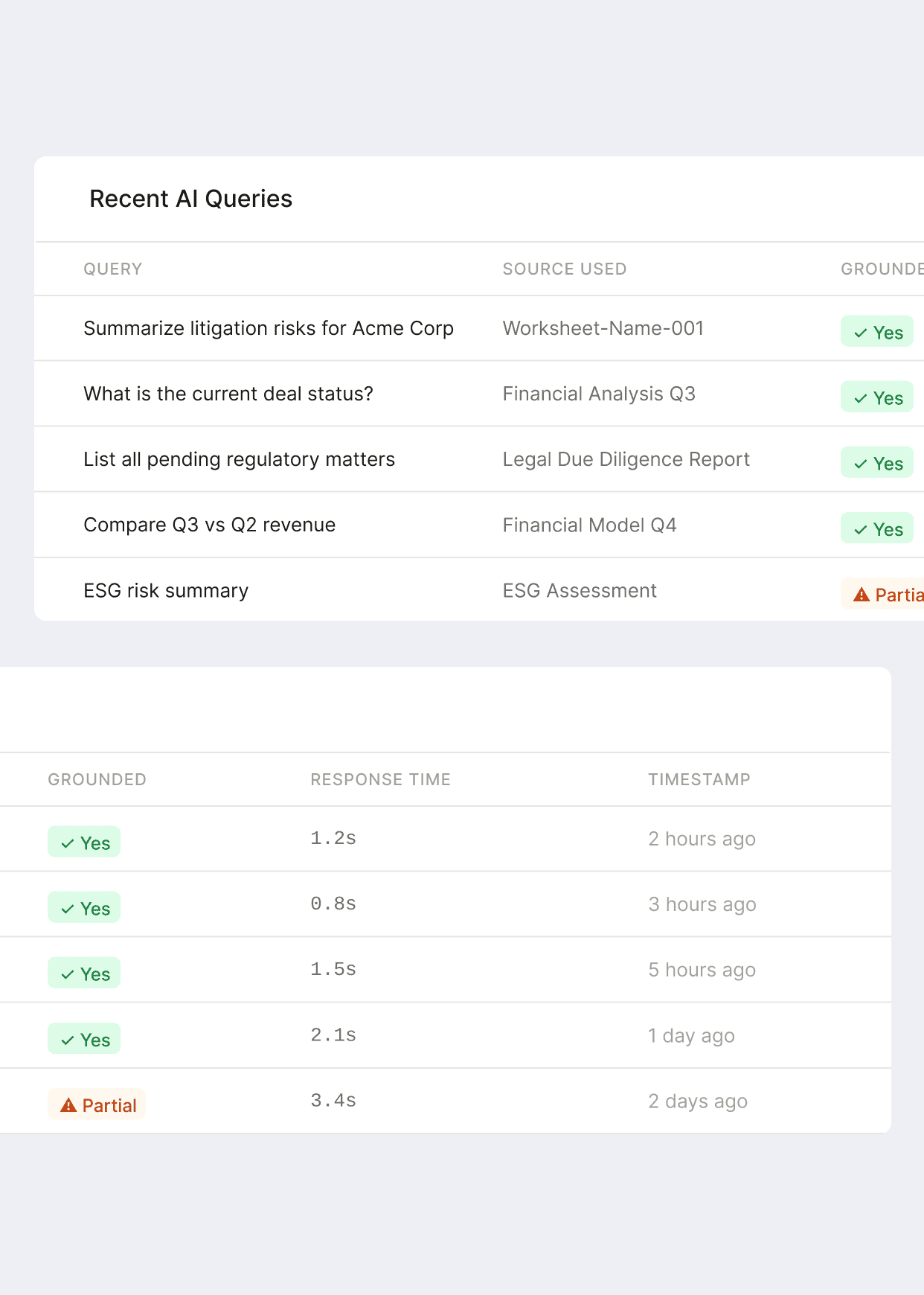

Grounded Synthesis with Inline Source Attribution on Every Output

We built the synthesis layer so that every report and every answer carries source attribution inline. If the model can’t ground a claim in the retrieved corpus, it refuses. If sources conflict, it flags them. Grounding is not a feature that gets added later. At the executive layer, source attribution and visible reasoning are the product. They are what make the output trustworthy enough to act on.
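One way to enforce the "ground it or refuse" rule is to check attribution in code before anything is rendered. The sketch below assumes the model returns its answer as a list of claims, each carrying the IDs of the sources it relied on. That structure, and the names `Claim`, `render_grounded`, and `REFUSAL`, are hypothetical and not taken from Proxa's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    text: str
    source_ids: tuple[str, ...]  # documents the model says support this claim


REFUSAL = "I can't answer that from the available documents."


def render_grounded(claims: list[Claim], retrieved_ids: set[str]) -> str:
    """Render claims with inline citations, or refuse if any claim is ungrounded."""
    if not claims:
        return REFUSAL
    for claim in claims:
        cited = set(claim.source_ids)
        # A claim is grounded only if it cites at least one source and every
        # cited source was actually retrieved during this session.
        if not cited or not cited <= retrieved_ids:
            return REFUSAL
    return " ".join(
        f"{claim.text} [{', '.join(claim.source_ids)}]" for claim in claims
    )
```

A single ungrounded claim refuses the whole answer in this sketch. A softer policy could drop only the offending claim, but that would let partial answers through without the reader knowing something was withheld.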

Chat-Over-Documents Fails the Same Way Every Time

Most RAG systems fail silently. Single-shot retrieval pulls whatever chunks rank highest by embedding similarity. The model synthesizes over whatever lands. At the executive layer, a system that hallucinates is worse than no system.

Make the Retrieval Loop the Reliability Mechanism

We architected reliability into the loop itself, not as a validation layer bolted on after synthesis. Thin pull. Model reasons about what else to check. Conflicting sources? Flag them. No grounding? Refuse. Every report and every copilot answer carries source attribution inline. The agentic loop is the reason you can trust the output. It fails loudly. That’s the right failure mode for executive-facing AI.
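Flagging conflicting sources can be a small, explicit step inside the loop. The sketch below assumes facts have already been extracted from retrieved documents as `(key, value, source_id)` tuples. That representation, the function name `find_conflicts`, and the sample values in the usage note are illustrative assumptions.

```python
from collections import defaultdict
from typing import Iterable


def find_conflicts(
    facts: Iterable[tuple[str, str, str]],
) -> dict[str, dict[str, list[str]]]:
    """Return every key reported with more than one distinct value.

    The result maps key -> value -> list of source IDs reporting that value.
    """
    by_key: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for key, value, source_id in facts:
        by_key[key][value].append(source_id)
    # Keep only keys where sources disagree.
    return {
        key: dict(values) for key, values in by_key.items() if len(values) > 1
    }
```

For example, if `a.md` and `b.md` report different values for the same metric, the result lists both values with their sources, so the answer can surface the disagreement instead of silently picking one.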

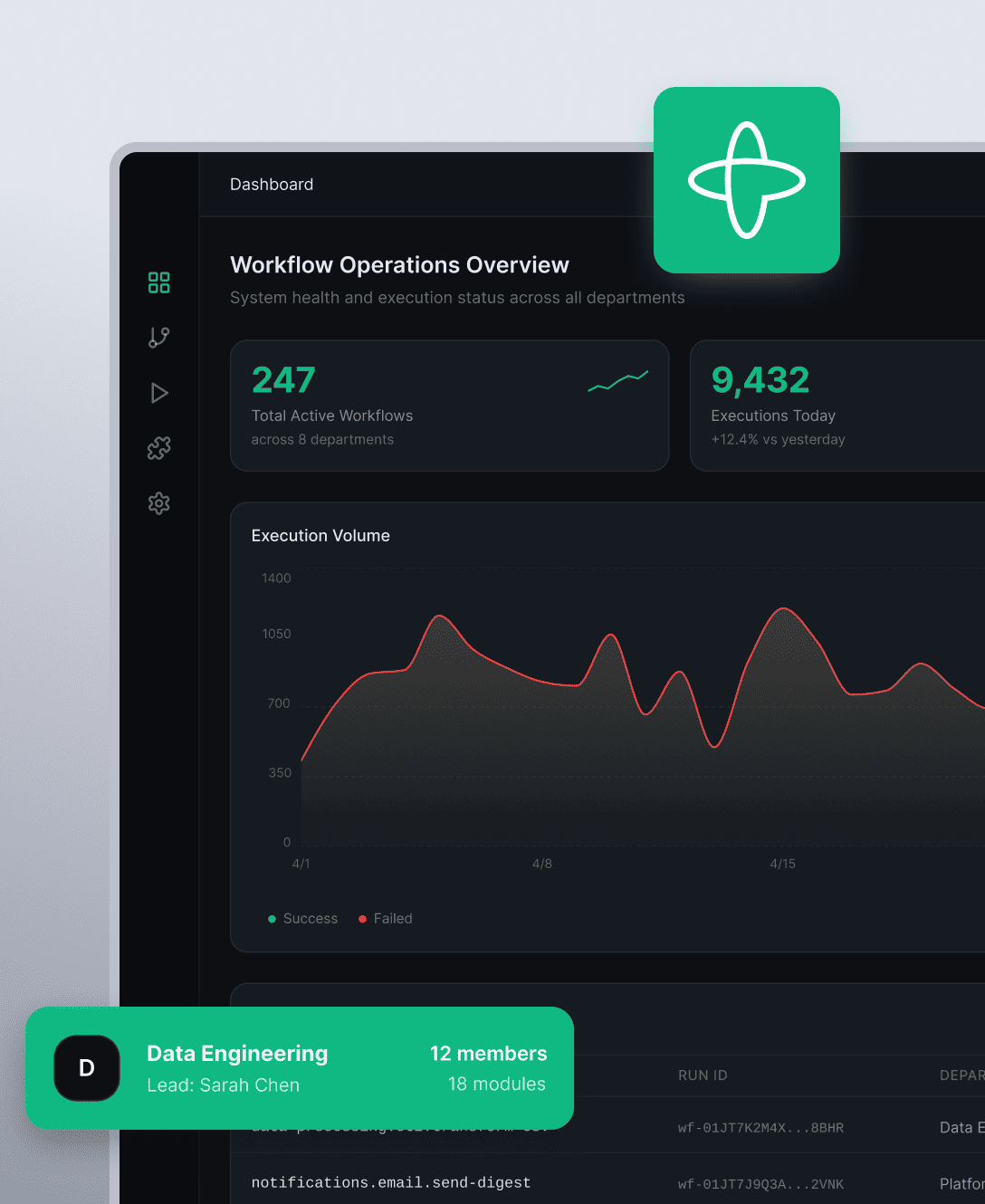

Stack Complexity vs. Internal Team Capacity

A conventional production AI stack needs a dedicated owner for everything in it: vector databases, embedding services, rerankers, retrieval orchestration, monitoring pipelines. Proxa’s engineering team is lean. Every moving part we add is one they own forever. Adding complexity to solve a problem that doesn’t exist for them is adding liability.

Strip the Stack to What Proxa Actually Owns

We collapsed the architecture to its essentials: Claude API for reasoning, document access via standard file I/O, React UI on the frontend, hosted on Azure where Proxa already runs. No vendor lock-in. No proprietary black boxes. No custom services that require ongoing support. If an engineer at Proxa couldn’t understand, modify, and operate a component after we shipped it, it didn’t ship.
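As a sketch of what "document access via standard file I/O" can look like, the two functions below search and read plain-text files with nothing but the Python standard library. The function names, the `path:line: text` hit format, and the tool descriptions are assumptions for illustration. Only the `name` / `description` / `input_schema` layout of the tool definitions follows the Claude API's documented tool format.

```python
from pathlib import Path


def search_documents(root: Path, query: str, max_hits: int = 20) -> list[str]:
    """Case-insensitive substring search, returning 'path:line: text' hits."""
    needle = query.lower()
    hits: list[str] = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except UnicodeDecodeError:
            continue  # not a UTF-8 text file; skip it
        for number, line in enumerate(text.splitlines(), start=1):
            if needle in line.lower():
                relative = path.relative_to(root).as_posix()
                hits.append(f"{relative}:{number}: {line.strip()}")
                if len(hits) >= max_hits:
                    return hits
    return hits


def read_document(root: Path, relative_path: str) -> str:
    """Read one document, refusing any path that escapes the corpus root."""
    target = (root / relative_path).resolve()
    if not target.is_relative_to(root.resolve()):
        raise PermissionError(f"path escapes corpus root: {relative_path}")
    return target.read_text(encoding="utf-8")


# Tool definitions in the shape the Claude API expects for tool use.
TOOL_DEFINITIONS = [
    {
        "name": "search_documents",
        "description": "Search the document corpus for a phrase.",
        "input_schema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    },
    {
        "name": "read_document",
        "description": "Read one document by its path relative to the corpus root.",
        "input_schema": {
            "type": "object",
            "properties": {"relative_path": {"type": "string"}},
            "required": ["relative_path"],
        },
    },
]
```

Everything here is debuggable with a text editor and a terminal, which is the point of the tradeoff: no index to rebuild and no service to keep alive.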

Strategy

Production judgment on this build came down to one constraint: long-term ownership matters more than short-term convenience. Every architectural decision was evaluated through that lens.

De-Risked by Rewriting, Not Adding

Keeping the inherited framework would have been the lower-friction choice in the short term. It also would have left Proxa operating a system that was going to keep breaking. We made the harder call: rewrite the agent layer on a simpler stack. Not because rewriting is fun, but because the long-term architecture bet is what matters. A broken system you’re dependent on is a tax on every feature you ship next.

Managed Tradeoffs with Agentic Retrieval

We chose the retrieval pattern that fails loudly over the one that fails silently. Fixed pipelines hide their failures. Agentic loops either find grounding or tell you they didn’t. That’s the right failure mode for executive-facing output. We lose some retrieval optimization, but we gain visibility into when the system isn’t confident. That visibility is the product.

Built for Client Independence

Azure for hosting, because Proxa already runs on it. Claude API for reasoning, because one vendor is simpler than a custom orchestration framework. Standard React on the UI. Every component was evaluated against one question: can Proxa’s team own this after we leave? If the answer was no, it didn’t ship. That constraint is what made the architecture durable.

Project Results & Impact

Proxa now runs an agent-driven AI layer in production, integrated into the product they already ship. The stripped-down stack is the long-term architecture bet: it stays cheap to operate as the document corpus and user base grow.

Tangible outputs:

- Agentic retrieval loop running on the Claude API.

- Generative reporting producing executive-ready narratives from their data.

- Grounded synthesis with inline source attribution on every output.

- Generative UI layer for in-app conversational access to the corpus.

- Azure-hosted infrastructure integrated into Proxa’s existing product.

- Documentation and runbooks owned by Proxa’s internal team.

Key Takeaways

- Agentic retrieval is production-grade. When the model reasons about what to pull next, pipeline machinery disappears. You get simpler architecture, lower operational burden, and better visibility into when the system isn’t confident.

- Sometimes the right move is to rewrite. An inherited framework that’s going to keep breaking under load is a bigger tax than a clean replacement. Short-term friction beats long-term liability.

- The stack that survives is the one your team owns. Architecture that requires dedicated AI ops ownership is architecture that won’t scale inside a lean team. Strip it down. Make it transparent. Let the team understand every component.

- Grounding is not a feature you add later. At the executive layer, source attribution and visible reasoning are the product. They’re what make the output trustworthy. Build them in from the start.

- Client independence is an architectural outcome. It’s not a closing-phase handoff or a nice-to-have documentation package. It shapes every decision from day one. If your team can’t own it, it shouldn’t ship.

Worth thinking about if you’re shipping agent-driven AI into a live product and you want a stack your team can own.
