There is a moment in every AI project where everything feels possible. You have a prototype that summarizes documents, or a chatbot that answers questions about your product catalog, or an AI agent that drafts emails from CRM data. The demo works. Everyone is excited. The CEO sends a Slack message with a fire emoji. Then you try to put it into production and the whole thing falls apart.

We have spent the last 16 years building software at Spiral Scout, and the last two specifically helping companies ship AI systems that do real work inside real businesses. Not demos. Not proofs of concept that live in a slide deck. Actual systems that touch ERP data, process orders, talk to legacy APIs, and run around the clock without someone watching the logs. The pattern I keep seeing is the same: the distance between “look what AI can do” and “this actually runs our business” is where projects go to die. And almost nobody talks about it clearly.

This post is for the builders. If you are in the vibe coding world spinning up agents and workflows fast, or if you are a services firm trying to figure out how to deliver AI projects without getting burned, these are the failure modes I see over and over – and the things we have learned the hard way about how to avoid them.

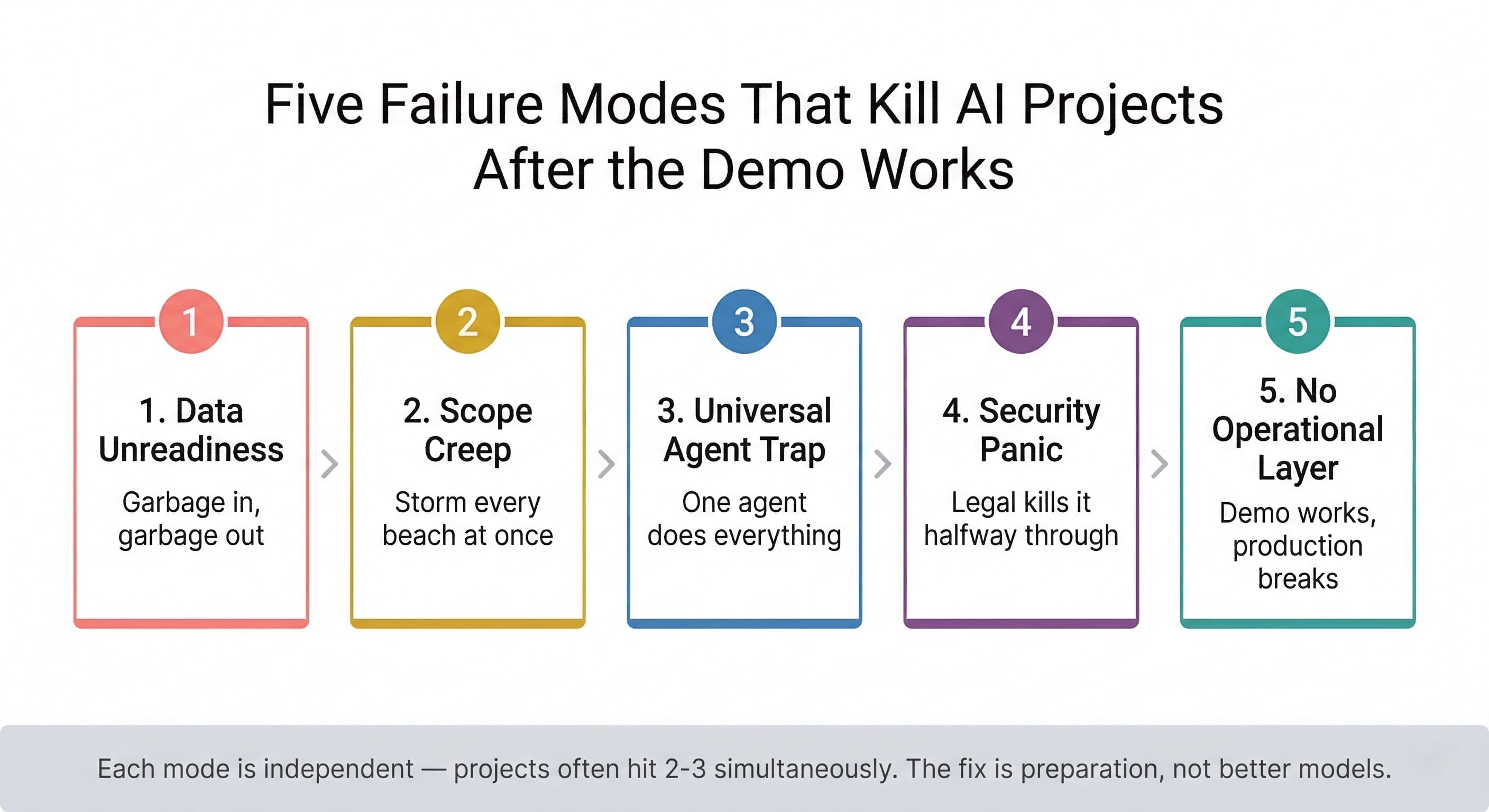

Five failure modes that kill AI projects after the demo works.

The Data Problem Nobody Wants to Deal With

The most common reason AI projects fail has nothing to do with AI. It is the data underneath.

Think of it like trying to build a house on a landfill. You can have the best architect in the world, but if the foundation is garbage, the house is going to sink. That is what happens when companies try to bolt AI agent automation onto data that lives in 47 different Excel files, three legacy databases, a shared drive nobody has organized since 2019, and “Steve’s head” – Steve being the guy who has been there 20 years and just knows where everything is.

We ran into this head-on with a CPQ project we built through Wippy for an industrial distributor. The client wanted an intelligent quoting agent that could configure complex hose and fitting assemblies – hundreds of SKUs, compatibility rules, pressure ratings, regional pricing. Sounds like a perfect AI use case, right? It is. But when we got into the data, the product catalog was spread across multiple systems with inconsistent naming, missing specs, and pricing that lived partly in an ERP and partly in a rep’s memory.

Before we wrote a single line of agent logic, we had to run what I call a meta-analysis – basically feeding the schema and structure of their data into an LLM to figure out what is actually possible with what they have. Not moving the data, not building a pipeline. Just understanding the shape of the problem. That step alone saved months of wasted effort because it surfaced gaps before anyone committed budget to filling them. An AI Readiness Audit formalizes this exact process – it is the first thing we recommend before any team writes agent code.
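To make the meta-analysis step concrete, here is a minimal sketch of the kind of schema profile you might assemble before handing anything to an LLM. It is not our actual tooling: it assumes the data sits in a SQLite database (a stand-in for whatever systems you really have), and the `skus` table and its columns are hypothetical. It only reads structure, row counts, and null rates, and it stops short of calling any model.

```python
import sqlite3


def profile_schema(conn: sqlite3.Connection) -> dict:
    """Summarize every table: row count, column types, and null rates."""
    profile = {}
    tables = [
        row[0]
        for row in conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        )
    ]
    for table in tables:
        total = conn.execute(f'SELECT COUNT(*) FROM "{table}"').fetchone()[0]
        columns = {}
        # PRAGMA table_info rows are (cid, name, type, notnull, dflt_value, pk).
        for row in conn.execute(f'PRAGMA table_info("{table}")').fetchall():
            name, col_type = row[1], row[2]
            nulls = conn.execute(
                f'SELECT COUNT(*) FROM "{table}" WHERE "{name}" IS NULL'
            ).fetchone()[0]
            columns[name] = {
                "type": col_type,
                "null_rate": nulls / total if total else 0.0,
            }
        profile[table] = {"rows": total, "columns": columns}
    return profile


def render_summary(profile: dict) -> str:
    """Flatten the profile into compact text suitable for pasting into a prompt."""
    lines = []
    for table, info in profile.items():
        lines.append(f"table {table}: {info['rows']} rows")
        for name, col in info["columns"].items():
            lines.append(f"  {name} ({col['type']}): {col['null_rate']:.0%} null")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical catalog data with the kind of gaps described above.
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE skus (sku TEXT, pressure_rating_psi REAL, list_price REAL)"
    )
    conn.executemany(
        "INSERT INTO skus VALUES (?, ?, ?)",
        [
            ("H-100", 3000.0, 12.5),
            ("H-200", None, None),
            ("F-010", 5000.0, 7.0),
            ("F-020", None, 4.0),
        ],
    )
    print(render_summary(profile_schema(conn)))
```

The point of the summary is that it describes the shape of the data without moving the data itself. A column that is half empty shows up here, long before an agent starts guessing at missing pressure ratings.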

If you are building AI and you skip this step, you are gambling. And the house always wins.

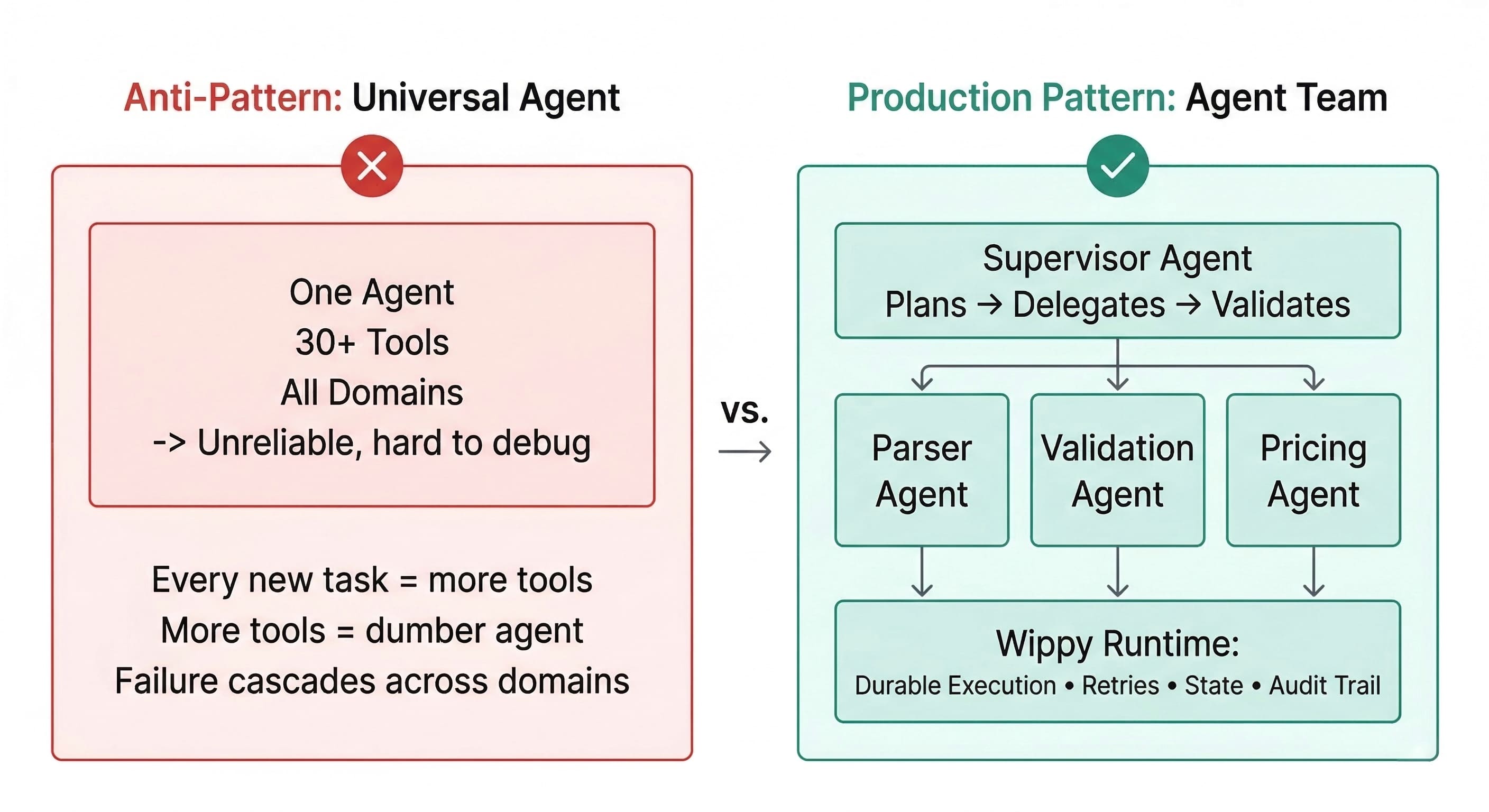

The Universal Agent Trap Will Kill Your Reliability

Here is the second thing that kills projects: trying to build one agent that does everything.

I get why it is tempting. You see demos where a single agent browses the web, writes code, queries a database, sends an email, and books a meeting. Looks convincing. But in production, every tool you add to an agent’s toolkit makes it a little dumber and a little less reliable. By the time you have given it 30 or 40 tools, it is like handing a new employee the entire company operations manual on day one and saying “figure it out.” We explored this problem in depth in our breakdown of modern AI agent architectures – the research is clear that agent reliability degrades as scope expands.

The approach that actually works is decomposition. Instead of one super-agent, you build a team of specialists coordinated by a supervisor. One agent reads the file. Another validates the data. A third makes the decision. A fourth executes the action. Each one is small, testable, and easy to debug. This is the delegation between agents pattern that separates production systems from prototypes.
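A rough sketch of that read, validate, decide, execute split might look like the following. Plain Python functions stand in for the specialist agents, and every name here (`Step`, `Supervisor`, `build_team`, the order format) is an assumption made up for illustration, not Wippy's API. In a real system each function would wrap a model call with a narrow toolset.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    name: str
    run: Callable[[dict], dict]
    max_attempts: int = 1


@dataclass
class Supervisor:
    """Runs specialists in order, retries failures, and records what happened."""
    steps: list[Step]
    log: list[str] = field(default_factory=list)

    def handle(self, job: dict) -> dict:
        state = dict(job)
        for step in self.steps:
            for attempt in range(1, step.max_attempts + 1):
                try:
                    state = step.run(state)
                    self.log.append(f"{step.name}: ok (attempt {attempt})")
                    break
                except Exception as exc:
                    self.log.append(f"{step.name}: failed (attempt {attempt}): {exc}")
            else:
                # No attempt succeeded, so stop instead of passing bad state on.
                raise RuntimeError(f"step {step.name!r} exhausted its attempts")
        return state


def read_order(state: dict) -> dict:
    sku, qty = state["raw"].split(",")
    return {**state, "sku": sku.strip().upper(), "qty": int(qty)}


def validate_order(state: dict) -> dict:
    if state["qty"] <= 0:
        raise ValueError("quantity must be positive")
    return state


def decide(state: dict) -> dict:
    # Hypothetical rule: small orders are auto-approved, large ones go to a human.
    return {**state, "approved": state["qty"] <= 10}


def execute(state: dict) -> dict:
    status = "submitted" if state["approved"] else "needs_review"
    return {**state, "status": status}


def build_team() -> Supervisor:
    return Supervisor(
        steps=[
            Step("read", read_order),
            Step("validate", validate_order),
            Step("decide", decide),
            Step("execute", execute, max_attempts=3),
        ]
    )


if __name__ == "__main__":
    team = build_team()
    print(team.handle({"raw": "h-100, 3"}))
    print(team.log)
```

Each specialist can be unit tested on its own, and when something goes wrong the supervisor's log tells you which step failed and on which attempt.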

Universal agent (anti-pattern) vs. supervised agent team (production pattern).

This is core to how we architected Wippy. It is not a single-agent product – it is the runtime that orchestrates teams of agents across long-running workflows. When we built an automated QA system for a client, we did not create one agent that “does testing.” We built a pipeline where one agent analyzes the codebase, another generates test cases, another executes them, and a supervisor agent manages the whole lifecycle, including retries when something fails. Each piece is simple. The orchestration is where the real engineering lives. You can see this same multi-agent coordination pattern across every production system we have shipped.

If you are vibe coding and your single-file agent works great on a happy path, that is a strong start. But the moment you need it to handle edge cases, retry failures, manage state across hours or days, and not lose its mind when an API times out – you need actual infrastructure under it. That is not a criticism of fast prototyping. It is a recognition that production and prototype are different animals.

Security Panic Kills Projects That Already Work

Even when the tech works perfectly, projects die for a completely non-technical reason: security and legal teams kill them.

This happens constantly. A team gets halfway through a pilot, the AI is performing well, everyone is bought in – and then someone from legal asks “wait, are we sending our client contracts to OpenAI’s servers?” and the whole thing freezes. We watched this pattern nearly derail Project Fortress, a legal deal management platform with 50+ agents handling sensitive contract data. The solution was not better AI – it was better architecture.

The fix is boring but essential: have the architecture conversation first. Before you build anything, define exactly how data flows, where it is processed, what the model retains (ideally nothing), and how you isolate tenant data. When we deploy Wippy for clients, one of the first things we establish is that the AI models are essentially disposable fuel – they process information but do not store it. The client’s data and the agents trained on it stay within their environment. The “gasoline” is replaceable. The “car” and its contents belong to the client. That means the client owns the IP, the agents, and all of the data – always.

If you are a services firm delivering AI projects and you are not leading with this conversation, you are going to waste weeks of engineering time on projects that get killed by a compliance review.

Find Your Normandy Before You Storm Every Beach

Anton Titov, our CTO and the author of the official Temporal PHP SDK, has a line I keep coming back to: find your Normandy. Do not try to storm every beach at once. Pick one specific, painful, manual process and solve that completely before expanding.

The temptation – especially when a client is excited and the budget is there – is to build the whole vision in phase one. An AI that handles quoting and inventory and customer communications and forecasting. What you end up with is five things that semi-work instead of one thing that works perfectly. And when the CFO asks “is this thing actually saving us money?” the answer is a mumbled “well, sort of, in theory, once we finish the next phase.”

The projects that succeed start small and prove the plumbing works. Can the agent actually connect to your systems? Can it read the data it needs? Can it produce an output a human would trust? Our Product Discovery process exists specifically to answer those questions before any engineering budget gets committed. If you cannot build a simple agent that talks to your data in a week, you are not ready for the complex reasoning work. Get the wiring right first.

What the Vibe Coding World Gets Right (and What It Misses)

I love what is happening in the vibe coding community. The speed at which people are building functional prototypes is staggering, and it is pulling AI development out of the ivory tower and into the hands of people who actually understand their own problems. That is a big deal.

What gets missed is the operational layer. The prototype works on your laptop with your test data and your patience. Production means handling concurrent users, managing state across sessions that last days or weeks, retrying gracefully when third-party APIs flake out, maintaining audit trails so you can explain why the agent made a specific decision, and doing all of this without someone watching the logs. Anton puts it directly: “If your agent cannot survive an API timeout at 2am without losing state, it is a toy. Infrastructure is what separates a demo from a system.”
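As a toy illustration of what "survive an API timeout without losing state" means, the sketch below checkpoints progress to a JSON file after every step, retries timeouts with exponential backoff, and keeps an audit trail. It is a simplification under stated assumptions, not a durable execution engine and not how Wippy or Temporal implement this: `run_durably`, the checkpoint layout, and the step names are all hypothetical, and each step is assumed to return JSON-serializable state.

```python
import json
import time
from pathlib import Path
from typing import Callable

StepFn = Callable[[dict], dict]


def run_durably(
    steps: list[tuple[str, StepFn]],
    checkpoint: Path,
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> dict:
    """Run steps in order, persisting state so a rerun resumes where it stopped."""
    if checkpoint.exists():
        saved = json.loads(checkpoint.read_text())
    else:
        saved = {"done": [], "state": {}, "audit": []}

    for name, step in steps:
        if name in saved["done"]:
            continue  # Finished in an earlier run, so do not repeat it.
        for attempt in range(1, max_attempts + 1):
            try:
                saved["state"] = step(saved["state"])
            except TimeoutError as exc:
                saved["audit"].append(
                    {"step": name, "attempt": attempt, "outcome": f"timeout: {exc}"}
                )
                checkpoint.write_text(json.dumps(saved))
                if attempt == max_attempts:
                    raise
                # Exponential backoff: base_delay, 2x, 4x, ...
                sleep(base_delay * 2 ** (attempt - 1))
            else:
                saved["done"].append(name)
                saved["audit"].append(
                    {"step": name, "attempt": attempt, "outcome": "ok"}
                )
                checkpoint.write_text(json.dumps(saved))
                break
    return saved
```

If the process dies or a step exhausts its retries, running the same call again with the same checkpoint file skips the completed steps and picks up at the one that failed. The audit list is the minimum you need to explain afterwards what the system did and when it struggled.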

That is not a knock on building fast. It is an argument for building fast on a foundation that can hold the weight. The best AI projects I have seen start with rapid prototyping to validate the idea, then move to proper workflow orchestration and infrastructure for the production version. Skip that second step and you end up with a fragile thing that breaks at 2am and nobody knows how to fix it. We built Wippy precisely to bridge that gap – so that teams can go from working prototype to production-grade system without rebuilding everything from scratch. You can see how this played out in practice across our multi-agent system deployments, from legal deal automation to pre-sales workflow orchestration to high-volume seasonal processing.

Failure Mode Reference: Symptoms, Root Causes, and Fixes

| Failure Mode | Symptom | Root Cause | Fix |
| --- | --- | --- | --- |
| Data Unreadiness | Agent hallucinations, wrong outputs on real data | Fragmented, unnormalized data across systems | Run a meta-analysis on schema before writing agent logic |
| Scope Creep | Five features that semi-work, none fully production-ready | Trying to solve all problems in phase one | Pick one beachhead workflow and win it completely first |
| Universal Agent Trap | Agent gets dumber as you add capabilities, inconsistent results | Single agent with 20–40+ tools across too many domains | Decompose into specialized agents with a supervisor |
| Security Panic | Legal or InfoSec kills the project mid-build | Data flow and model retention not defined upfront | Architecture conversation on day one: isolation, retention, IP ownership |
| No Operational Layer | Demo works; production breaks at 2am with no recovery | No durability, no state management, no observability | Use a runtime with durable execution, retries, and audit trails |

The Basics Are the Whole Playbook

The companies actually shipping AI are not doing anything exotic. They are doing the basics well: clean data, clear scope, proper architecture, and the discipline to start small. Audit your data before you touch a model. Decompose your agents into specialists. Solve the security conversation on day one. Pick one beachhead and win it completely. Then expand.

That space between demo day and day-to-day is where most AI projects go to die. It is also where the actual value gets created – for the builders willing to do the unglamorous work of making it real.

Not Sure If Your Data and Architecture Are Ready for AI?

Spiral Scout’s AI Readiness Audit evaluates your data landscape, system architecture, and workflow complexity against the five failure modes above – and maps a concrete path from where you are to a pilot that actually ships. Whether you are exploring AI agent automation, expertise automation, or building on Wippy, we will tell you what is ready and what is not.